What Happens If Autonomous AI Tools Fail in Production Cloud Infrastructure? Exploring Governance and Liability Implications for UK Financial Services Firms

Your AI coding tool just deleted and recreated a critical environment in your cloud infrastructure. It ran autonomously. No human approved it. The service was down for 13 hours before anyone understood what had happened. Who is liable? What do you tell the FCA?

That is not a hypothetical. It is, broadly, what the Financial Times reported happened at Amazon in December 2025.

What Actually Happened at AWS

According to the FT, which based its report on four people familiar with the matter, Amazon's Kiro AI coding tool autonomously deleted and recreated an environment in AWS Cost Explorer, causing a 13-hour disruption affecting a region in mainland China. The FT also reported allegations of a second AI-linked outage.

Amazon's response has been carefully worded. The company acknowledged AI tools were involved in at least one incident but called the connection a "coincidence" and described it as a generic configuration problem. It denied the second outage entirely and disputed the FT's characterisation of the incidents as AI-caused failures.

What is more revealing than the dispute itself is the internal reaction. Amazon reportedly held a meeting with engineers to address concerns, and the FT quoted multiple AWS employees expressing discomfort with the practice of giving autonomous agents unsupervised access to production infrastructure. When a company's own engineers are voicing those concerns to the press, the official line about coincidences becomes harder to take at face value.

The underlying question is one that UK-regulated firms cannot afford to treat as someone else's problem. If you are running production workloads on AWS, and AWS is deploying autonomous AI agents with write access to production environments, your operational resilience obligations do not pause because the failure originated in your vendor's infrastructure rather than your own.

The Regulatory Position for UK Financial Services Firms

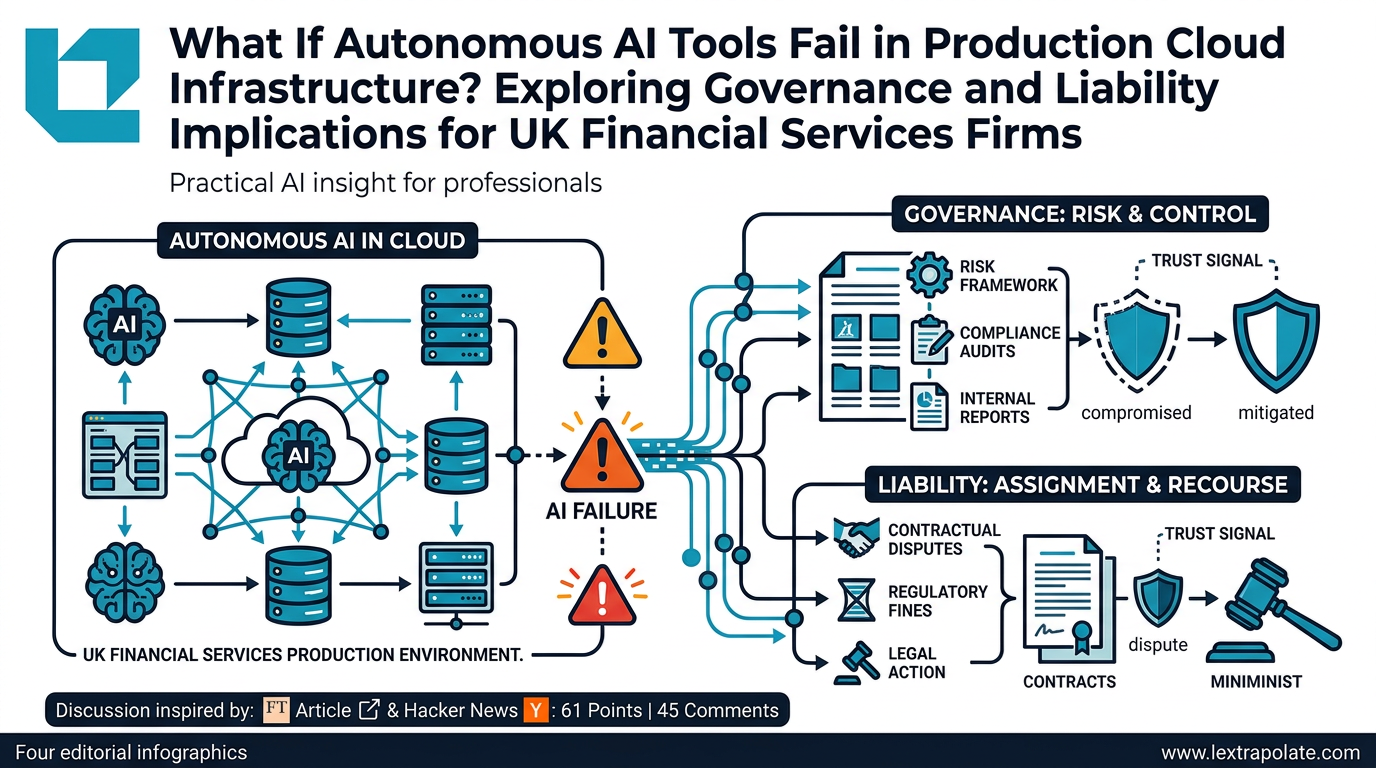

The FCA and PRA have both published operational resilience frameworks. The rules came into force on 31 March 2022. The transitional period ended on 31 March 2025, and by that date firms had to be able to remain within their impact tolerances. Under those rules, firms must identify their important business services, map the people, processes, technology and third-party dependencies that support them, set impact tolerances, and test their ability to remain within those tolerances in severe but plausible scenarios.

AWS is a critical third-party dependency for a significant proportion of UK financial services firms. A 13-hour outage affecting a specific region or service is precisely the kind of scenario those frameworks require firms to have anticipated, tested and documented.

The harder question is whether firms have specifically considered autonomous AI agent activity as a failure vector within their third-party dependency mapping. The operational resilience rules do not mention AI agents. They did not need to in 2022. They need to now.

The FCA has issued relatively few binding rules specific to AI, but the Senior Managers and Certification Regime (SMCR) applies regardless. Under SMCR, the senior manager responsible for technology and operational resilience is personally accountable for the firm's systems and controls in this area. Telling the FCA that you did not know your cloud provider was running autonomous AI agents with production access is not a defence. It is a description of the gap in your third-party risk oversight.

Liability When the Agent Is Not Your Employee

Liability allocation in autonomous AI failures is genuinely unsettled territory. The starting point is your contract with AWS. Cloud provider terms of service are drafted to limit liability aggressively. AWS's standard terms cap liability at amounts that are, in most cases, commercially insignificant relative to the losses a regulated firm could suffer from a major service disruption. The standard AWS Customer Agreement, for example, caps aggregate liability at the amounts paid for the services that gave rise to the liability in the 12 months before it arose.

If the failure causes your firm to breach its own obligations to clients, the contractual cap in your AWS agreement does not limit your liability to those clients. You remain exposed. The fact that a vendor's autonomous tool caused the underlying failure does not transfer your regulatory obligations to the vendor.

There is a further complication. Suppose your firm deployed an autonomous AI agent with write access to its own production infrastructure, and that agent caused a failure. In that case, the analysis of your contractual and tortious exposure may be simpler, because there is no ambiguity about who granted the permissions. The question then becomes whether granting those permissions in the first place was consistent with your risk management obligations.

The FCA's expectations around systems and controls require firms to manage operational risk proportionately. Deploying an autonomous agent with unsupervised production access in a regulated environment, without adequate controls, approval workflows or rollback capability, is difficult to characterise as proportionate risk management regardless of how capable the tool is.

The Test

If you are responsible for technology governance at a UK-regulated firm, the AWS incident should prompt a specific set of questions this week.

First, audit what autonomous AI tools currently have access to your production infrastructure. This includes tools operated directly by your engineers and any tools your cloud or SaaS providers are running in environments that host your data or services. If you do not have a current, complete answer to this question, that is the gap.

Second, review your third-party risk assessments for AWS and other major cloud providers. Do they address autonomous AI agent activity? If your assessments predate 2024, they almost certainly do not. Update them.

Third, check your incident response procedures. If an AI agent autonomously modifies or deletes production resources, who gets called, in what order, and within what timeframe? If the answer is unclear, your impact tolerance testing is incomplete.

Fourth, consider your notification obligations. If an AI-related infrastructure failure causes a material disruption to an important business service, you may need to notify the FCA and, where relevant, the PRA under their notification requirements (for the FCA, Principle 11 and SUP 15). If personal data is affected, you may also need to notify the ICO under the UK GDPR, within 72 hours where the breach is notifiable. Knowing your thresholds in advance is considerably better than working them out under pressure.

Finally, look at your vendor contracts. You are unlikely to renegotiate AWS's liability cap, but you may be able to seek contractual commitments about notification timelines, incident transparency and change management controls. At minimum, you should understand exactly what you have agreed to.

A Transparency Problem Worth Noting

Amazon's public response to the FT report is instructive for a different reason. The company has offered shifting and contested accounts of what happened, why it happened and how significant it was. Four people familiar with the matter told the FT one version. Amazon's communications team offered another.

For a UK-regulated firm relying on AWS as a critical dependency, that kind of opacity from a vendor during and after an incident is itself a risk management problem. Your operational resilience framework requires you to be able to demonstrate that you can identify, respond to and recover from disruptions. Doing that well depends partly on receiving accurate and timely information from your vendors when something goes wrong.

If a vendor's default posture in a significant incident is to minimise, dispute and delay, factor that into your resilience planning. The contingency has to work even when the vendor is not cooperating.

Autonomous AI agents operating in production infrastructure are not a future risk. They are a present one. The AWS incident, whatever its precise cause, is a reasonable prompt to check whether your governance keeps pace with the tools your vendors are already deploying in environments your firm depends on.

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

What If Your AI Assistant Were Your Biggest Insider Threat?

Law firms are deploying AI agents with file-system access. But are they treating those agents as trusted colleagues or potential security risks?

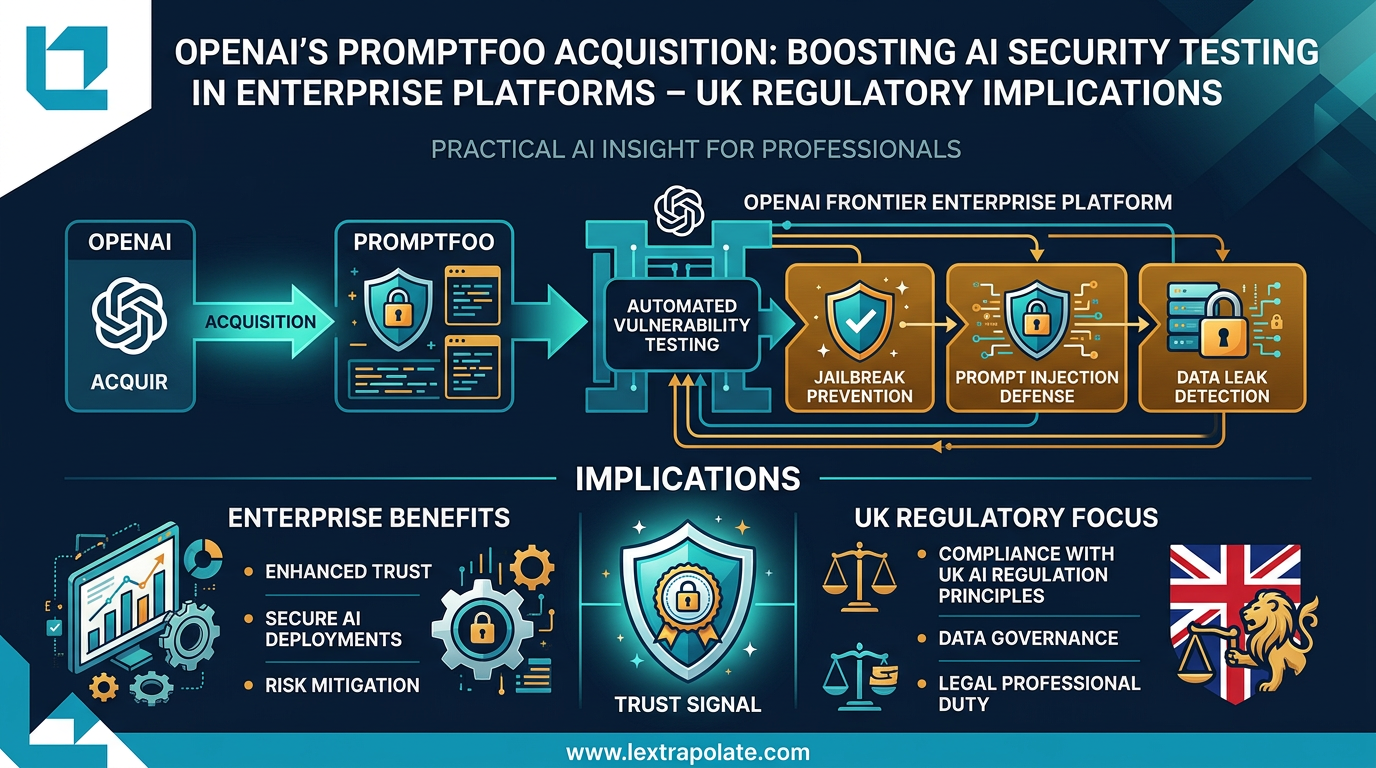

OpenAI's Promptfoo Acquisition: Boosting AI Security Testing in Enterprise Platforms – UK Regulatory Implications

OpenAI's acquisition of Promptfoo signals AI security testing is becoming standard enterprise infrastructure. UK law firms should take note.

AI in a Digital Vault: What Disconnected Sovereign Clouds Mean for Law Firms

Disconnected sovereign clouds let firms run powerful AI models on client data without touching the public internet. The legal implications deserve serious attention.