What if you can no longer trust the video your client sent you?

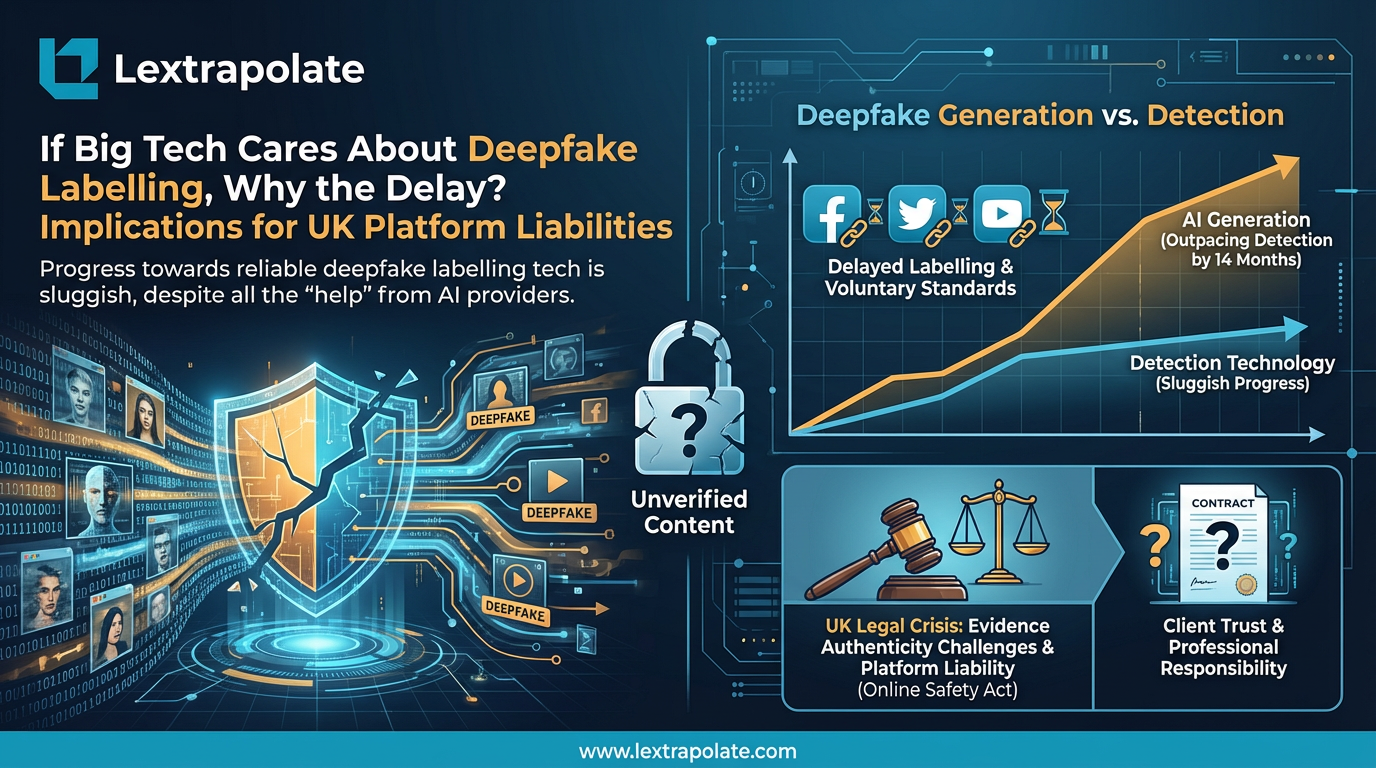

In 2026, the English law of evidence faces a fundamental crisis: deepfake generation has outpaced detection by a margin of 14 months. For a solicitor or barrister, the question is no longer whether a video looks real, but whether its cryptographic provenance can survive a challenge in the High Court.

The test is not whether the video looks real. The test is whether it is.

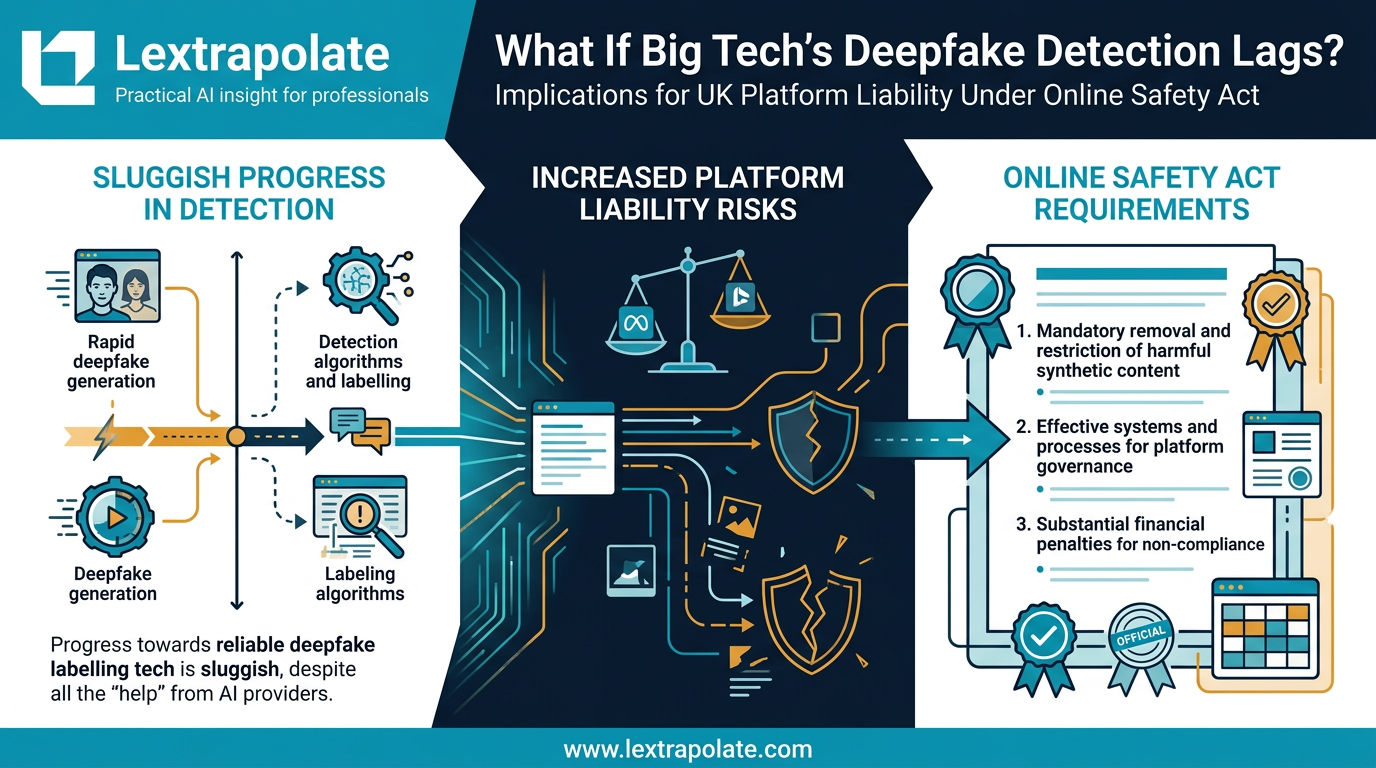

Deepfake generation has outrun deepfake detection, and the gap is widening. That is not an alarmist claim. It is the current technical consensus. The models producing synthetic video are improving faster than the tools trying to identify it. Detection methods that worked last year fail against content produced by this year's generation models. The spatial artefacts that used to betray AI-generated faces, the unnatural blinking, the texture inconsistencies around hairlines, are largely gone. Voice replication has reached the point where audio alone is not a reliable tell.

Adam Mosseri, head of Instagram, put it bluntly at the end of 2025: authenticity is becoming infinitely reproducible. He was talking about creator culture. The same observation applies, with considerably higher stakes, to legal proceedings.

The technical picture is not reassuring

Detection tools exist. Platforms including Meta have invested in them. The Coalition for Content Provenance and Authenticity (C2PA) has developed a watermarking and provenance standard designed to create a chain of custody for digital content, cryptographically linking media files to their origin. Some AI providers are embedding these signals in content they generate.

The problem is twofold. First, C2PA provenance data is voluntary. A bad actor generating a deepfake to deploy in fraud or litigation has no reason to attach it. Second, the absence of provenance data tells you nothing. Authentic content that predates C2PA adoption, or that has been processed or compressed after creation, will have no provenance signal either. From a missing signal alone, you cannot distinguish "definitely fake" from "probably genuine but unverified."
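The asymmetry can be made concrete with a minimal sketch. It assumes some separate C2PA validation tool has already reported whether provenance data was found and whether its signature checked out; the names `ProvenanceStatus` and `classify_provenance` are hypothetical, invented for illustration, and do not belong to any real library.

```python
from enum import Enum
from typing import Optional


class ProvenanceStatus(Enum):
    VERIFIED = "provenance data present and signature validated"
    INVALID = "provenance data present but failed validation"
    UNVERIFIED = "no provenance data found"


def classify_provenance(has_provenance: bool,
                        signature_valid: Optional[bool]) -> ProvenanceStatus:
    """Map the output of a (hypothetical) C2PA check to one of three states.

    A missing signal is reported as UNVERIFIED, never as fake: the file
    may simply predate C2PA or have been re-compressed after creation.
    """
    if not has_provenance:
        return ProvenanceStatus.UNVERIFIED
    if signature_valid:
        return ProvenanceStatus.VERIFIED
    return ProvenanceStatus.INVALID


# A clip forwarded through a messaging app, with its metadata stripped:
print(classify_provenance(has_provenance=False, signature_valid=None).name)
```

Note that there is deliberately no "fake" state. Provenance checks alone can confirm an origin or flag tampering with the provenance data, but they cannot condemn a file that carries none.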

Commercial detection tools from providers such as McAfee have proliferated, but independent testing shows inconsistent accuracy, particularly against content generated by newer models. The honest position is that no tool currently available offers reliable detection across all media types and generation methods. Forensic analysis by specialists can raise or lower the probability of authenticity, but it cannot deliver certainty.
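A short worked example shows why a detector's verdict shifts probability rather than settling the matter. The figures below are invented purely for illustration and are not measurements of any real tool: a 5% prior that the file is synthetic, a detector that flags 90% of fakes, and one that also wrongly flags 10% of genuine files.

```python
def posterior_fake(prior_fake: float,
                   true_positive_rate: float,
                   false_positive_rate: float) -> float:
    """Bayes' rule: probability a file is fake, given that the detector flagged it."""
    flagged_and_fake = true_positive_rate * prior_fake
    flagged_and_genuine = false_positive_rate * (1 - prior_fake)
    return flagged_and_fake / (flagged_and_fake + flagged_and_genuine)


# Hypothetical inputs, chosen only to illustrate the arithmetic.
p = posterior_fake(prior_fake=0.05, true_positive_rate=0.90, false_positive_rate=0.10)
print(f"{p:.0%}")  # about 32%
```

On those assumed numbers, a positive flag moves the probability of fabrication from 5% to roughly 32%. That is a material shift, and still well short of proof in either direction.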

For a lawyer, probability is not enough.

What the law currently requires, and where it falls short

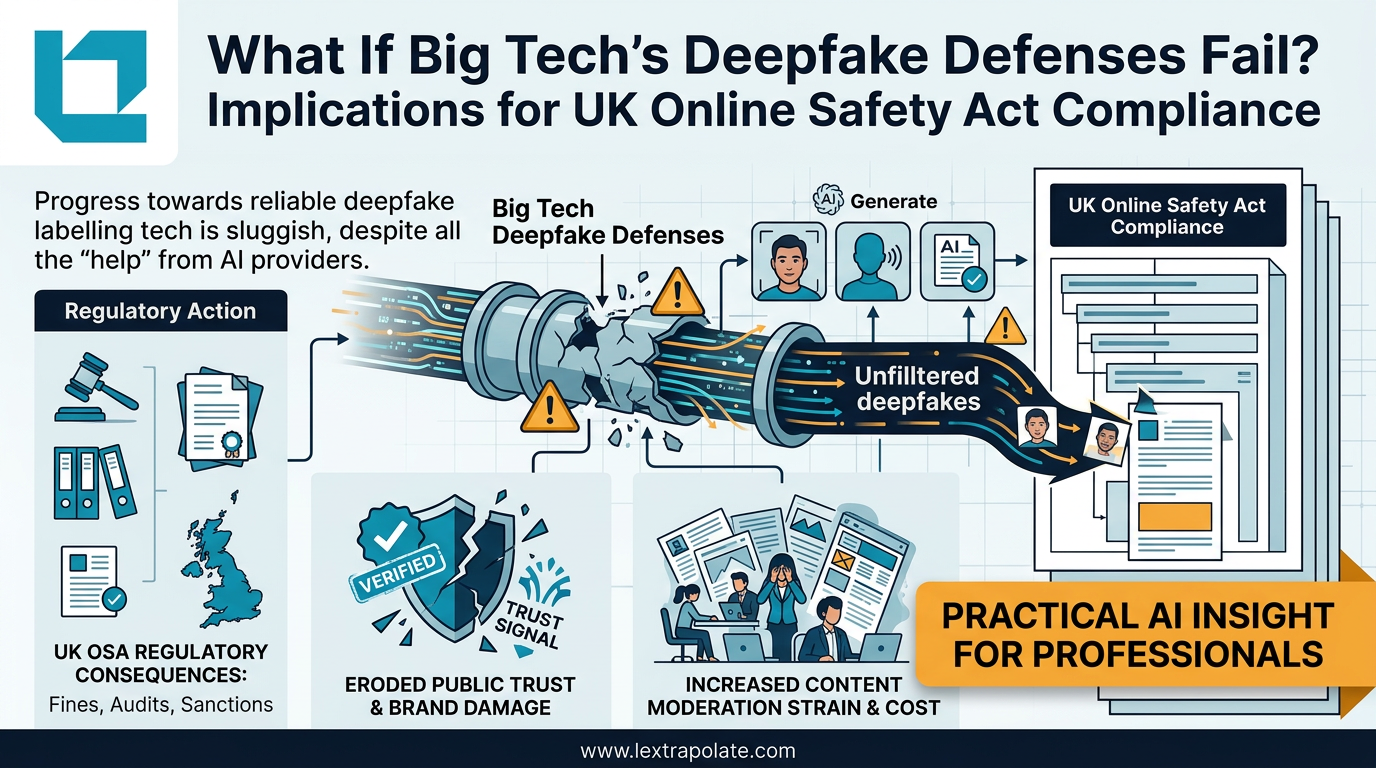

The Online Safety Act 2023 imposes obligations on regulated platforms to identify and manage harmful deepfakes, including synthetic content used to spread misinformation or facilitate fraud. Ofcom's enforcement powers are real. But the Act addresses platforms, not individual pieces of content, and it does not create any direct mechanism for verifying the authenticity of media files before they enter legal proceedings.

The Fraud Act 2006 already captures deepfake-facilitated fraud. A synthetic video fabricated to deceive a court or manufactured to support a false insurance claim falls squarely within section 2 (fraud by false representation). The criminal liability is clear. What is less clear is how a practitioner is supposed to identify the fraud before it is already embedded in the case.

The Civil Procedure Rules and the Criminal Procedure Rules both contain provisions for the disclosure and authentication of electronic evidence, but they were drafted in a world where the authenticity of a video file was not routinely in question. The courts have not yet caught up with a reality where any video could plausibly be synthetic. There is no prescribed standard for digital media authentication in civil or criminal procedure in England and Wales that addresses AI-generated content specifically.

The Data (Use and Access) Act 2025 touches on AI-generated data in limited ways, but it does not fill this gap. We are, for now, working with rules that assume the problem is document forgery, not algorithmic fabrication at scale.

The professional conduct dimension

A barrister or solicitor who places reliance on digital evidence without any verification process is not simply taking a technical risk. There is a professional conduct dimension here that the profession has not yet fully confronted.

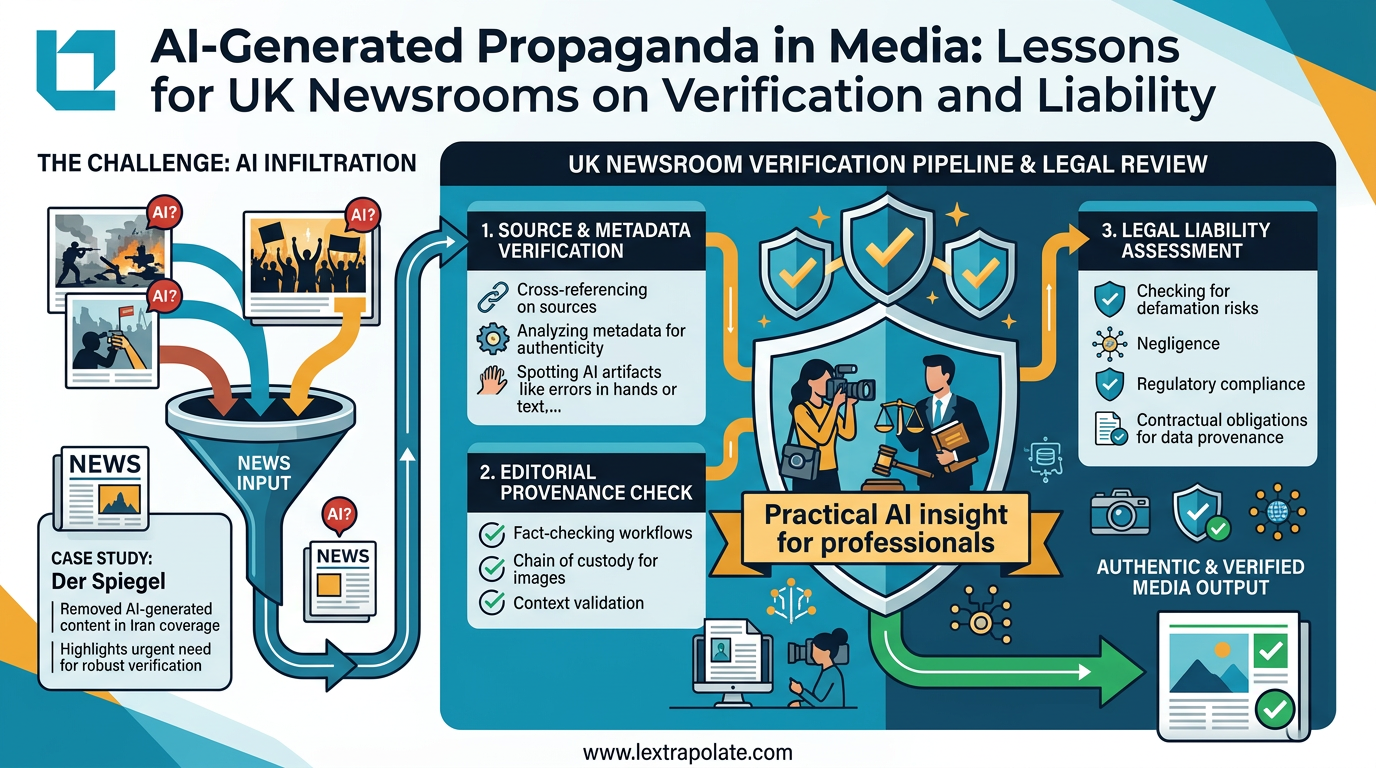

The duty of candour to the court, under the BSB Handbook for barristers and the SRA Code of Conduct for solicitors, requires practitioners not to mislead the court. Placing fabricated evidence before a tribunal without having taken reasonable steps to verify it would, in serious cases, engage that duty. "I thought it looked genuine" is not a defence that will satisfy a disciplinary tribunal in 2026.

The standard of "reasonable steps" will evolve as the problem becomes better understood. Firms that establish verification workflows now will be ahead of that curve. Firms that wait for a headline-grabbing case to force the issue will be playing catch-up, possibly at a client's expense.

What this looks like on Monday morning

Verification of digital media does not require a forensic laboratory for every case. It requires a tiered approach based on materiality.

For high-value media (video or audio evidence central to a disputed issue), instruct a digital forensics expert with specific competence in AI-generated content detection. Their report should address provenance, technical artefacts, and the limits of their conclusions. Brief the expert properly: a generic "is this genuine?" instruction is insufficient.

For lower-stakes material, apply a structured scepticism checklist. Check whether C2PA provenance data is present and consistent. Consider the source chain: where did this file come from, and is there a continuous, explicable custody record? Does the content contain anything implausible or inconsistent with other evidence in the case? If any of those questions raise doubt, escalate.
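For firms that want to build the tiers into a case management system, the logic might be sketched as follows. This is one possible reading of the approach, not a prescribed standard: the field names and the `triage` function are hypothetical, and the sketch assumes that missing C2PA data is treated as neutral while provenance data that is present but inconsistent counts as a doubt.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MediaChecklist:
    central_to_disputed_issue: bool
    # None = no C2PA data found (neutral); True = present and consistent;
    # False = present but inconsistent.
    provenance_consistent: Optional[bool]
    custody_chain_explained: bool
    consistent_with_other_evidence: bool


def triage(item: MediaChecklist) -> str:
    """Return the next step for a media file under the tiered approach."""
    # Tier 1: material central to a disputed issue always goes to an expert.
    if item.central_to_disputed_issue:
        return "instruct forensic expert"

    # Tier 2: structured scepticism; any single doubt triggers escalation.
    doubts = []
    if item.provenance_consistent is False:
        doubts.append("provenance data inconsistent")
    if not item.custody_chain_explained:
        doubts.append("gap in custody record")
    if not item.consistent_with_other_evidence:
        doubts.append("conflicts with other evidence")

    if doubts:
        return "escalate: " + "; ".join(doubts)
    return "proceed and record the checks"


# A background clip with no C2PA data but a clean custody record:
print(triage(MediaChecklist(False, None, True, True)))
```

The design choice worth noticing is that the checklist never returns "genuine". The best outcome is that no doubt was found and the checks were recorded.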

Document the process. If your firm has taken reasonable steps to verify material and those steps are recorded, you have a defensible position. If you have done nothing and the content turns out to be synthetic, you do not.
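One low-cost way to record the process is to fingerprint each file on receipt and append a dated entry to a log. The sketch below uses only Python's standard library; the function names, the log format (one JSON object per line) and the fields recorded are assumptions made for illustration, not a recognised evidential standard.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Compute the SHA-256 fingerprint of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def record_verification(media: Path, log: Path,
                        received_from: str, steps_taken: list[str]) -> dict:
    """Append one verification entry to a JSON-lines log and return it."""
    entry = {
        "file": media.name,
        "sha256": sha256_of_file(media),
        "received_from": received_from,
        "steps_taken": steps_taken,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }
    with log.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(entry) + "\n")
    return entry
```

A call such as `record_verification(Path("clip.mp4"), Path("verification_log.jsonl"), "client, by email", ["checked source chain"])` would add one line to the log. The hash shows only that the file has not changed since it was logged. It says nothing about whether the content was authentic when it arrived, which is why the steps taken are recorded alongside it.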

Firms with large volumes of media-heavy work (personal injury, financial crime, family proceedings involving surveillance footage) should consider whether their standard disclosure protocols need updating. The assumption that a file bearing an authentic-looking filename and metadata is what it appears to be is no longer safe.

Where this leaves us

The technology is not going to solve this cleanly or quickly. C2PA provenance standards are promising but incomplete. Detection tools are reactive by design: they identify artefacts left by yesterday's models, not today's. The regulatory framework addresses platforms, not practitioners.

That leaves the profession carrying a responsibility it did not ask for and has not yet fully accepted. The integrity of evidence in legal proceedings depends, increasingly, on lawyers asking a question they were never trained to ask: did this actually happen, or was it generated?

That is an uncomfortable shift. It is also, now, a necessary one.

If your firm does not have a policy on digital media verification, draft one. If your client hands you a video that matters, verify it before you rely on it. The stakes of getting this wrong, for your client, for the proceedings, and for your own practice, are not hypothetical.


Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.
