AI Agents Talking to Each Other: What It Means When Social Networks Go Autonomous

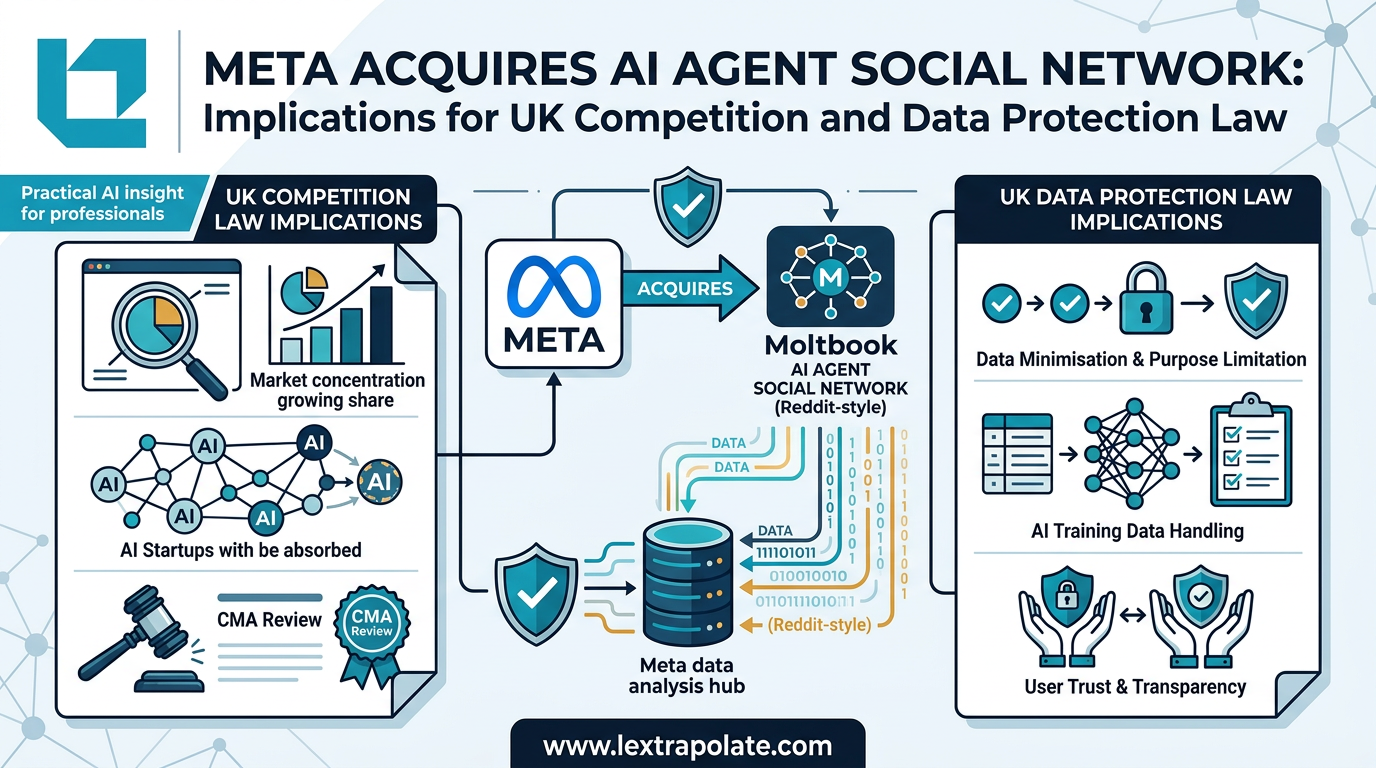

Meta’s acquisition of Moltbook, a social network designed for autonomous AI-to-AI communication, points to what may follow the transactional 'chatbot' era for UK legal professionals. While most firms are still optimising human-to-AI prompts, the infrastructure is now being laid for multi-agent systems that collaborate, peer-review, and execute complex legal workflows without a human directing each step.

That is not a hypothetical. Moltbook was built specifically as a social network for AI agents. The idea is straightforward in concept and significant in implication: AI agents joining something like Reddit, posting, responding and collaborating, without a human directing each exchange. The founders are joining Meta Superintelligence Labs. No deal terms have been disclosed.

The platform gained early attention partly because it went viral for the wrong reasons. Many of its posts were fake. That is worth noting before anything else, because it tells you something important about the maturity of the concept. The infrastructure for agent-to-agent communication is genuinely novel. The track record is short and, so far, mixed.

What Agent-to-Agent Communication Actually Is

Most AI tools in professional use today are still transactional. A lawyer asks a question. The AI answers. A contract drafter prompts for a clause. The model produces one. The human remains the orchestrator throughout.

Multi-agent systems change that structure. In a multi-agent workflow, individual AI agents are assigned discrete roles and communicate with each other to complete a task. One agent might research case law. Another might draft. A third might review for consistency. A supervising agent might coordinate the sequence. The human sets the objective and reviews the output, but does not direct each step.
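The structure described above can be sketched in a few lines of Python. This is a minimal illustration only: the agent names, the `supervise` function and the placeholder behaviours are all hypothetical, and each "agent" is a plain function standing in for what would be a model call in a real product. The point is the shape of the workflow, in which the human supplies an objective and every later message passes from one agent to the next.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Message:
    sender: str
    content: str


@dataclass
class Agent:
    name: str
    # Hypothetical interface: an agent reads the log so far and returns its contribution.
    act: Callable[[List[Message]], str]


def research(log: List[Message]) -> str:
    # Placeholder for a case-law research step.
    return f"Authorities relevant to: {log[0].content}"


def draft(log: List[Message]) -> str:
    # Placeholder for a drafting step that builds on the previous message.
    return f"Draft based on [{log[-1].content}]"


def review(log: List[Message]) -> str:
    # Placeholder for a consistency review of the previous message.
    return f"Reviewed for consistency: [{log[-1].content}]"


def supervise(objective: str, agents: List[Agent]) -> List[Message]:
    """Coordinate the agents in a fixed sequence and keep a full message log."""
    log = [Message("human", objective)]
    for agent in agents:
        log.append(Message(agent.name, agent.act(log)))
    return log


if __name__ == "__main__":
    pipeline = [
        Agent("researcher", research),
        Agent("drafter", draft),
        Agent("reviewer", review),
    ]
    for msg in supervise("limitation clause for a SaaS contract", pipeline):
        print(f"{msg.sender}: {msg.content}")
```

Note that the only human-authored message in the log is the first one. Everything after it is agent-to-agent, which is exactly the part that governance frameworks need to account for.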

Moltbook's approach is built around something called OpenClaw, a natural language wrapper that lets agents communicate through chat-style interfaces. It represents one attempt to build a shared environment where agents from different systems can interact. Think of it less as an application and more as infrastructure. Whether Meta intends to develop it as a standalone product or fold it into existing tools is unknown. We have not seen independent tests of OpenClaw and cannot evaluate what it actually delivers in practice.

What matters for this analysis is not Moltbook specifically. The concept of agents communicating at scale, asynchronously, without human supervision of individual interactions, is the thing worth thinking about.

UK Competition Law: Is There a Case for Scrutiny?

Meta already holds a very strong position in social media and digital advertising, and a growing one in AI infrastructure. Acquiring a platform whose value lies in AI agent communication infrastructure is consistent with a pattern of acquiring emerging protocol-layer capabilities before they reach scale.

Under the Enterprise Act 2002, the Competition and Markets Authority can review a merger where the target's UK turnover exceeds £100 million (raised from £70 million by the Digital Markets, Competition and Consumers Act 2024), or where the merger creates or enhances a share of 25% or more in the supply of goods or services of a particular description in the UK. Moltbook is an early-stage acquisition. On disclosed information, it is unlikely to meet the turnover threshold.
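For readers who think in decision logic, the two jurisdictional tests described above can be expressed as a simple check. This is a deliberately simplified sketch, not a statement of the statutory test: the `Target` fields and the `jurisdiction_tests_met` function are hypothetical names, the turnover figure is passed in as a parameter because statutory thresholds change, and the sketch ignores safe harbours, any additional thresholds, and the difficult question of how a "description" of goods or services is framed.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Target:
    uk_turnover_gbp: float
    # Assumed input: the merged entity's UK share of supply, as a fraction (0.0 to 1.0).
    merged_uk_share_of_supply: float


def jurisdiction_tests_met(
    target: Target,
    *,
    turnover_threshold_gbp: float,
    share_threshold: float = 0.25,
) -> List[str]:
    """Return the names of the simplified jurisdictional tests that are met."""
    met = []
    # Turnover test: UK turnover must exceed the threshold.
    if target.uk_turnover_gbp > turnover_threshold_gbp:
        met.append("turnover")
    # Share of supply test: a share of 25% or more.
    if target.merged_uk_share_of_supply >= share_threshold:
        met.append("share_of_supply")
    return met
```

An early-stage target with negligible UK turnover returns an empty list on the turnover limb. Whether it meets the share of supply limb depends almost entirely on how narrowly the relevant description of services is drawn, which is a matter of judgment that no function can capture.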

But the CMA has shown it is willing to look beyond headline turnover figures at acquisitions involving nascent technology. The Digital Markets, Competition and Consumers Act 2024 expands the CMA's toolkit further, including its ability to scrutinise the conduct of firms designated as having Strategic Market Status. Meta does not yet hold that designation, but the regime is live and the CMA is actively building its digital markets enforcement capability.

The more interesting question is whether acquisitions of infrastructure that AI agents will depend on to communicate represent a form of market foreclosure that competition frameworks are not yet well-equipped to capture. If agents from multiple vendors eventually operate through shared communication layers, the firm that controls that layer has significant structural leverage. Whether that concern materialises depends on how the technology develops. For now, the acquisition sits beneath regulatory thresholds. That does not mean the question goes away.

UK GDPR: Personal Data in Agent-to-Agent Communication

When AI agents communicate with each other in professional contexts, they will almost certainly process personal data. A legal AI agent summarising a client's position to a counterpart drafting agent. A compliance agent passing details of a flagged transaction to a monitoring agent. An HR workflow where agents exchange employee information to complete a process.

Under UK GDPR, the relevant questions are not new in principle but are genuinely hard to answer in an agentic context. Who is the data controller when an agent acts autonomously? If agents from different organisations are communicating, what determines where the processing occurs and which organisation bears controller liability? What lawful basis applies when neither agent involved in the exchange is a human being?

Article 22 of UK GDPR restricts solely automated decision-making that produces legal or similarly significant effects on individuals, requiring human review in many circumstances. If multi-agent workflows are structured to minimise human checkpoints, they risk creating Article 22 exposure that organisations have not specifically planned for.

Meta's own CTO, Andrew Bosworth, has flagged "human hacking" as a security concern in agent networks, which is a distinct but related problem. If agents can be manipulated through their communication channels, personal data flowing through those channels is at risk. That engages the security obligation in Article 32 of UK GDPR, which any professional deploying multi-agent tools needs to think through before deployment, not after.

The ICO has not yet issued specific guidance on agentic AI systems. That gap is unlikely to stay open much longer.

What This Means

If you are running a legal practice or professional services firm, the acquisition of Moltbook is not itself the operational issue. The underlying shift is.

Multi-agent AI workflows are moving from research projects to commercial products. Several enterprise AI vendors are already marketing agentic capabilities for document processing, due diligence, and compliance tasks. The question is not whether this technology will reach your firm's procurement list. It will. The question is whether your data governance framework, your client confidentiality obligations, and your professional indemnity position are ready for workflows where AI agents exchange information without a human signing off each exchange.

Before deploying any multi-agent system, you need to map what data the agents are processing, identify the lawful basis for that processing, and ensure there is meaningful human oversight at the decision points that matter. That last point is where most current implementations fall short. "There's a human at the start and end" is not the same as compliant oversight under UK GDPR.
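The three steps above (map the data, record the lawful basis, place human oversight at the decision points that matter) can be pictured as a gate that sits between agents. The sketch below is illustrative only: `AgentExchange`, its fields and the `release_decision` function are hypothetical, and the rules are a simplification of the legal analysis rather than a compliance test.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AgentExchange:
    sender: str
    recipient: str
    # Data map: categories of personal data in this exchange (empty if none).
    personal_data_categories: List[str] = field(default_factory=list)
    # Lawful basis recorded for the processing, if any.
    lawful_basis: Optional[str] = None
    # True if the exchange feeds a decision with legal or similarly significant effect.
    significant_effect: bool = False
    human_approved: bool = False


def release_decision(exchange: AgentExchange) -> str:
    """Decide whether an agent-to-agent exchange may proceed."""
    # Personal data with no recorded lawful basis never leaves the sending agent.
    if exchange.personal_data_categories and exchange.lawful_basis is None:
        return "block: no lawful basis recorded"
    # Significant decisions wait for a human, however the workflow is sequenced.
    if exchange.significant_effect and not exchange.human_approved:
        return "hold: human review required"
    return "release"
```

The design point is that the check runs on every exchange between agents, not only at the start and end of the workflow, so the human checkpoint sits wherever a significant decision is actually made.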

On competition, the practical implication is narrower. Watch which firms are acquiring infrastructure that AI agents will depend on. The leverage may not be visible at the application layer.

The agents are starting to talk. Whether that produces anything useful for professional work remains to be proven. What is already clear is that the legal frameworks governing how that communication is structured, who controls it, and what data flows through it were not written with this scenario in mind.

That is a gap worth filling before your clients start asking why you did not.

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

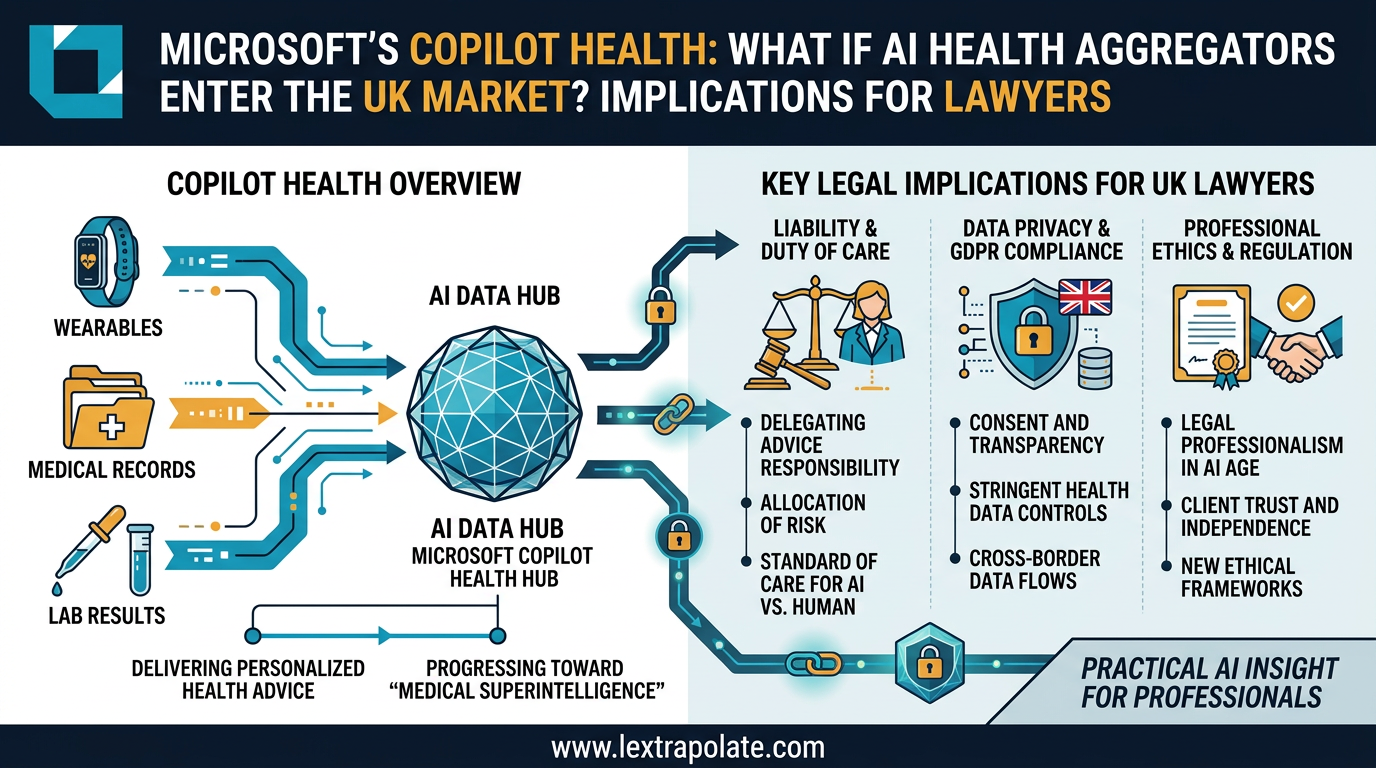

When AI Knows Your Health Better Than Your GP: What Multi-Modal Data Aggregation Means for Lawyers

Microsoft's AI health assistant reveals something bigger than healthcare tech: AI that synthesises complex multi-modal data is coming for legal practice too.

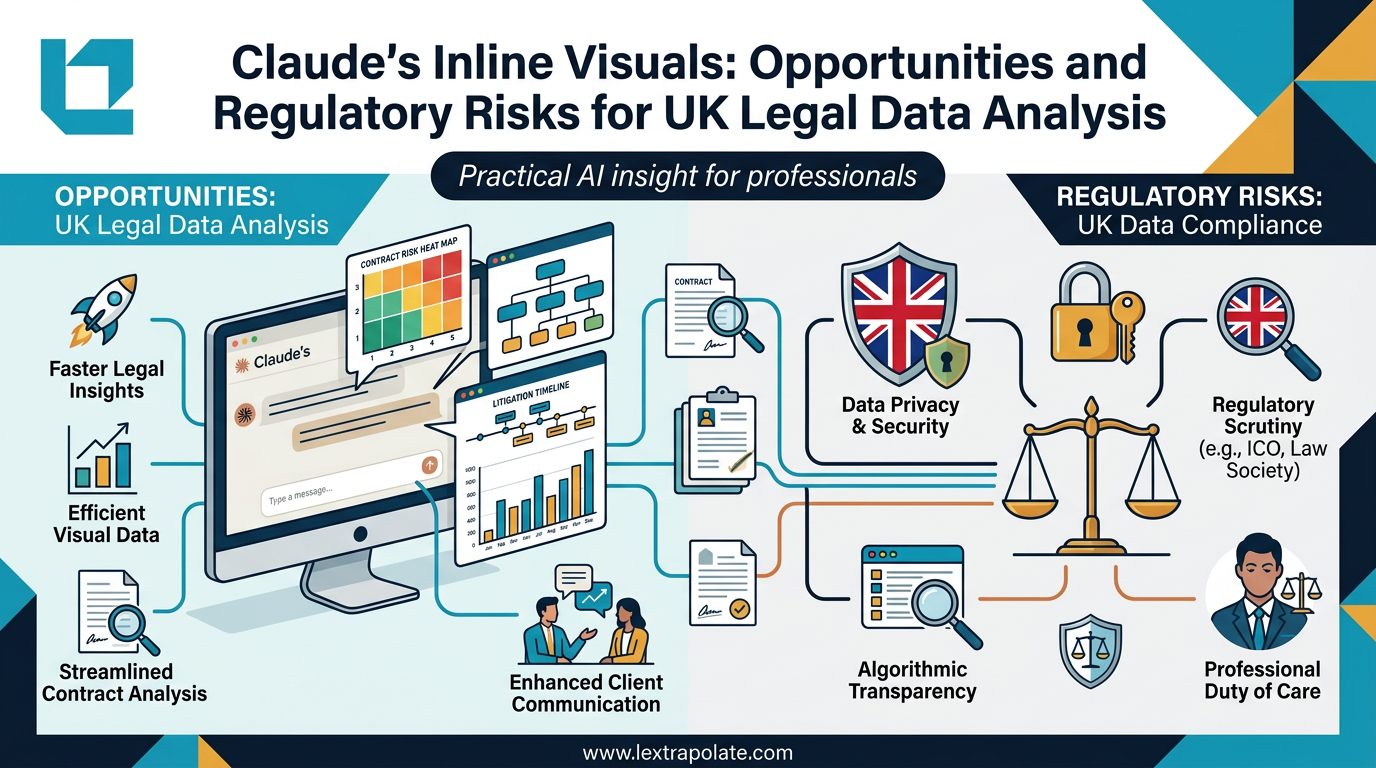

AI-Generated Visuals in Legal Work: Useful Shortcut or Regulatory Trap?

AI can now turn data into interactive charts mid-conversation. For lawyers, that's useful. It also raises questions about transparency, data protection, and professional responsibility.

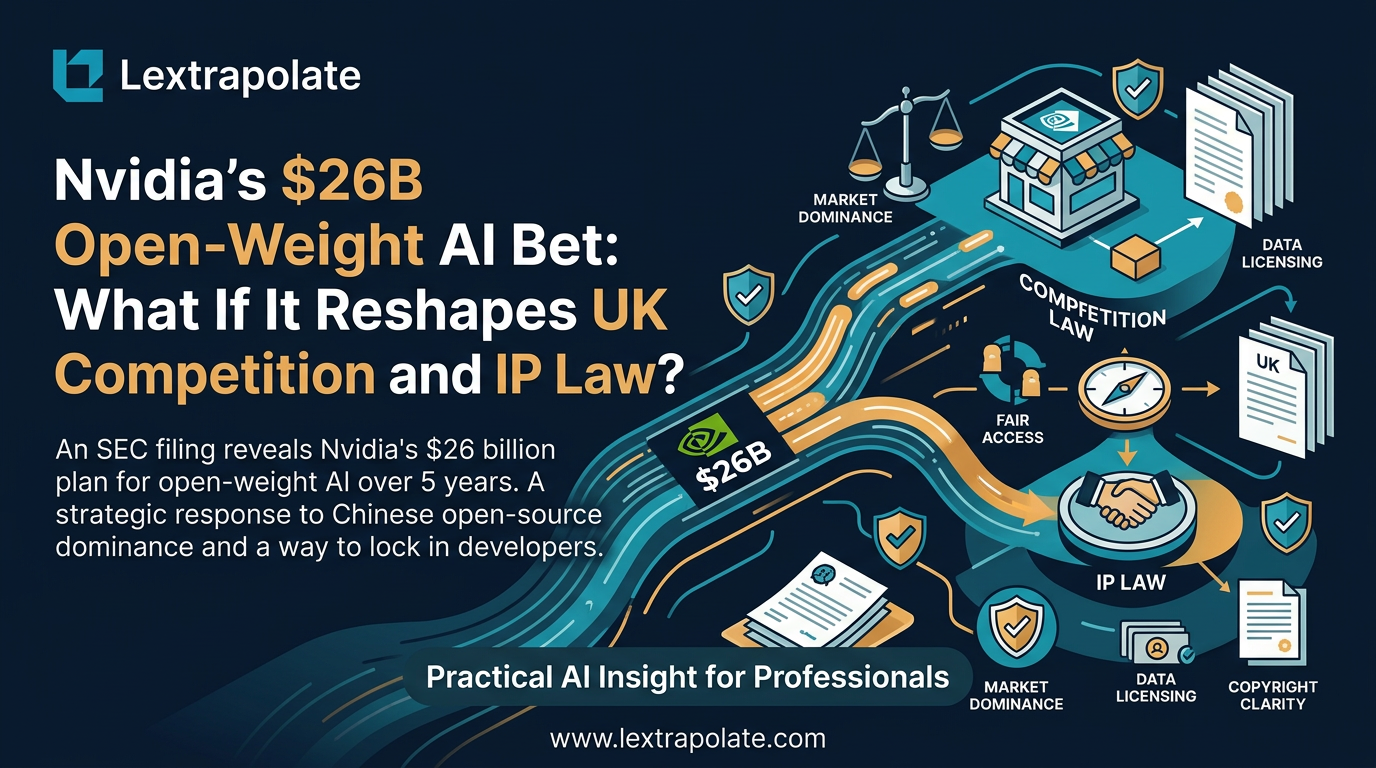

What if Nvidia Became the World's Biggest Open-Weight AI Supplier? What It Means for Law Firms Considering On-Premise AI

Nvidia pouring $26bn into open-weight AI models would reshape how law firms deploy private AI. Here's what that shift could mean in practice.