AI-Generated Visuals in Legal Work: Useful Shortcut or Regulatory Trap?

You get a set of financial data from a client on a Thursday afternoon. Counsel's opinion is needed by Monday. The analysis is straightforward, but presenting it clearly will take half a day in Excel and another half in PowerPoint. That presentation time is the problem.

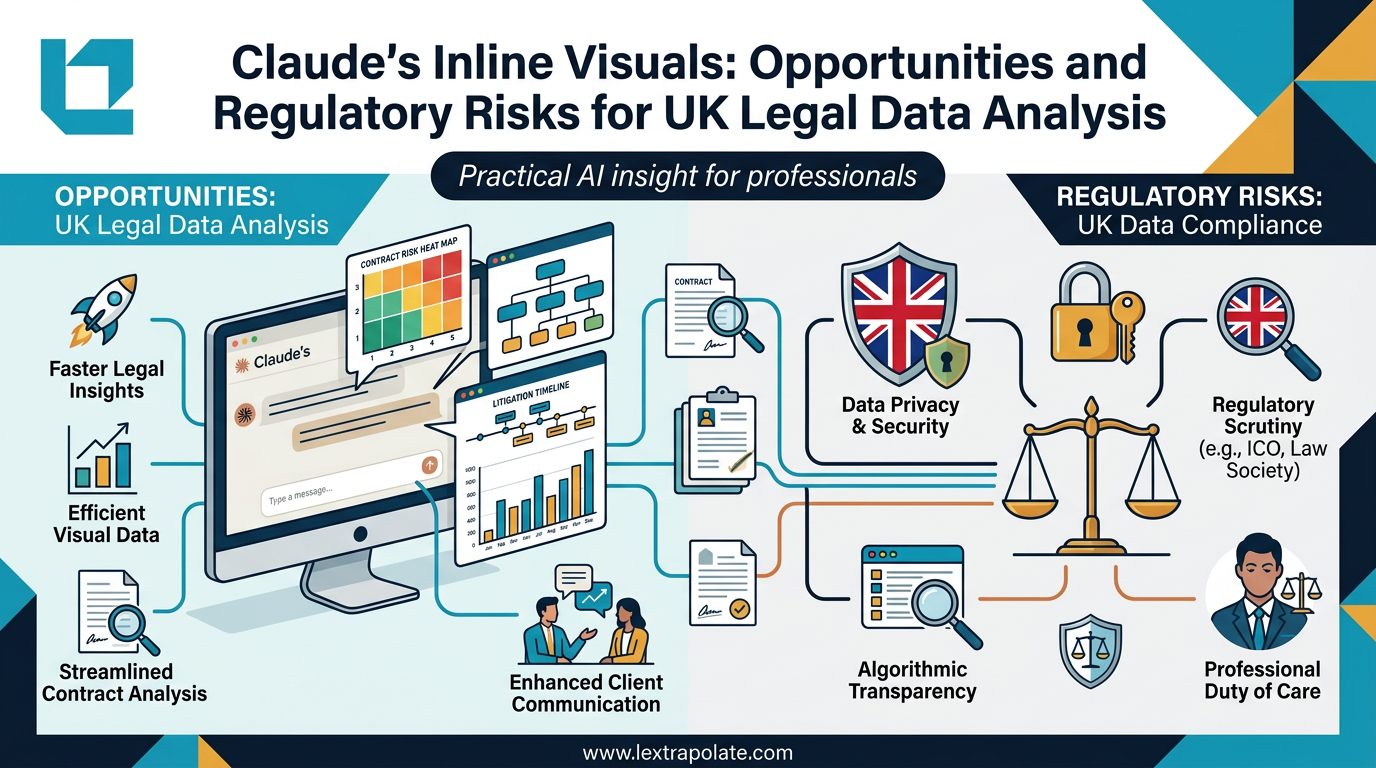

AI tools that generate visual outputs inline, without exporting to a separate application, promise to compress that process significantly. On 12 March 2026, Anthropic launched a beta feature enabling its Claude model to produce interactive charts, diagrams, and visualisations directly within a conversation. The model auto-detects when a visual would help or generates one on request. Initial hands-on coverage, including a review by TechRadar, confirms the basic functionality works. We have not tested it ourselves.

The specific product is not really the point. What matters is that AI-generated visualisation is now a practical option for legal and advisory work, not a theoretical one. That warrants a careful look at both the opportunity and the obligations it creates.

What Changes When AI Generates the Chart

The conventional workflow involves a human constructing a chart: choosing axes, selecting chart types, deciding what to include, spotting anomalies. That process forces the analyst to interrogate the data. It is slow, but the slowness has analytical value baked into it.

AI-generated charts short-circuit that process. The model decides what to display and how. For a busy litigator or a consultant preparing a board pack, the speed gain is real. For a lawyer who needs to stand behind an analysis presented to a court or a client, the question is whether they understood what the model decided and why.

This is not a hypothetical concern. In Harber v Commissioners for HMRC [2023] UKFTT 1007 (TC), the First-tier Tribunal found that authorities relied on by a self-represented appellant had been generated by an AI tool and did not exist. Visual outputs generated by AI carry the same kind of risk for professionals: material put forward without being verified or understood. If you present a chart to a client or in proceedings, you are presenting it. The model is not.

The capability is genuinely useful. Used properly, it could allow a junior fee-earner to produce client-ready analysis in an hour rather than half a day. But "used properly" is doing significant work in that sentence.

The Data Protection Position

The obvious risk that most commentary skips past is this: to get a useful chart from an AI model, you have to give it data. In legal practice, that data is often personal.

Consider a personal injury firm analysing claimant data to identify settlement patterns. Or a family practice looking at financial disclosure across a cohort of cases. Or a corporate team mapping counterparty exposure across a transaction. In each case, uploading data to a third-party AI tool engages obligations under the UK GDPR and the Data Protection Act 2018.

The key questions are familiar but matter more when data moves to an external model. Is there a lawful basis for processing? Has the data been adequately pseudonymised before it leaves the firm? What does the AI provider's data processing agreement say about retention and training? These are not new questions, but the frictionless nature of inline visualisation makes it easier to paste in a spreadsheet without thinking them through.

The ICO's guidance on AI and data protection, updated in 2024, is clear that purpose limitation applies to AI processing just as it does to any other form. Data collected for litigation purposes cannot simply be fed into an AI tool for analytical convenience without a proper basis. Firms that have invested in clean data governance for their core systems sometimes forget to apply the same standards to ad-hoc AI use.

Anthropic's data handling policies, like those of most frontier AI providers, give enterprise users contractual assurances, but the details matter and vary by plan. This is not a reason to avoid the capability. It is a reason to establish firm-level data handling rules before individual fee-earners start experimenting.

Transparency Obligations and the AI Regulatory Direction of Travel

The UK AI regulatory framework is principles-based rather than prescriptive, at least for now. The AI Safety Institute and the previous government's pro-innovation AI strategy left sectoral regulators to develop their own guidance. The SRA has been cautious but consistent: AI use in practice is permissible provided the solicitor remains competent, maintains oversight, and discloses AI use to clients where relevant.

What does that mean for AI-generated visuals? In client-facing work, the position is relatively straightforward: if a material part of your analysis was generated by AI, your client ought to know. Not a lengthy disclaimer, but disclosure that the output was AI-assisted. Most clients will not object. Some will want to understand more. A few will not consent. That is their right.

In proceedings, the position is tighter. The disclosure obligations under CPR Practice Direction 57AC on trial witness statements, and the courts' increasingly robust treatment of AI-generated content (see Ayinde and its aftermath), suggest that presenting AI-generated visual analysis without flagging its provenance is professionally risky. Courts are not uniformly hostile to AI-assisted work, but they require transparency about process.

The longer-term regulatory direction supports this. The EU AI Act, which UK firms will encounter in cross-border work, classifies certain AI systems used in the administration of justice as high-risk. UK equivalents may follow. Firms that build disclosure and oversight habits now will be better placed if and when formal requirements arrive.

What This Actually Means

If you want to use AI-generated visualisation in practice this week, three things need to be in place before you start.

First, data hygiene before upload. Strip or pseudonymise personal data before it goes anywhere near an external AI tool. This is not optional and it is not slow if you build it into the workflow from the start.
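As a rough illustration of what building this into the workflow could look like, here is a minimal Python sketch that drops direct identifiers and replaces a linking field with a keyed hash before a spreadsheet export goes anywhere. The column names (`name`, `email`, `claimant_id`), the `SECRET_KEY` value, and the helper names are all hypothetical and would need to match your own data. Pseudonymised data is still personal data under the UK GDPR, so this reduces risk rather than removing the obligations discussed above.

```python
import hashlib
import hmac

# Hypothetical secret held inside the firm and never sent to the AI provider.
SECRET_KEY = b"replace-with-a-firm-held-secret"

# Hypothetical column names; adjust to the real spreadsheet.
DIRECT_IDENTIFIERS = {"name", "email"}  # removed entirely
PSEUDONYMISE = {"claimant_id"}          # replaced with a keyed hash


def pseudonym(value: str) -> str:
    """Return a stable keyed hash so rows for one person still group together."""
    digest = hmac.new(SECRET_KEY, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:12]


def prepare_rows(rows):
    """Drop direct identifiers and pseudonymise linking fields in each row."""
    cleaned = []
    for row in rows:
        out = {}
        for column, value in row.items():
            if column in DIRECT_IDENTIFIERS:
                continue  # never leaves the firm
            if column in PSEUDONYMISE:
                out[column] = pseudonym(str(value))
            else:
                out[column] = value
        cleaned.append(out)
    return cleaned


if __name__ == "__main__":
    sample = [
        {"claimant_id": "C-001", "name": "A. Example", "email": "a@example.com", "settlement": 12000},
        {"claimant_id": "C-002", "name": "B. Example", "email": "b@example.com", "settlement": 8500},
    ]
    for row in prepare_rows(sample):
        print(row)
```

A keyed hash is used rather than a plain hash so that someone without the firm-held key cannot simply re-hash a known identifier to reverse the mapping. Free-text fields can still contain names and would need separate handling.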

Second, review the output with the same critical eye you would apply to work from a trainee. The chart may look professional. That does not mean it is accurate, or that it has captured the right variables, or that it has not silently omitted outliers that would change the picture. You are the analyst. The model is the tool.

Third, document your process. If a visual output forms part of advice or appears in proceedings, keep a record of what data you provided, what prompt you used, and what review you applied. That record protects you professionally and demonstrates the competence the SRA expects.
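One way such a record might be kept is sketched below in Python: a small structured entry capturing a fingerprint of the data provided, the prompt, and the review applied, appended to a log file. The field names, the `build_record` and `append_record` helpers, and the log format are assumptions for illustration, not a regulatory or SRA-prescribed format.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_record(data_bytes, prompt, reviewer, review_notes, tool="(name of AI tool)"):
    """Build one log entry describing a single AI-generated visual."""
    return {
        "created_utc": datetime.now(timezone.utc).isoformat(),
        "tool": tool,
        # A hash identifies exactly which file was provided without storing it here.
        "data_sha256": hashlib.sha256(data_bytes).hexdigest(),
        "prompt": prompt,
        "reviewer": reviewer,
        "review_notes": review_notes,
    }


def append_record(path, record):
    """Append the entry as one JSON line so earlier entries are never rewritten."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")


if __name__ == "__main__":
    record = build_record(
        data_bytes=b"claimant_id,settlement\nabc123,12000\n",
        prompt="Plot settlement value by claimant as a bar chart.",
        reviewer="Fee-earner initials",
        review_notes="Checked totals against source; no rows omitted.",
    )
    print(json.dumps(record, indent=2))
```

Storing a hash of the input rather than the input itself keeps the log free of client data while still letting you show later which version of the file the chart was built from.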

The broader point is that AI visualisation is a productivity capability, not an analysis capability. It can compress the time between data and presentation. It cannot replace the judgement that determines what the data means and how it should be presented. That judgement remains yours, and so does the professional responsibility if it goes wrong.

The concept is promising. Treat the current tools as capable assistants, not trusted experts, and the productivity gains are real. Skip the governance and you create risk for yourself, your firm, and your clients.

If you are developing AI policies for legal practice or knowledge-intensive work, Lextrapolate offers independent advisory support on governance, training, and implementation. Details at lextrapolate.com.


Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

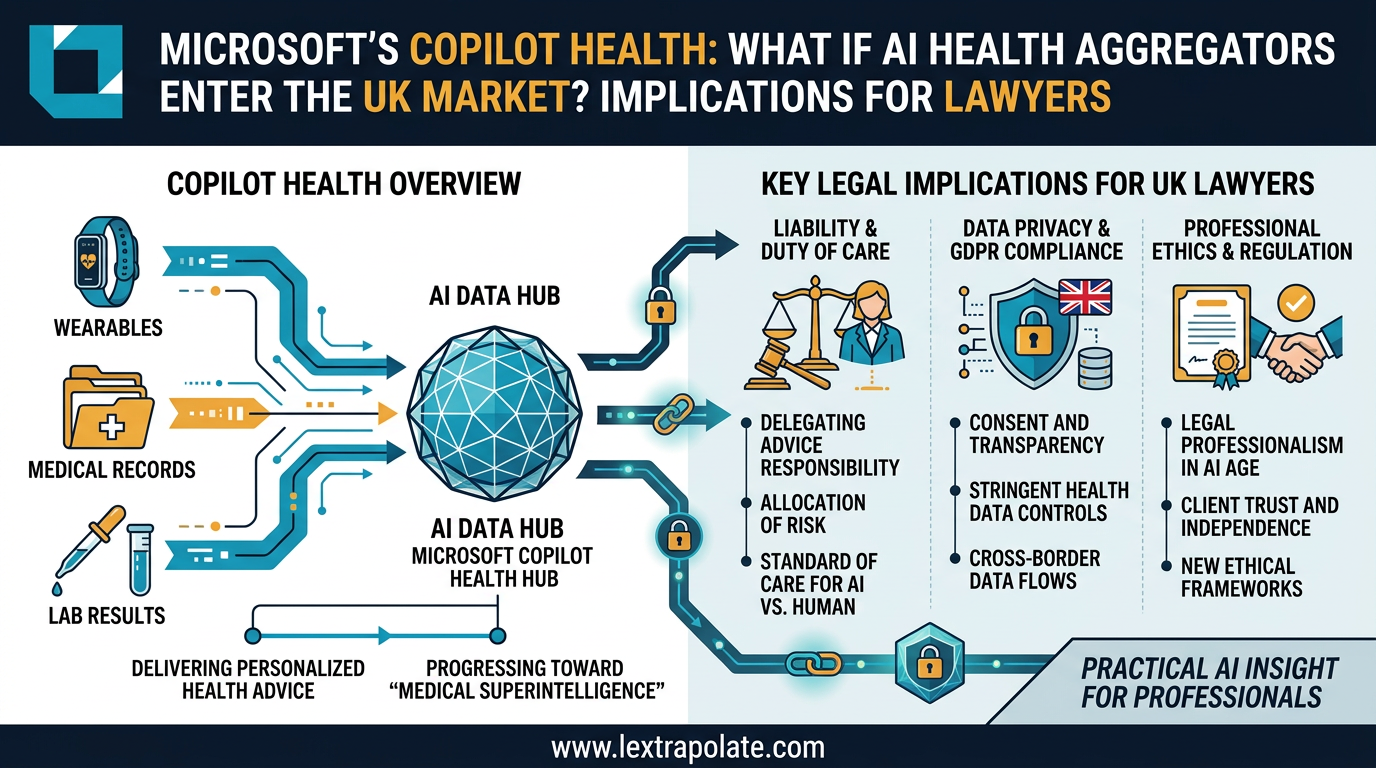

When AI Knows Your Health Better Than Your GP: What Multi-Modal Data Aggregation Means for Lawyers

Microsoft's AI health assistant reveals something bigger than healthcare tech: AI that synthesises complex multi-modal data is coming for legal practice too.

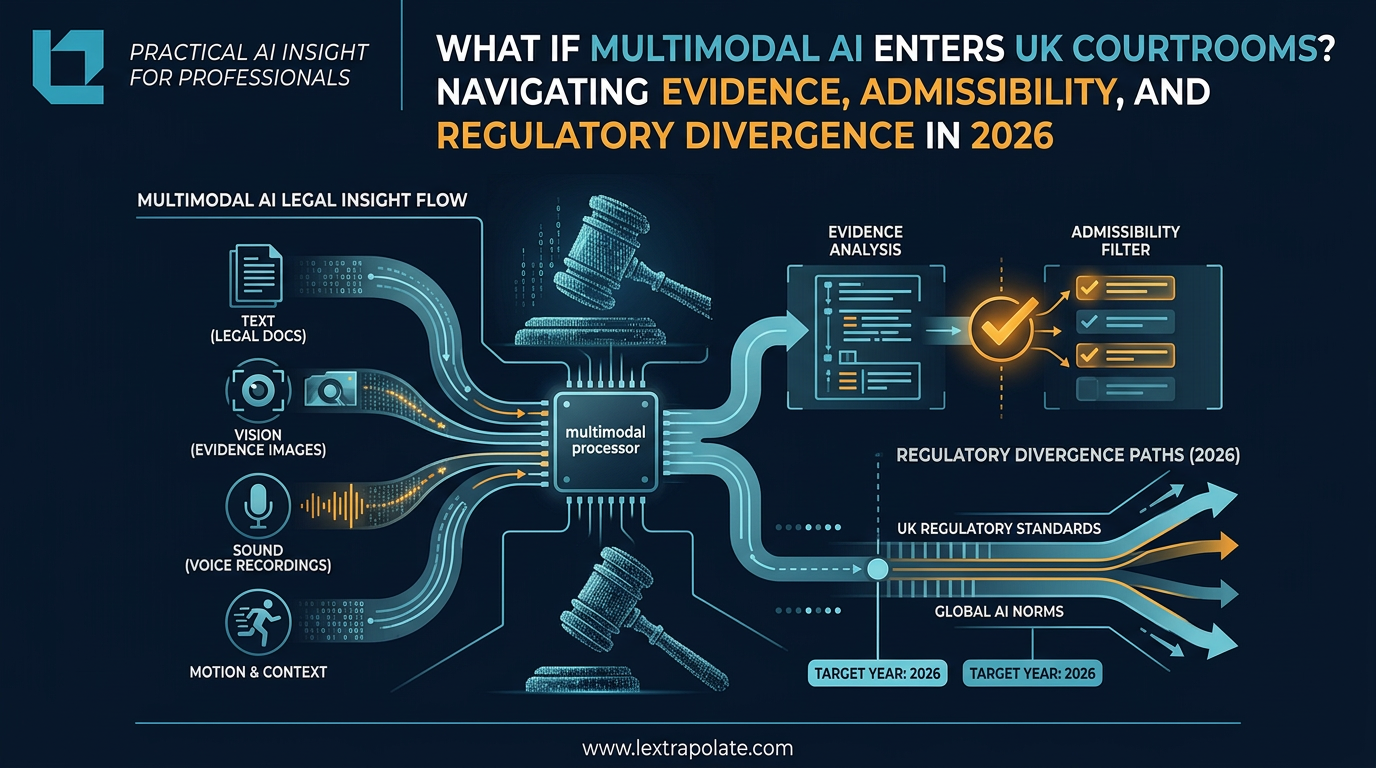

What If Multimodal AI Enters UK Courtrooms? Navigating Evidence, Admissibility, and Regulatory Divergence in 2026

Multimodal AI can see, hear and reason across modalities. UK courts are not ready. Here is what lawyers need to understand before this lands on their desk.

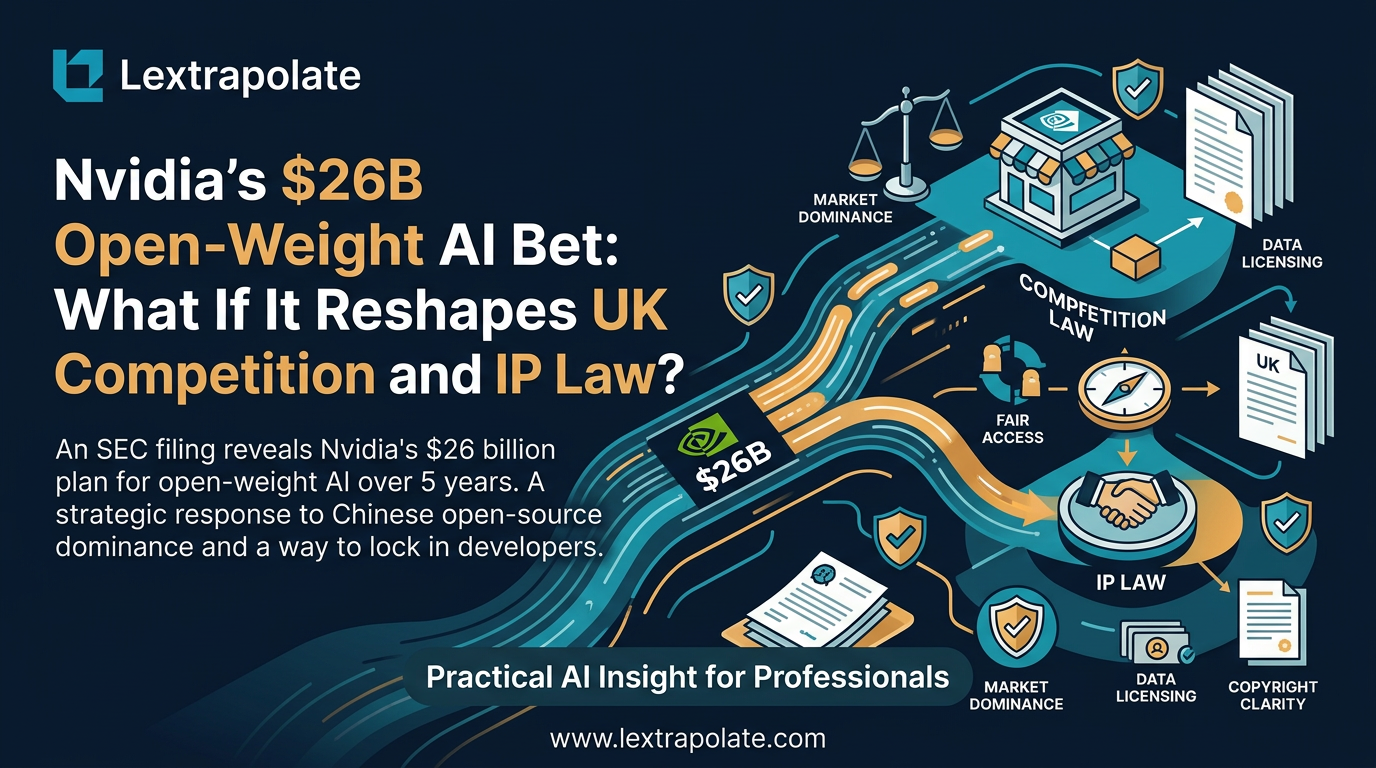

What if Nvidia Became the World's Biggest Open-Weight AI Supplier? What It Means for Law Firms Considering On-Premise AI

Nvidia pouring $26bn into open-weight AI models would reshape how law firms deploy private AI. Here's what that shift could mean in practice.