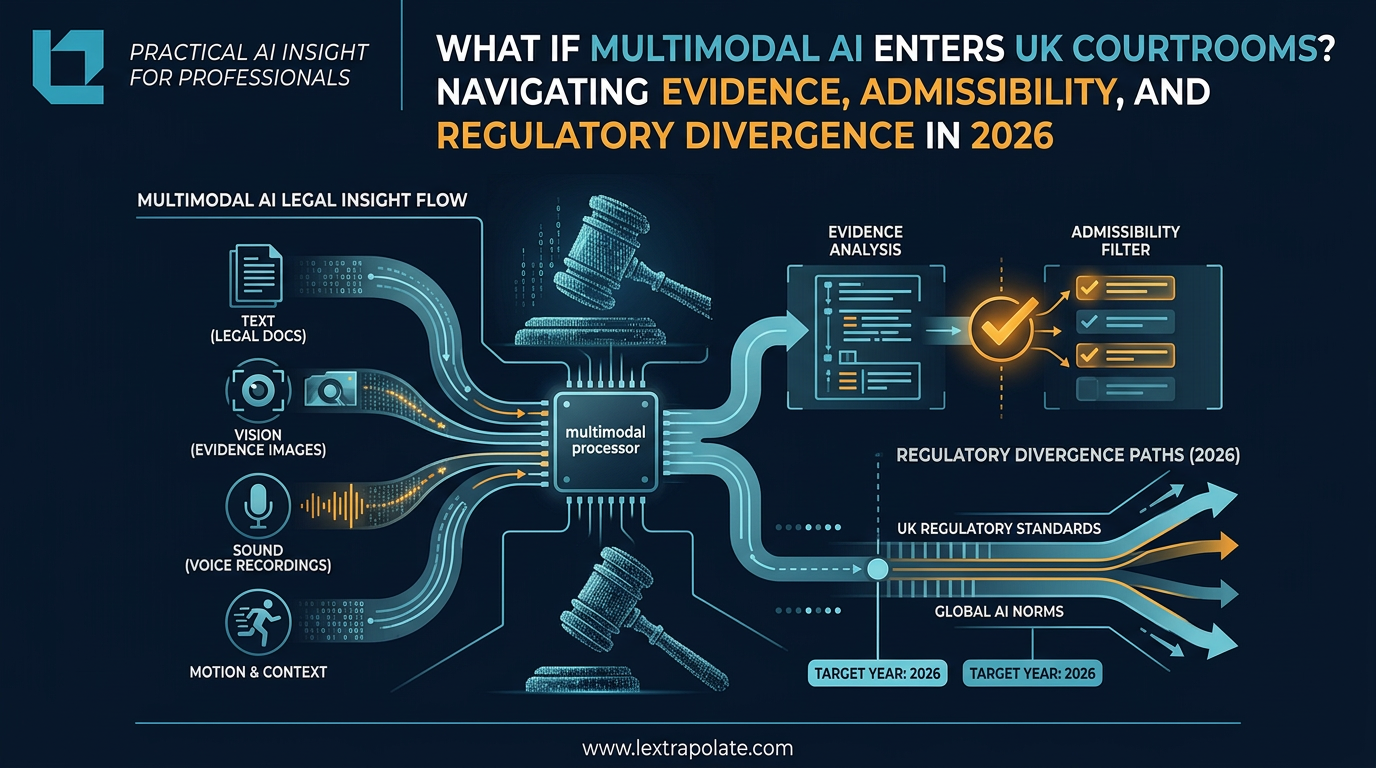

What If Multimodal AI Enters UK Courtrooms? Navigating Evidence, Admissibility, and Regulatory Divergence in 2026

Imagine a road traffic case in 2027. The prosecution's principal exhibit is a multimodal AI analysis combining dashcam footage, acoustic data from the vehicle's sensors, and real-time traffic telemetry. The system synthesised all three streams and concluded the defendant was driving dangerously. No human expert reviewed the raw feeds. The AI did the perceiving, the reasoning, and the concluding. Counsel for the defence asks: on what basis is this admissible?

That question does not yet have a clean answer in English law. It will need one soon.

From Text to Perception: What Multimodal AI Actually Does

Earlier AI systems processed language. They read documents, summarised contracts, answered queries. Multimodal AI goes further. These systems integrate text, vision, audio, motion data, and increasingly tactile information, processing them simultaneously rather than sequentially. The system does not read about a scene. It perceives it, or something functionally close enough to perception to create genuine legal difficulty.

The global market for multimodal AI is projected at $3.43 billion in 2026, reaching $12.06 billion by 2030. That is roughly a 3.5-fold increase in four years, or about 37% compound annual growth. It reflects breadth of deployment, not a niche experiment. These systems are already in healthcare diagnostics, financial surveillance, insurance claims processing, and employment screening. Regulated sectors, all of them. Sectors where UK law has sharp teeth.

What matters for lawyers is the architectural shift underneath the market numbers. The significant development is not simply that AI can now process images alongside text. It is the move towards systems with genuine continual learning built into their core architecture rather than bolted on. Earlier systems learned during training and then froze. Increasingly, systems update as they encounter new data in deployment. That distinction matters enormously when you need to explain, in cross-examination, exactly what the system knew and when.

The Admissibility Problem Under English Law

English evidence law has no settled doctrine for AI-generated evidence. Courts have addressed expert evidence, hearsay exceptions, and documentary records, but a multimodal AI system sits awkwardly across all three categories and fits none of them neatly.

The closest analogy is expert opinion evidence under the Civil Evidence Act 1972 and the Criminal Justice Act 2003. Expert evidence is admissible where the witness has expertise the court lacks. But an AI system is not a witness. It cannot be cross-examined. It cannot explain its reasoning in terms a court can interrogate. When the AI's conclusion rests on processing thousands of simultaneous data streams through a neural architecture that its own developers cannot fully articulate, the reliability threshold under Kennedy v Cordia (Services) LLP [2016] UKSC 6 becomes very difficult to satisfy.

There is also a data protection dimension that practitioners are underestimating. Where a multimodal AI system processes location data or audio recordings of identifiable individuals, it is processing personal data under UK GDPR. Where it processes biometric data in order to identify them, it is processing special category data under Article 9. Article 22 restricts solely automated decision-making that produces legal or similarly significant effects. A prosecution built substantially on an AI's perceptual analysis of a defendant's behaviour is, on any reasonable reading, a significant effect. The Information Commissioner's Office has not yet addressed this directly in the context of criminal evidence. That silence should not be read as permission.

The Human Rights Act 1998 adds a further layer. Article 6 of the European Convention on Human Rights, to which the Act gives domestic effect, guarantees a fair trial, including the right to challenge the evidence against you. Where the evidence is the output of a system whose reasoning is opaque even to its creators, meaningful examination becomes difficult to guarantee. Strasbourg has not ruled on this configuration. UK courts will eventually have to.

Regulatory Divergence: Why US Compliance Is Not UK Compliance

UK lawyers advising clients who develop or deploy multimodal AI systems need to understand that compliance with US state AI frameworks does not transfer. Colorado, Texas, California, and Illinois all have AI governance requirements that have been introduced, enacted, or are due to take effect in or around 2026. These focus substantially on algorithmic impact assessments and bias auditing, with varying transparency obligations. They do not map onto UK law.

The UK's approach remains sector-led, operating through the ICO, the FCA, the CQC, and the Equality and Human Rights Commission rather than a single AI statute. That fragmentation creates genuine compliance complexity. A multimodal AI system used in insurance underwriting might satisfy FCA principles on explainability while simultaneously failing UK GDPR requirements on automated decision-making, depending on how the system is configured. These regulators do not co-ordinate automatically.

The specific risk for 2026 is that organisations importing AI systems designed and validated under US regulatory frameworks assume UK compliance follows. It does not. UK sector-specific risk thresholds, particularly in healthcare and financial services, can be considerably more demanding on transparency and human oversight than comparable US requirements. A system lawful under Colorado's AI Act may face substantive scrutiny from the ICO the moment it processes personal data about individuals in the UK.

The Continual Learning Complication

Baked-in continual learning makes this harder, not easier. If a multimodal AI system in deployment updates its parameters as it processes new cases, the version of the system that generated a disputed output in January may not be functionally identical to the system running in March. In litigation, provenance matters. So does consistency.

The obligation under Part 35 of the Civil Procedure Rules to disclose the basis of expert evidence, and the broader obligation of candour in proceedings, will require lawyers to grapple with questions about model versioning, audit logs, and whether the system can reproduce its reasoning on demand. Most organisations deploying these systems cannot currently answer those questions. That is a live litigation risk, not a theoretical one.
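For readers who want to see what "model versioning" could mean in practice, here is a minimal Python sketch. It is an illustration under stated assumptions, not a description of how any real system works: the names `fingerprint`, `ProvenanceRecord`, `record_output` and `produced_by` are hypothetical, and the toy parameter values stand in for the millions a real model would hold. The idea is simply to bind each output to a digest of the exact parameters and inputs that produced it, so that a later question ("is the system running today the one that generated this exhibit?") has a checkable answer.

```python
import hashlib
import json
from dataclasses import dataclass


def fingerprint(parameters: dict) -> str:
    """SHA-256 digest of the parameters, serialised in a canonical key order."""
    canonical = json.dumps(parameters, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


@dataclass(frozen=True)
class ProvenanceRecord:
    output_id: str
    generated_at: str        # ISO 8601 timestamp supplied by the caller
    model_fingerprint: str   # identifies the exact parameters in use
    input_digests: tuple     # (stream name, sha256 hex) pairs, sorted by name
    conclusion: str


def record_output(output_id, generated_at, parameters, inputs, conclusion):
    """Bind one output to the model state and raw inputs that produced it."""
    digests = tuple(
        (name, hashlib.sha256(data).hexdigest())
        for name, data in sorted(inputs.items())
    )
    return ProvenanceRecord(
        output_id, generated_at, fingerprint(parameters), digests, conclusion
    )


def produced_by(record, parameters) -> bool:
    """True if `parameters` match the fingerprint recorded for this output."""
    return record.model_fingerprint == fingerprint(parameters)


# Hypothetical toy parameters, purely for illustration.
january_params = {"layer_1": [0.10, 0.20], "layer_2": [0.30]}
march_params = {"layer_1": [0.10, 0.25], "layer_2": [0.30]}  # updated in deployment

record = record_output(
    output_id="exhibit-7",
    generated_at="2027-01-14T09:30:00+00:00",
    parameters=january_params,
    inputs={"dashcam": b"<video bytes>", "audio": b"<audio bytes>"},
    conclusion="dangerous driving indicated",
)

print(produced_by(record, january_params))  # checks the archived January model
print(produced_by(record, march_params))    # checks the model running in March
```

The first check succeeds and the second fails, which is the point: once the parameters have moved, the March system cannot be assumed to reproduce the January output, and only an archived copy matching the recorded fingerprint can.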

Monday Morning

If you advise clients in healthcare, financial services, insurance, or employment, or if you conduct litigation in which AI-generated analysis might appear as evidence, there are practical steps to take now.

First, ask your clients what AI systems they are using and whether any of them produce outputs that could be used in regulatory or legal proceedings. Most clients do not know the answer without being pushed.

Second, examine the data governance documentation for any multimodal system already in use. Is there a model card? An audit log of parameter updates? A clear account of what data the system processed and when? If not, that is a gap to close before a disclosure obligation crystallises.
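To make "an audit log of parameter updates" concrete, the following Python sketch shows one possible shape for such a log. It is a simplified illustration, not a recommended product or a standard: `UpdateLog`, its methods, the version labels and the dates are all hypothetical. Each entry stores the hash of the entry before it, so a later alteration to any earlier entry becomes detectable, and the log can answer the question a court is likely to ask: which version was live on a given date? The sketch assumes entries are appended in chronological order.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(body: dict) -> str:
    """SHA-256 digest of an entry body, serialised in a canonical key order."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class UpdateLog:
    """Append-only, hash-chained record of model parameter updates."""

    def __init__(self):
        self.entries = []

    def append(self, applied_at: datetime, model_version: str, reason: str) -> dict:
        prev = self.entries[-1]["entry_hash"] if self.entries else GENESIS
        body = {
            "applied_at": applied_at.isoformat(),
            "model_version": model_version,
            "reason": reason,
            "prev_hash": prev,
        }
        entry = {**body, "entry_hash": _entry_hash(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and link; False if any entry has been altered."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "entry_hash"}
            if entry["prev_hash"] != prev or entry["entry_hash"] != _entry_hash(body):
                return False
            prev = entry["entry_hash"]
        return True

    def version_at(self, moment: datetime):
        """Model version live at `moment`, or None if before the first entry."""
        live = None
        for entry in self.entries:
            if datetime.fromisoformat(entry["applied_at"]) <= moment:
                live = entry["model_version"]
        return live


log = UpdateLog()
log.append(datetime(2027, 1, 5, tzinfo=timezone.utc), "v1.0", "initial deployment")
log.append(datetime(2027, 2, 20, tzinfo=timezone.utc), "v1.1", "continual-learning update")

print(log.version_at(datetime(2027, 1, 14, tzinfo=timezone.utc)))
print(log.verify())
```

A log like this does not make the system explainable. It only establishes which version produced which output and whether the record has been altered since, which is the minimum a disclosure exercise is likely to demand.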

Third, if you are instructed on a case where AI-generated perceptual analysis forms part of the evidence, treat it as you would any contested expert opinion. Commission independent technical review. Identify the assumptions. Find the points where a court can test reliability. Do not accept the output as a neutral fact just because it comes wrapped in apparent precision.

The law on this is unresolved. That is an opportunity for the lawyers who prepare now, and a serious risk for those who assume resolution will come before the cases do.

I work with legal and professional services teams on exactly these questions through Lextrapolate. If your firm or your clients are starting to encounter multimodal AI in practice and want a structured framework for managing it, get in touch.

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

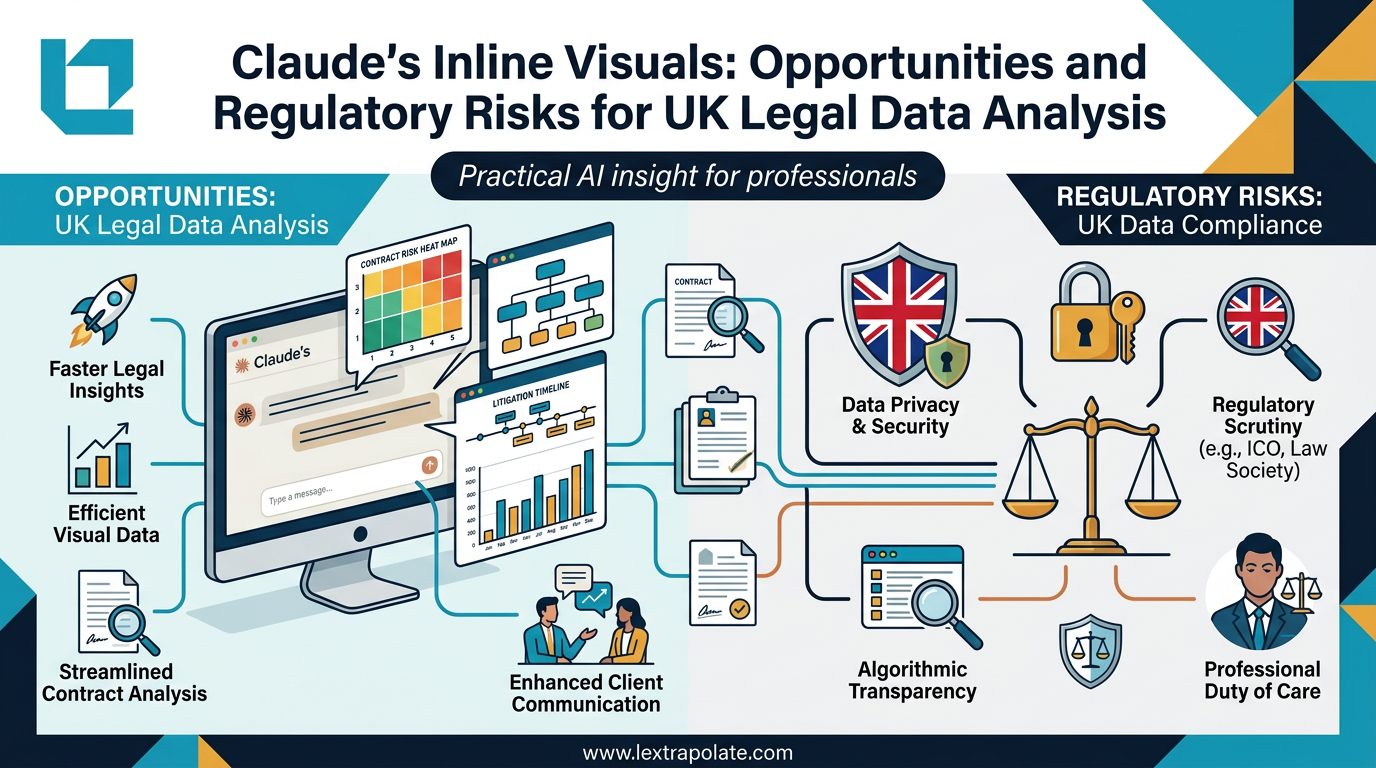

AI-Generated Visuals in Legal Work: Useful Shortcut or Regulatory Trap?

AI can now turn data into interactive charts mid-conversation. For lawyers, that's useful. It also raises questions about transparency, data protection, and professional responsibility.

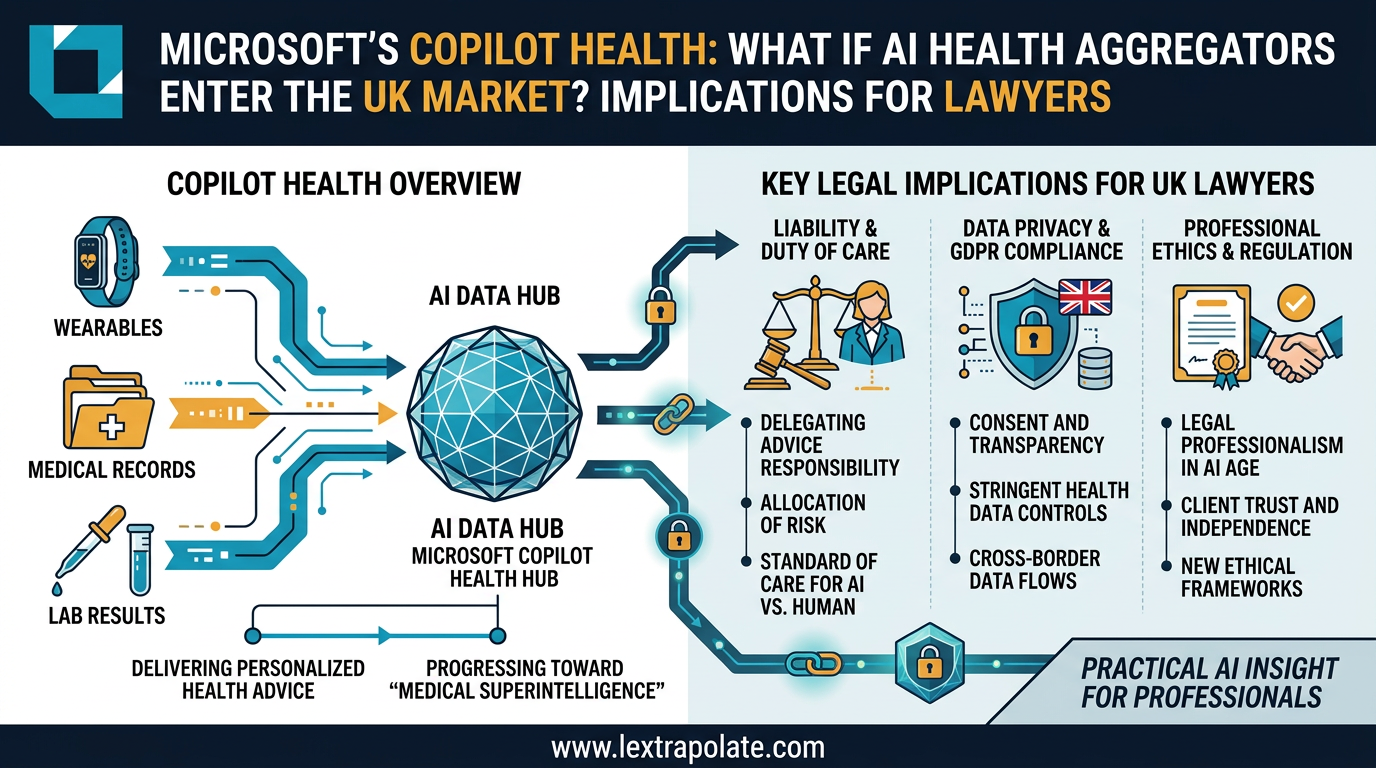

When AI Knows Your Health Better Than Your GP: What Multi-Modal Data Aggregation Means for Lawyers

Microsoft's AI health assistant reveals something bigger than healthcare tech: AI that synthesises complex multi-modal data is coming for legal practice too.

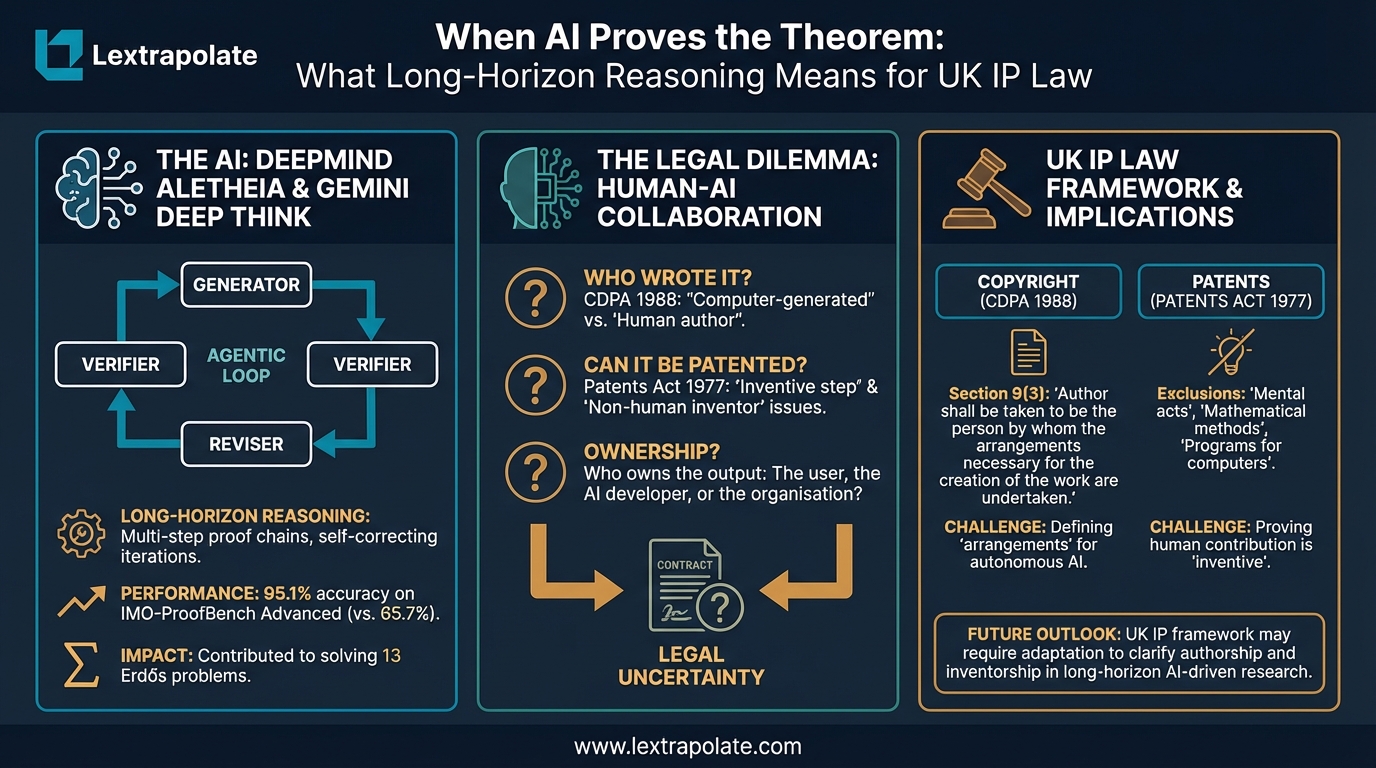

When AI Proves the Theorem: What Long-Horizon Reasoning Means for UK IP Law

AI systems can now generate and verify complex mathematical proofs autonomously. UK copyright and patent law is not ready for what that means.