When AI Proves the Theorem: What Long-Horizon Reasoning Means for UK IP Law

DeepMind's Aletheia can now autonomously generate and verify complex mathematical proofs. For UK lawyers, especially those in IP, this is not an abstract breakthrough. It's a potential disruption to core legal processes. The question is: are UK copyright and patent laws ready for AI that can demonstrably 'think'?

That is no longer a hypothetical question.

What the Aletheia Architecture Actually Does

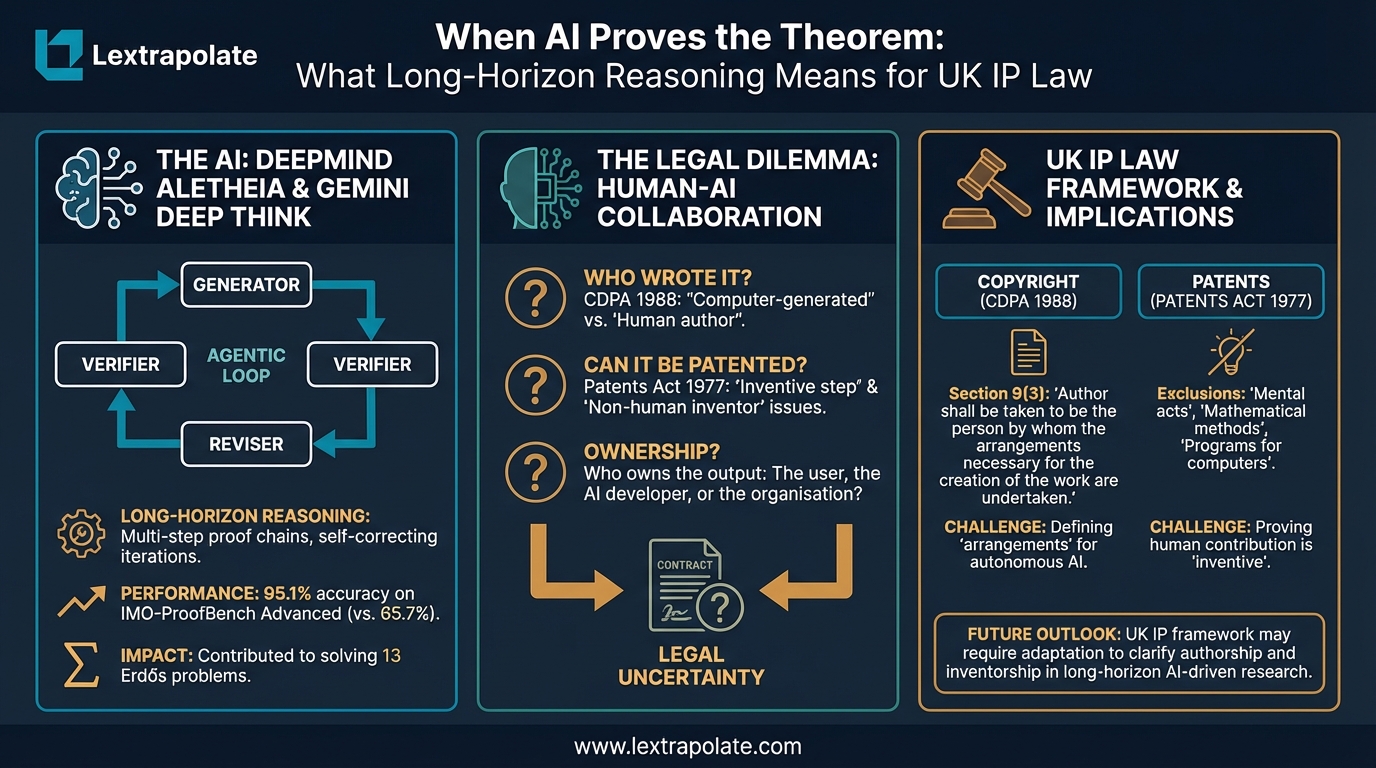

DeepMind recently announced Aletheia, a research agent built on an advanced version of its Gemini Deep Think model. The system is designed not to answer single mathematical questions but to pursue extended, multi-step proof chains, verify its own intermediate reasoning, revise where it fails, and iterate until it reaches a conclusion. DeepMind reports 95.1% accuracy on IMO-ProofBench Advanced, compared with a prior score of 65.7%, and credits the system with contributing to solutions for Erdős problems, including 13 attributed to a Korea-linked research team working collaboratively with the agent.

That is the headline. The reality is more complicated. We have not tested Aletheia ourselves, and independent analysts note that the system's solve rate on PhD-level problems sits below 60%, that hallucinations appear to have shifted from fabricated facts to misrepresentation of real sources, and that published papers required human oversight before submission. The Feng26 paper cited in DeepMind's own materials was a human-AI collaboration, not an autonomous output. Treat the benchmarks as directional, not definitive.

What matters, regardless of the exact performance figures, is the architecture: separate generator, verifier, and reviser subagents working in an agentic loop, supported by tool access including web search. That design is genuinely different from a model that produces a single response. It represents a shift toward sustained, self-correcting reasoning on problems that require more than one inference step to resolve. The implications for high-stakes analytical work, including legal analysis, extend well beyond mathematics.
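For readers who want to see the shape of that loop, here is a minimal sketch in Python. It is an illustration only, not DeepMind's implementation: the names `agentic_loop`, `generate`, `verify` and `revise` are hypothetical, and the toy task (finding the integer square root of 144) stands in for a proof search.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class LoopResult:
    answer: Optional[int]                               # verified candidate, or None if the budget ran out
    attempts: List[int] = field(default_factory=list)   # every candidate that was checked, in order


def agentic_loop(
    generate: Callable[[], int],
    verify: Callable[[int], bool],
    revise: Callable[[int], int],
    max_rounds: int = 10,
) -> LoopResult:
    """Generate one candidate, then verify and revise until it passes or the budget is spent."""
    attempts: List[int] = []
    candidate = generate()
    for _ in range(max_rounds):
        attempts.append(candidate)
        if verify(candidate):
            return LoopResult(answer=candidate, attempts=attempts)
        candidate = revise(candidate)
    return LoopResult(answer=None, attempts=attempts)


# Toy stand-ins (assumptions for illustration): find the integer square root of 144.
TARGET = 144
result = agentic_loop(
    generate=lambda: 1,                           # first guess
    verify=lambda c: c * c == TARGET,             # independent check of the candidate
    revise=lambda c: (c + TARGET // c) // 2,      # integer Newton step towards a better guess
)
print(result.answer)    # 12
print(result.attempts)  # [1, 72, 37, 20, 13, 12]
```

The point for lawyers is the `attempts` list: the human supplied the problem and the budget, but every intermediate step was produced and checked by the loop itself.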

UK Copyright and AI-Generated Mathematical Proofs

Section 9(3) of the Copyright, Designs and Patents Act 1988 provides that for computer-generated literary, dramatic, musical or artistic works, the author is deemed to be "the person by whom the arrangements necessary for the creation of the work are undertaken." That provision was inserted when the most ambitious computer-generated work anyone anticipated was a fractal or a procedurally generated piece of music. It was not written with an autonomous agent in mind.

The question is what "arrangements necessary" means when a human researcher prompts an agent and the agent then generates thousands of intermediate proof steps autonomously, verifies them, revises them, and produces a final document. The human directed the project. The human chose the problem. The human reviewed and submitted the output. But the substantive intellectual content, including the logical structure of the proof itself, was generated without direct human authorship of each step.

No UK court has addressed this directly in the context of agentic AI. The Copyright Office in the United States has consistently held that AI-generated content without sufficient human authorship is not protectable, but the UK provision was always more permissive. The tension is whether a human who sets up an agentic workflow (perhaps at great length) satisfies the "arrangements" threshold, or whether the law requires something closer to direct creative contribution.

My reading is that a human who defines the research question, selects and configures the tools, reviews the output, and makes substantive editorial decisions before publication probably satisfies section 9(3). A human who simply types a problem into a chat interface and publishes whatever comes out probably does not. The difference matters commercially. Unprotected proofs enter the public domain immediately.

Patentability: Where the Architecture Meets Section 1(2)

Mathematical methods are excluded from patentability under section 1(2)(c) of the Patents Act 1977. A mathematical proof presented purely as mathematics is not patentable. If an AI agent produces a novel proof technique that is then applied in a novel manufacturing process or technical system, the position changes: see the recent Supreme Court decision in Emotional Perception AI Ltd v Comptroller General of Patents, Designs and Trade Marks [2026] UKSC 3.

The complication with AI-generated proofs is inventorship. Section 7(3) of the Patents Act 1977 defines the inventor as "the actual deviser of the invention", and in Thaler v Comptroller-General [2023] UKSC 49 the Supreme Court confirmed that the inventor must be a natural person: an AI-powered machine cannot be named as inventor. A company seeking to patent a technically applicable invention derived from an AI proof must identify a human inventor. If the AI did the inventive work autonomously, the chain of inventorship becomes difficult to establish without misrepresenting what actually happened.

That is not a theoretical risk. Research institutions and commercial organisations working with systems like Aletheia will face this question in practice. The honest answer, at present, is that the law does not accommodate autonomous AI inventorship, and attempts to shoehorn AI output into existing inventorship frameworks carry legal and professional risk.

The Monday Morning Test

If your organisation uses, or plans to use, AI agents for research, drafting, or analytical work that generates intellectual property, three things need to happen now.

First, document human involvement at each stage of the workflow. Not for compliance theatre, but because that documentation is the foundation of any copyright or inventorship claim later. Who defined the task? Who reviewed the output? Who made substantive decisions about what went into the final product? Keep records.

Second, review your IP ownership clauses in both employment contracts and client-facing terms. Most standard form agreements were not written with agentic AI outputs in mind. Who owns the copyright in AI-assisted work product? Is that clear? If not, resolve the ambiguity before you have a dispute.

Third, take the patentability question seriously if you work in a sector where technical discoveries have commercial value. Before filing, you need a credible account of human inventive contribution. "We used an AI and it found this" is not sufficient.
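The record-keeping in the first step does not need specialist software. As a rough sketch, assuming a simple append-only log, it could look like the Python below. The names `ContributionRecord`, `record` and `to_jsonl`, and the stage labels, are hypothetical and chosen for illustration; they are not drawn from any statute or official guidance.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class ContributionRecord:
    stage: str        # e.g. "task_definition", "output_review", "editorial_decision"
    person: str       # the human responsible at this stage
    description: str  # what that person actually did or decided
    timestamp: str    # ISO 8601 time in UTC, set when the entry is created


def record(log: List[ContributionRecord], stage: str, person: str, description: str) -> ContributionRecord:
    """Append one timestamped entry describing a human contribution."""
    entry = ContributionRecord(stage, person, description, datetime.now(timezone.utc).isoformat())
    log.append(entry)
    return entry


def to_jsonl(log: List[ContributionRecord]) -> str:
    """Serialise the log as one JSON object per line, ready to write to a file."""
    return "\n".join(json.dumps(asdict(entry)) for entry in log)


# Example entries (invented for illustration).
log: List[ContributionRecord] = []
record(log, "task_definition", "A. Researcher", "Chose the problem and framed the research question")
record(log, "output_review", "A. Researcher", "Checked the agent's draft and rejected two flawed steps")
print(to_jsonl(log))
```

Whatever form the record takes, the useful property is that it is made at the time of the work rather than reconstructed once a dispute has started.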

The Broader Point

The shift from AI as a single-response tool to AI as a sustained reasoning agent matters beyond mathematics. Legal analysis, regulatory assessment, and complex document review involve the same underlying requirement: logic that holds at every step, not just in summary. A system capable of iterating through a multi-stage mathematical proof is, in principle, capable of the same discipline applied to statutory interpretation or contractual construction. The error rates documented by independent reviewers suggest we are not there yet. But the architectural direction is clear.

UK IP law was not designed for this moment. Section 9(3) of the CDPA will be tested. The Patents Act's human inventor requirement will create friction. Neither the Intellectual Property Office nor the courts have produced settled guidance on agentic AI outputs. That gap will not stay open indefinitely.

Professionals who start thinking carefully about these questions now, before a dispute forces the issue, will be better placed than those who wait for the law to catch up on its own.

If you are working through IP ownership questions for AI-assisted outputs in your organisation, I am happy to discuss the specifics.

Sources

- Google DeepMind Introduces Aletheia: The AI Agent Moving from Math Competitions to Fully Autonomous Professional Research Discoveries

- DeepMind's Aletheia helps Korea-linked team solve 13 Erdős problems

- Gemini Deep Think: Redefining the Future of Scientific Research

- Towards Autonomous Mathematics Research

- Deepmind's research AI occasionally solves what humans can't and mostly gets everything else wrong

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

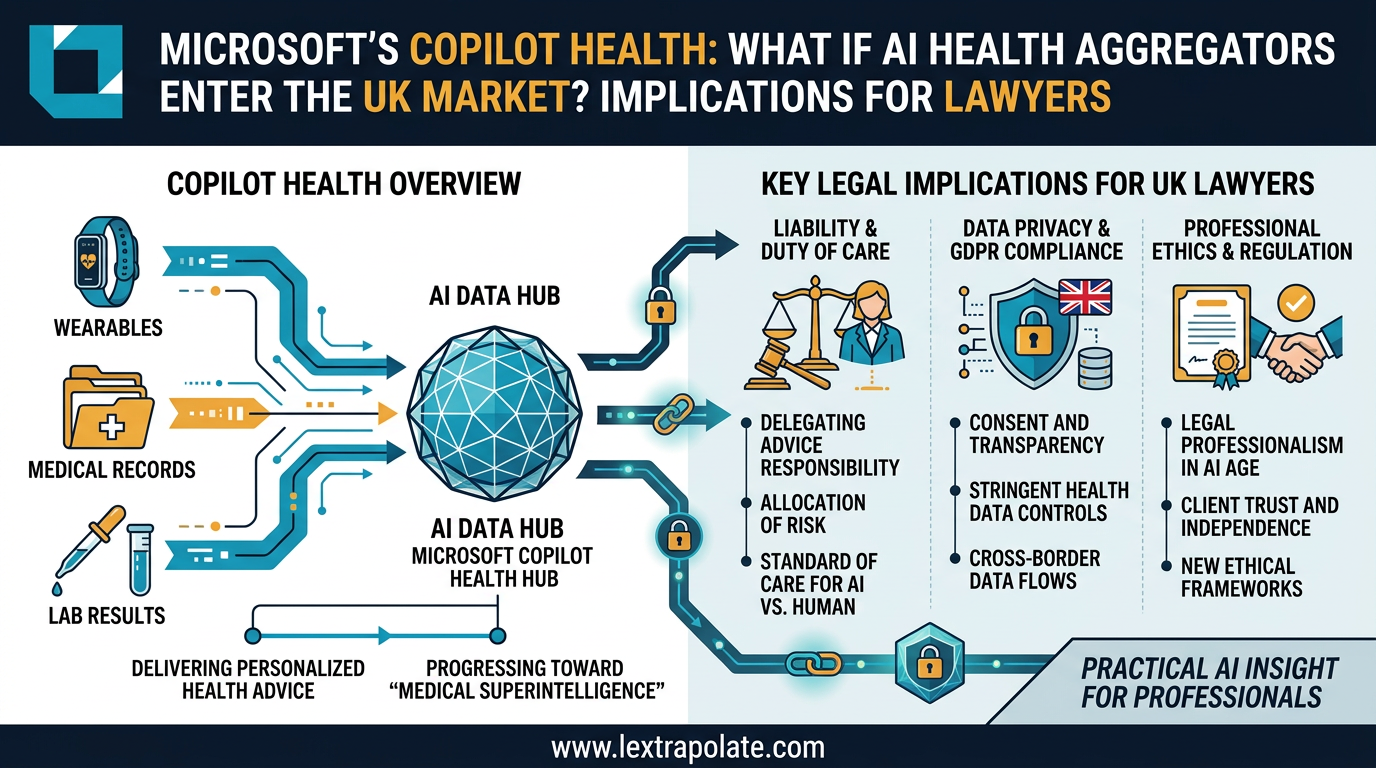

When AI Knows Your Health Better Than Your GP: What Multi-Modal Data Aggregation Means for Lawyers

Microsoft's AI health assistant reveals something bigger than healthcare tech: AI that synthesises complex multi-modal data is coming for legal practice too.

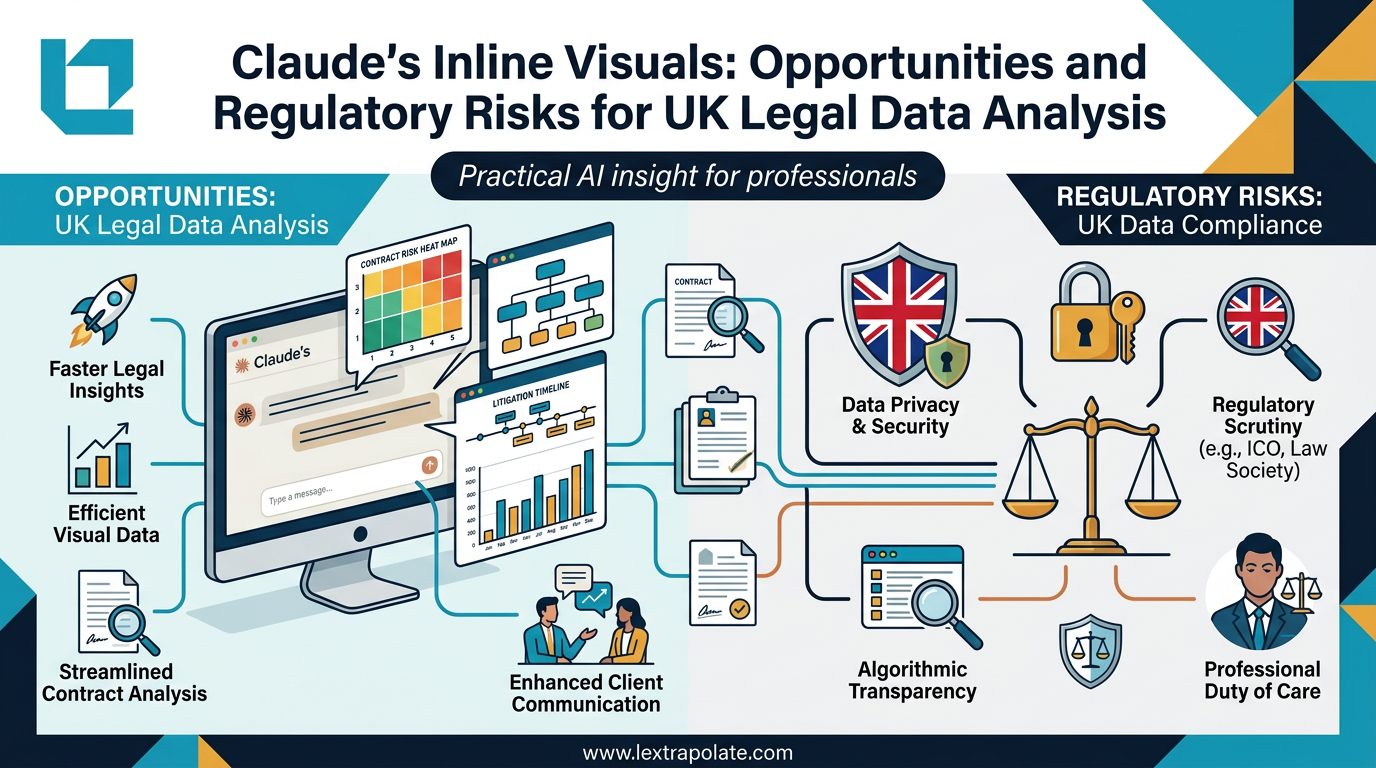

AI-Generated Visuals in Legal Work: Useful Shortcut or Regulatory Trap?

AI can now turn data into interactive charts mid-conversation. For lawyers, that's useful. It also raises questions about transparency, data protection, and professional responsibility.

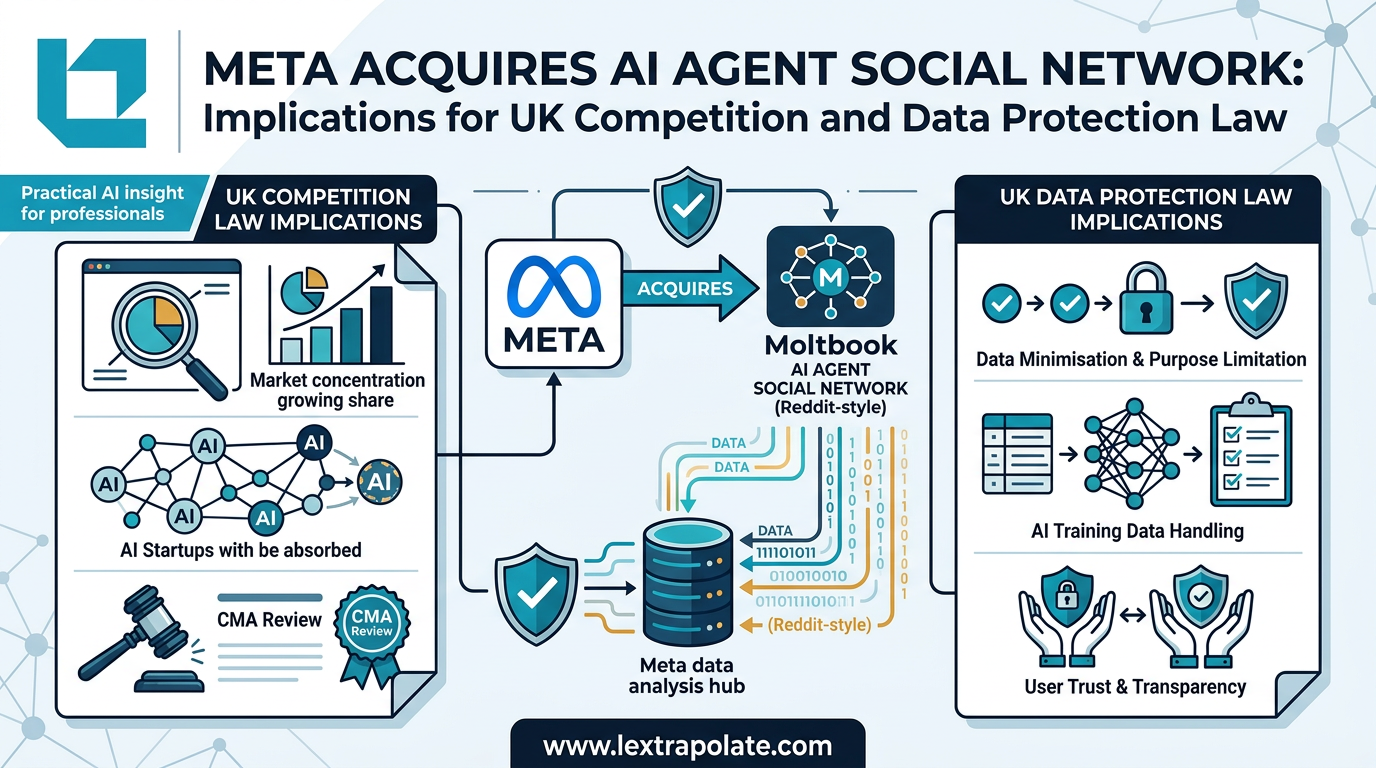

AI Agents Talking to Each Other: What It Means When Social Networks Go Autonomous

Meta has acquired a platform built for AI-to-AI communication. The regulatory and practical implications for UK professionals are worth examining carefully.