What If AI Transforms Hiring Overnight? UK Legal and Ethical Risks as Automated Interviews Scale

UK employers deploying AI interview tools are currently acquiring legal liabilities under the Equality Act 2010 and UK GDPR that remain largely unmapped. While platforms like CodeSignal and Eightfold.ai promise bias reduction, the legal reality for a London law firm or UK HR department is that 'algorithmic neutrality' is not a statutory defence.

This is not speculative. It is happening now, at scale, at companies you have heard of.

Platforms such as CodeSignal and Eightfold.ai have built automated interview products that conduct technical, behavioural, and role-play assessments without human involvement at the screening stage. Their clients reportedly include major technology companies. The vendors claim their tools reduce bias, improve consistency, and create a better candidate experience. Those claims may be accurate, but independent validation is thin.

For candidates, the shift is equally significant. If a meaningful part of the hiring process is now a technical performance task evaluated by a machine, treating it as an interpersonal conversation is the wrong frame entirely.

The UK Legal Exposure Is Not Theoretical

UK employers deploying AI interview tools face genuine regulatory risk under existing law, before any new AI-specific legislation reaches the statute book.

The Equality Act 2010 is the primary concern. An AI system that produces disparate impact across candidates who share a protected characteristic (race, sex, disability or age, for example) is capable of giving rise to indirect discrimination liability. The employer owns that liability. The fact that a vendor's algorithm produced the outcome does not transfer responsibility. The statutory defence is objective justification: showing that the practice is a proportionate means of achieving a legitimate aim. Vendors claiming their systems reduce bias are making an empirical assertion. Without independent audit data, that assertion does not establish the defence.

The UK GDPR, which operates alongside the Data Protection Act 2018, adds a second layer. Article 22 restricts solely automated decisions that produce legal or similarly significant effects on individuals. A hiring decision filtered entirely by an AI system, with no meaningful human review, sits uncomfortably close to that boundary. Where such decisions are permitted, candidates are entitled to safeguards that include the right to obtain human intervention, to express their point of view, and to contest the outcome. Employers must also give candidates meaningful information about the logic involved. Those who cannot explain how the system reached its conclusion, in terms a candidate can actually understand, are unlikely to be compliant.

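The Information Commissioner's Office has published detailed guidance on AI and data protection. It is guidance rather than legislation, but it sets out how the regulator expects the law to be applied, and employers should treat it accordingly. A data protection impact assessment before deploying an AI hiring tool is not merely prudent: for processing that evaluates candidates automatically and feeds into decisions about them, Article 35 of the UK GDPR is likely to make one mandatory. Employers who skip that step on the basis that the vendor has "handled compliance" are taking a risk they may not have priced.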

What the Vendors Say and Why Scepticism Is Warranted

The marketing around AI interview platforms is consistent. Automated assessment is fairer than human screening because humans carry unconscious bias. AI evaluates responses, not demographics. The candidate experience is improved because scheduling is frictionless and feedback is faster.

Some of this is plausible. Structured, consistent questioning does outperform unstructured interviews on predictive validity. That is well-established in occupational psychology. The problem is that automating consistency does not automatically eliminate bias. It can encode it at scale, consistently.

The training data matters. If the historical hiring decisions used to calibrate what "good" looks like reflected bias, the model inherits it. If the behavioural signals being evaluated (fluency, eye contact, speech cadence) correlate with socioeconomic background, regional accent, or neurodivergence, the system is not neutral. It is measuring something other than what it claims to measure.

Eightfold.ai and CodeSignal present themselves as solving for this. I have not tested either platform. Neither have I seen independent peer-reviewed analysis of their bias-reduction claims. Until that evidence exists, UK employers should treat vendor assurances as a starting point for due diligence, not a substitute for it. Conducting your own bias audit before deployment is not excessive caution. It is standard data protection practice and, in the employment context, a sensible step towards a defensible position under the Equality Act.
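One simple starting point for such an audit is to compare the rate at which the tool advances candidates from each group. The Python sketch below is illustrative only: the function names and sample data are hypothetical, and the 0.8 flag threshold borrows the US "four-fifths" rule of thumb, which is not a test under the Equality Act. A low ratio is a prompt for closer investigation, not a finding of discrimination, and a ratio above the threshold does not prove the tool is fair.

```python
from collections import Counter
from collections.abc import Iterable

# Hypothetical threshold borrowed from the US "four-fifths" rule of thumb.
# It is NOT a UK legal test; here it only flags groups for further review.
FLAG_THRESHOLD = 0.8


def selection_rates(outcomes: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Share of candidates in each group that the tool advanced to the next stage."""
    totals: Counter[str] = Counter()
    advanced: Counter[str] = Counter()
    for group, passed in outcomes:
        totals[group] += 1
        if passed:
            advanced[group] += 1
    return {group: advanced[group] / totals[group] for group in totals}


def impact_ratios(rates: dict[str, float]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        # No group was advanced at all, so no group fares worse than another.
        return {group: 1.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flag_groups(ratios: dict[str, float], threshold: float = FLAG_THRESHOLD) -> list[str]:
    """Groups whose ratio falls below the threshold and warrant closer scrutiny."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


if __name__ == "__main__":
    # Illustrative, made-up screening outcomes: (group label, advanced?)
    sample = (
        [("group_a", True)] * 60 + [("group_a", False)] * 40
        + [("group_b", True)] * 40 + [("group_b", False)] * 60
    )
    rates = selection_rates(sample)
    ratios = impact_ratios(rates)
    print(rates)                # {'group_a': 0.6, 'group_b': 0.4}
    print(ratios)               # group_b's ratio is 0.4 / 0.6, about 0.667
    print(flag_groups(ratios))  # ['group_b']
```

Two caveats apply. Ratios drawn from small candidate pools are noisy, so a real audit would also need to consider sample size and statistical significance. And recording candidates' characteristics for monitoring is itself processing of special category data where it covers characteristics such as race and disability, which needs its own lawful basis.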

Accessibility compounds the risk. A candidate with a visual impairment, a speech impairment or a processing disorder may be put at a substantial disadvantage by an AI system that was never tested against that profile, and substantial disadvantage is what triggers the duty to make reasonable adjustments. Reasonable adjustment obligations under the Equality Act do not pause because the interviewer is a piece of software.

The Practical Shift: Interviews as Technical Performance Tasks

If you are a knowledge worker in the job market, the strategic implication is direct.

An AI interview system is not listening for warmth or reading the room. It is processing signal: the words you use, the structure of your answers, how closely your language maps to the competencies the system has been calibrated to reward. Preparing for one of these interactions the way you would prepare for a conversation with a thoughtful partner is a category error.

What works instead is methodical. Find out whether the platform uses a particular competency framework. The STAR (Situation, Task, Action, Result) format still tends to produce evaluable structure. Practise speaking to a camera without the social feedback loop that human interaction provides, because you will not get any. Test your technology setup in advance (audio quality, lighting, background), not for aesthetics but because degraded signal inputs produce degraded outputs.

If you are asked to record asynchronously, with no live interviewer, treat each answer as a discrete deliverable. You are producing a document that will be processed. Structure it accordingly.

For employers on the other side of this, particularly HR teams and talent leads at law firms and professional services businesses, the Monday morning question is simpler: who in your organisation owns the liability question for AI hiring tools you are currently using or piloting? If the answer is "the vendor", that answer is wrong. Map the data flows, conduct the impact assessment, document your human review process, and make sure it is genuinely human rather than a rubber stamp on a machine recommendation.
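One way to evidence that review is genuine is to log, for each candidate, the tool's recommendation alongside the reviewer's decision and reasons, and to track how often the two diverge. The Python sketch below assumes a hypothetical record format; neither the field names nor any target override rate come from a regulator or a vendor. An override rate close to zero, combined with few recorded reasons, is a question worth asking rather than proof of rubber-stamping: it may also mean the tool's recommendations are simply sound.

```python
from dataclasses import dataclass


@dataclass
class ReviewRecord:
    """One candidate's screening outcome (hypothetical record format)."""
    ai_recommendation: bool   # True if the tool recommended advancing the candidate
    human_decision: bool      # True if the human reviewer advanced the candidate
    reviewer_notes: str = ""  # free-text reasons recorded by the reviewer


def review_summary(records: list[ReviewRecord]) -> dict[str, float]:
    """How often reviewers depart from the tool, and how often they record reasons."""
    if not records:
        raise ValueError("no review records supplied")
    total = len(records)
    # Count decisions where the human outcome differs from the tool's recommendation.
    overrides = sum(r.ai_recommendation != r.human_decision for r in records)
    # Count records where the reviewer wrote down a non-blank reason.
    with_notes = sum(bool(r.reviewer_notes.strip()) for r in records)
    return {
        "override_rate": overrides / total,
        "notes_rate": with_notes / total,
    }


if __name__ == "__main__":
    # Illustrative, made-up review log.
    log = [
        ReviewRecord(True, True),
        ReviewRecord(False, False),
        ReviewRecord(False, True, "Relevant experience missed by the screening tool"),
    ]
    print(review_summary(log))
```

Tracked over time, figures like these give the documented human review process something concrete to point to, which a bare statement that "a human signs off" does not.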

The Broader Point

Automated interviewing is not inherently problematic. Applied carefully, with proper auditing and meaningful human oversight, it could produce more consistent and less biased early-stage screening than the status quo. The status quo involves humans who are tired, distracted, and prone to forming views in the first thirty seconds of a conversation for reasons that have nothing to do with competence.

But "could" is doing significant work in that sentence. The question UK employers need to answer before deployment is not whether the technology is interesting. It is whether they can demonstrate, with evidence, that it is fair, lawful, and accessible in their specific context. Vendor marketing material does not answer that question. An independent audit gets you closer.

Candidates, meanwhile, face a practical adaptation challenge that professional development programmes have been slow to address. AI-led screening is becoming a fixture of hiring for professional roles. Treating it as a technical performance task rather than a conversation is not cynical. It is accurate.

If you are a lawyer or consultant and you have encountered AI-led interview processes, whether as a candidate or advising an employer, I would be interested to hear how those interactions have played out in practice.

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

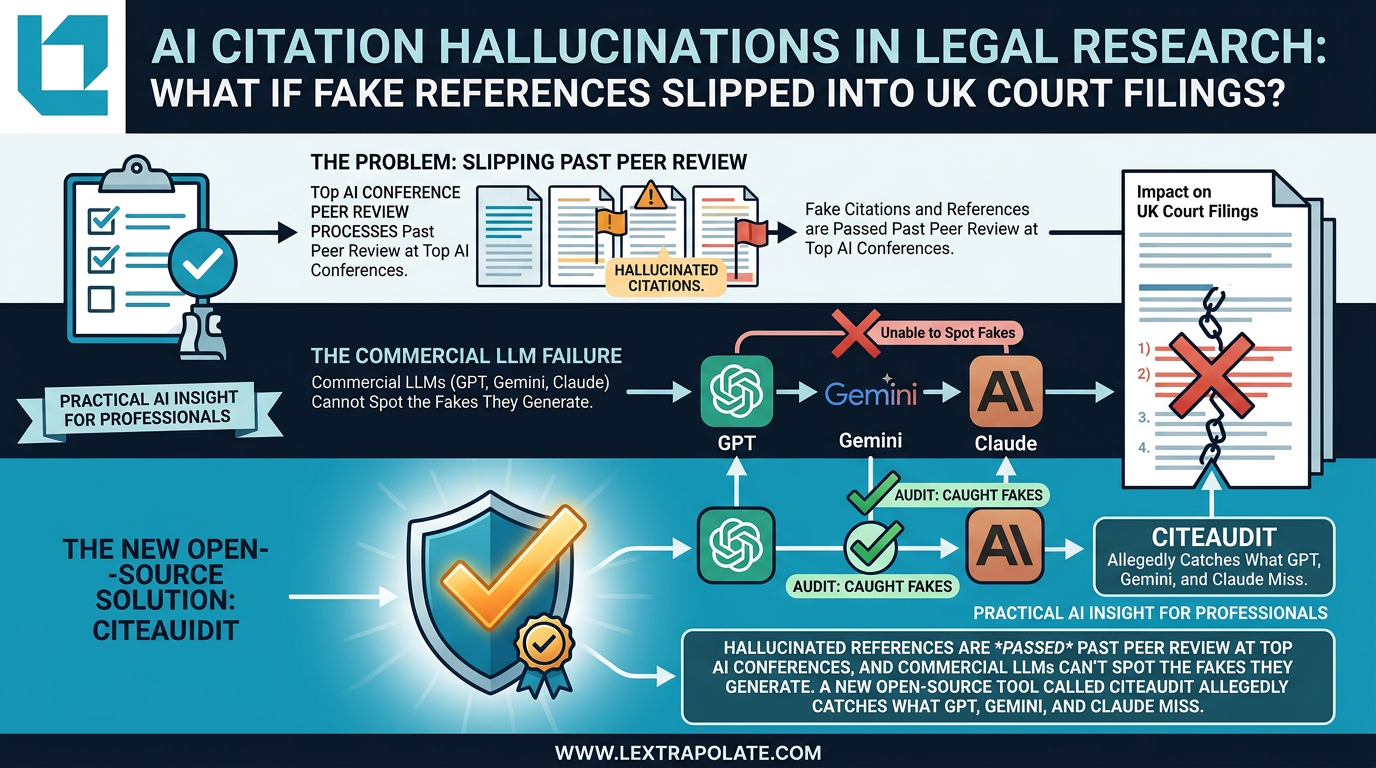

AI Citation Hallucinations in Legal Research: The Verification Problem Nobody Has Solved Yet

Fake citations are slipping past peer review at AI conferences. If that's happening in academia, what's the risk in legal practice?

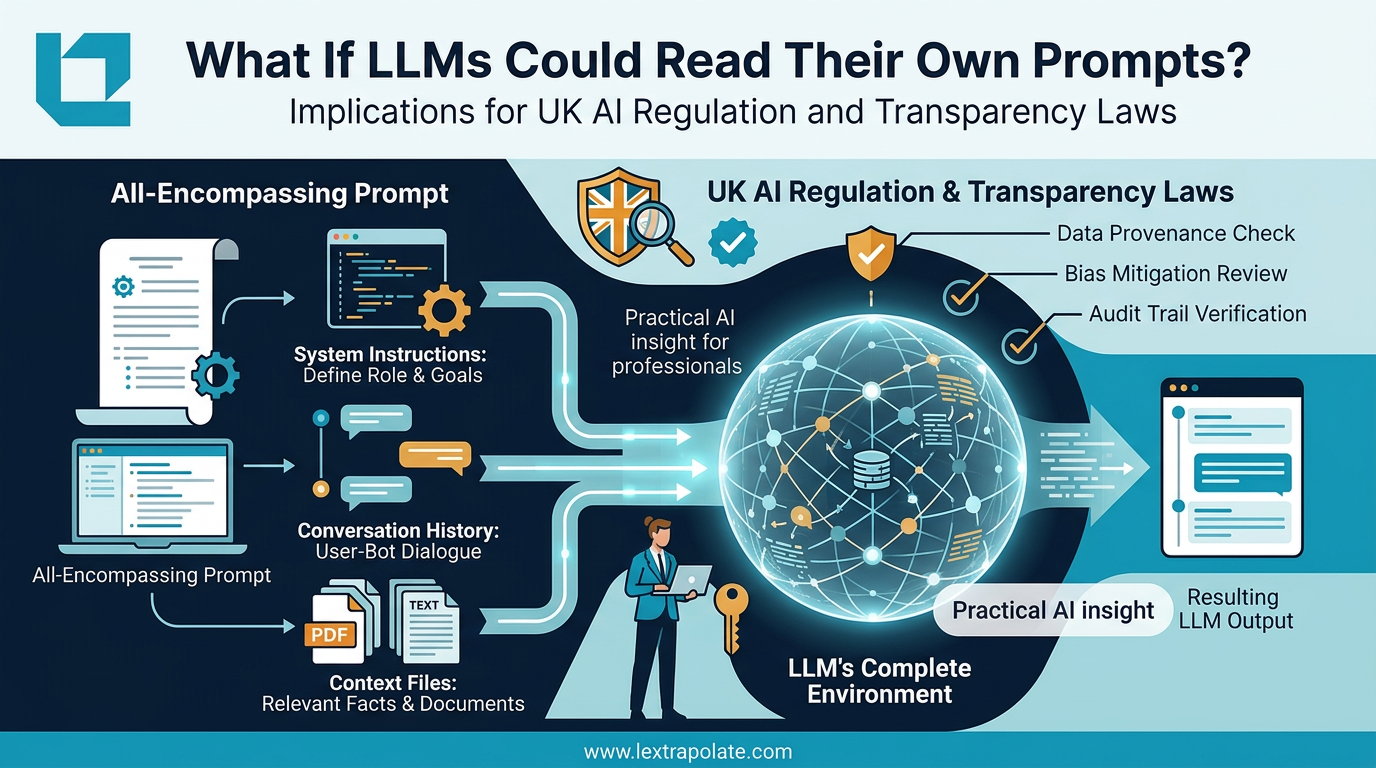

What if the invisible context window is the most important thing you cannot see?

Understanding what the AI actually sees when you prompt it is the difference between controlled output and expensive guesswork.

What If AI Agents Became Team Members? UK Legal Risks in AI-Native Operations

AI-native companies are treating autonomous agents like colleagues. UK law firms watching this shift need to understand what it means before they follow suit.