What If AI Agents Became Team Members? UK Legal Risks in AI-Native Operations

Under the SRA Code of Conduct for Solicitors, a solicitor who supervises others must effectively supervise the work done for clients, and remains accountable for it. But when an autonomous AI agent triages a 1,400-document disclosure set at 3:00 AM without human intervention, what counts as 'supervision' shifts radically, and potentially litigiously.

What supervision means in that scenario is no longer a hypothetical question.

The Shift From Tool to Colleague

A cluster of technology companies have moved beyond using AI to assist humans. They are building workflows where AI agents operate with a degree of autonomy that makes the word "tool" feel inadequate.

Linear, the project management software, integrates with Factory's AI agents, marketed as "Droids", to handle engineering tasks autonomously. Ramp, the financial operations company, has embedded AI across revenue functions. Factory publishes case studies claiming significant acceleration in software development. These are vendor-originated claims and should be read as such. We have not independently tested any of these products, and the underlying evidence is largely self-published promotional material.

But strip away the marketing, and the underlying concept is real and accelerating: autonomous agents being assigned work, given access to systems, and producing outputs that feed into business processes. Call it what you like. The operational reality is that organisations are integrating AI agents into workflows in ways that look, structurally, a lot like onboarding a new colleague.

Law firms watching this trend need to think carefully before they follow it.

What UK Law Actually Says About AI Agents in Your Workflow

Data Protection: The Agent Has to Go Somewhere

Every AI agent that handles work inside an organisation is processing data. If that work touches client matters, that data is likely personal data under UK GDPR. The moment an agent reviews a contract, processes a disclosure bundle, or summarises correspondence, you have a data processing activity that needs to be accounted for.

The questions that follow are practical ones. Who is the data processor? If you are using a third-party AI agent integrated into your matter management system, that third party is almost certainly a processor under Article 28 UK GDPR. You need a Data Processing Agreement. You need to know where data goes, how long it is retained, and whether it is used to train models. Most firms asking these questions of off-the-shelf AI tools are finding the answers uncomfortable.

There is also a risk assessment obligation. Where AI agents are making decisions that affect individuals, or processing special category data, the processing is likely to count as high risk, and a Data Protection Impact Assessment under Article 35 UK GDPR is then not optional. The ICO's guidance on AI and data protection has been clear on this for some time. Firms that have not updated their DPIAs to reflect AI agent deployments are already out of step with their obligations.

Employment Law: The Ghost in the Headcount

No UK statute treats an AI agent as a worker. The Employment Rights Act 1996 and the definitions that flow from it require a human. So there is no direct employment law exposure from running an AI agent on your document review queue.

The indirect exposure is more interesting, and more pressing.

If AI agents are handling work that was previously done by paralegals or junior associates, the impact on those roles is a restructuring question. Redundancy processes, consultation obligations under the Trade Union and Labour Relations (Consolidation) Act 1992 where numbers trigger collective consultation, and the duty to consider suitable alternative employment do not disappear because the reason for reduced headcount is technological. Firms announcing AI-driven efficiency programmes without thinking through the employment law implications are storing up problems.

There is also a supervision question that sits at the intersection of employment law and professional conduct. The SRA Standards and Regulations require work done for clients to be effectively supervised. An AI agent producing a first draft is fine if a qualified lawyer checks it. An AI agent that has been configured to send outputs directly to clients, without human review, is a different matter entirely. The agent does not hold a practising certificate. The firm's solicitors do, and accountability for the output stays with them and with the firm.
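
One way to make that distinction concrete in a workflow is a release gate: nothing an agent drafts reaches a client until a named reviewer has signed it off. The following is a minimal Python sketch under stated assumptions. The names `Draft`, `approve`, and `release_to_client` are hypothetical, invented for illustration, and do not correspond to any vendor's product or to any mechanism the SRA prescribes.

```python
from dataclasses import dataclass
from typing import Optional


class UnreviewedOutputError(Exception):
    """Raised when an agent draft is released without human sign-off."""


@dataclass
class Draft:
    matter_ref: str
    body: str
    produced_by: str                   # "agent", or a fee earner's initials
    approved_by: Optional[str] = None  # name of the reviewing lawyer, if any


def approve(draft: Draft, reviewer: str) -> Draft:
    """Record that a named lawyer has reviewed the draft."""
    if not reviewer.strip():
        raise ValueError("A named reviewer is required")
    draft.approved_by = reviewer.strip()
    return draft


def release_to_client(draft: Draft) -> str:
    """Hypothetical send step: refuses agent drafts with no named reviewer."""
    if draft.produced_by == "agent" and draft.approved_by is None:
        raise UnreviewedOutputError(
            f"Matter {draft.matter_ref}: agent draft has no reviewer"
        )
    return f"Released {draft.matter_ref}"
```

The design choice is that the gate fails closed: an unreviewed agent draft raises an error rather than being sent with a warning, so "usually someone checks" cannot become the default.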

IP Ownership: Who Owns What the Agent Produces?

Factory markets its agents as producing code. Law firms integrating similar tools into document drafting or contract generation workflows face the same question that software companies are grappling with.

Under the Copyright, Designs and Patents Act 1988, computer-generated works are protected by copyright, with the author deemed to be the person who made the necessary arrangements for the work to be created. That is section 9(3). In practice, this means the firm or the individual who configured and deployed the agent is likely the first owner of copyright in the output.

But this is unsettled territory. The question of whether AI-generated work attracts copyright at all is live in UK courts and policy discussions. The Intellectual Property Office ran a consultation on this in 2021 and the government response left the section 9(3) regime in place for now, with a caveat that it would be kept under review. Firms building AI-generated document templates or precedent libraries need to understand that the ownership position is less certain than it appears on the face of the 1988 Act, and that relying on that copyright against a counterparty who challenges it carries risk.

The Monday Morning Question

If you are managing a law firm and you are considering, or have already deployed, AI agents in document review, project management, or client-facing workflows, there are four things worth checking before the week is out.

First, identify every AI tool in use that processes personal data and confirm you have a current data processing agreement in place with the vendor. If you cannot name the vendor's data retention policy off the top of your head, you do not have adequate oversight.

Second, review your DPIAs. If they were drafted before you deployed AI tools, they are out of date.

Third, check your supervision model. Who is reviewing AI outputs before they leave the firm? If the answer is "it depends" or "usually someone", that is not a supervision model.

Fourth, if AI deployment is affecting headcount or role structure, get employment law advice before you announce anything. The obligation to consult does not arise when the redundancies happen. It arises when the employer proposes to dismiss.
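
The four checks above can be sketched as a simple audit over a register of AI tools. This is an illustrative Python sketch, not a compliance tool: the `AITool` fields and the `audit` function are hypothetical names chosen for this example, and the rules simply mirror the four points as stated in this article.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class AITool:
    name: str
    processes_personal_data: bool
    deployed_on: date
    dpa_signed: bool                     # data processing agreement in place
    retention_policy: Optional[str]      # vendor's stated retention, if known
    dpia_last_reviewed: Optional[date]
    named_reviewer: Optional[str]        # who checks outputs before release
    affects_headcount: bool
    employment_advice_taken: bool


def audit(tool: AITool) -> List[str]:
    """Return open issues for one tool, in the order of the four checks."""
    issues: List[str] = []
    if tool.processes_personal_data:
        # Check 1: processor agreement and retention policy
        if not tool.dpa_signed:
            issues.append("No data processing agreement with vendor")
        if not tool.retention_policy:
            issues.append("Vendor retention policy unknown")
        # Check 2: a DPIA reviewed before deployment is treated as stale
        if (tool.dpia_last_reviewed is None
                or tool.dpia_last_reviewed < tool.deployed_on):
            issues.append("DPIA missing or predates deployment")
    # Check 3: supervision needs a named person, not "usually someone"
    if not tool.named_reviewer:
        issues.append("No named reviewer for outputs")
    # Check 4: advice should come before any announcement
    if tool.affects_headcount and not tool.employment_advice_taken:
        issues.append("Headcount affected but no employment law advice taken")
    return issues
```

Running `audit` over every entry in the register gives a per-tool list of gaps; an empty list means none of the four checks flagged anything, not that the deployment is compliant.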

A Concept Worth Taking Seriously, With Care

The idea of AI agents as operational colleagues rather than discrete tools reflects something real about how advanced deployments are working. The distinction matters because it changes how you think about accountability, supervision, and risk. A tool sits on a desk. An agent operates in your systems, touching your clients' data, producing outputs that carry your firm's name.

UK law has not caught up with this framing. That is precisely why firms need to.

The companies cited in the trade press as exemplars of AI-native operations are largely selling software. Their case studies are marketing. The underlying operational model they describe, autonomous agents integrated into core workflows, is where serious firms are heading, and the legal profession is not exempt from that trajectory.

The firms that will manage this well are not the ones moving fastest. They are the ones who understood their existing obligations before they started automating against them.

If your AI deployment strategy does not yet include a legal risk review, that review is overdue.


Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

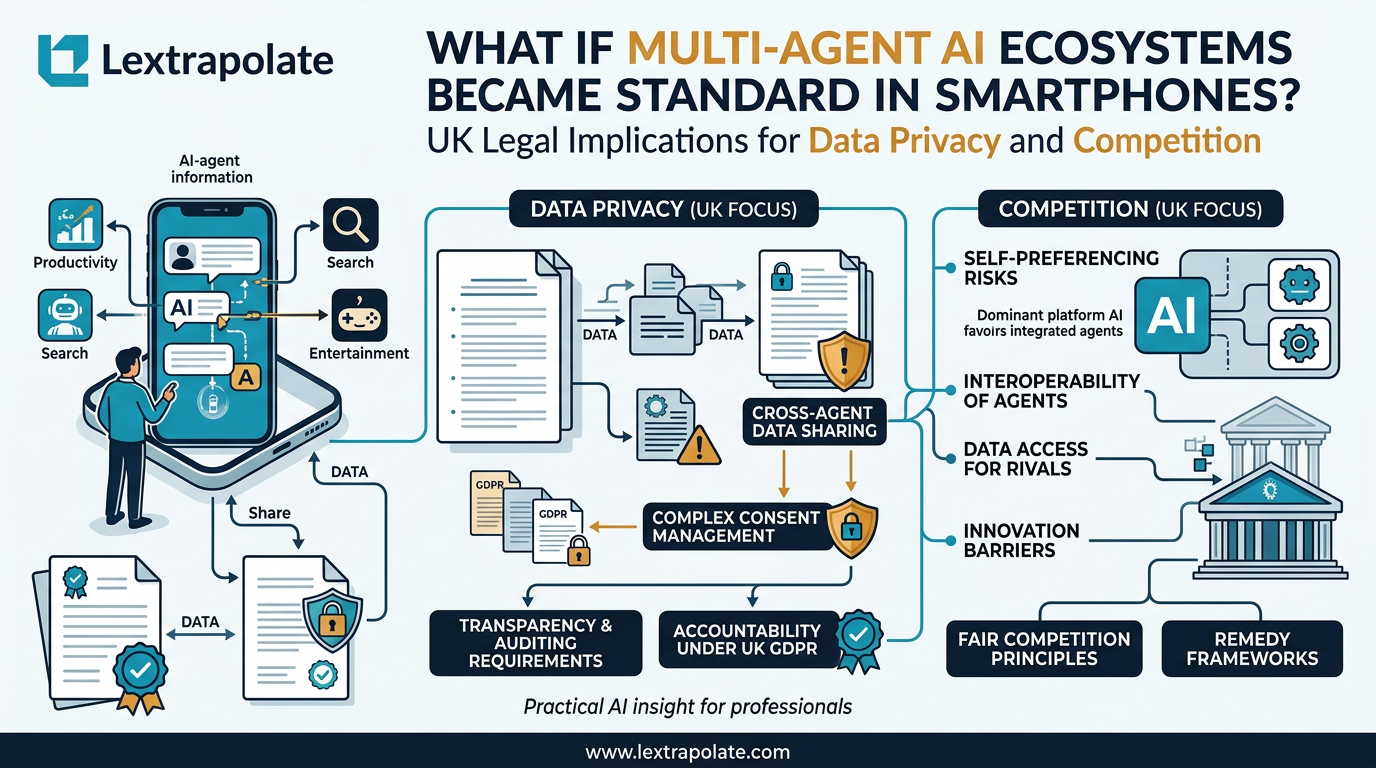

When Your Phone Becomes a Research Assistant: The Legal Questions Multi-Agent AI Raises

AI agents embedded at OS level change how professionals research on the move. The concept is significant. The legal questions it raises are more significant still.

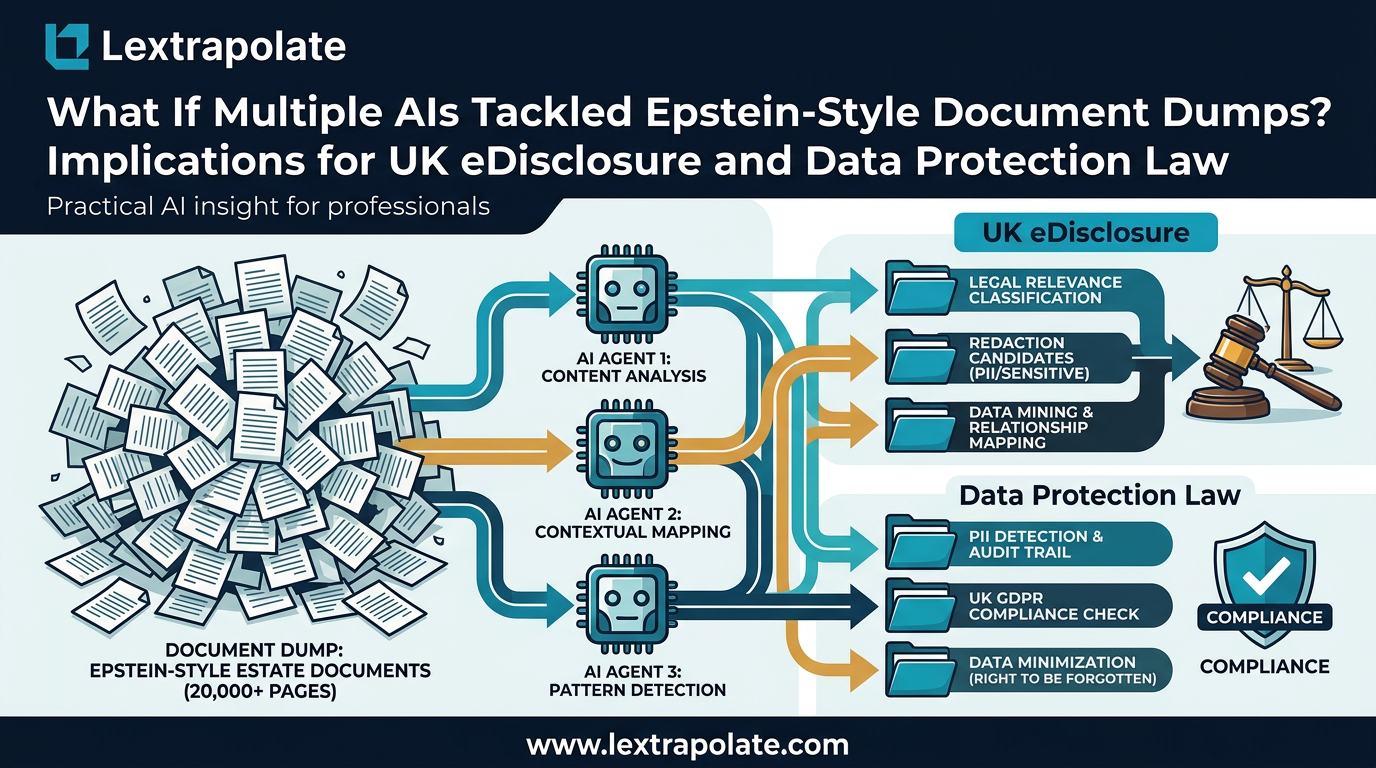

What If Multi-Agent AI Could Search Three Million PDFs Before Breakfast?

PDFs are still the dominant friction point in legal discovery. Multi-agent AI is changing that fast. Here is what practitioners need to understand now.

What If AI Transforms Hiring Overnight? UK Legal and Ethical Risks as Automated Interviews Scale

AI avatars are conducting job interviews at scale. UK employers using these tools face real legal exposure they may not have mapped yet.