When Your Phone Becomes a Research Assistant: The Legal Questions Multi-Agent AI Raises

Picture a barrister dictating a post-hearing note that is automatically cross-referenced against the latest UK Supreme Court updates by Perplexity AI, working at the OS level rather than inside an app. That shift from siloed apps to ambient infrastructure would be a fundamental change in legal knowledge work. The efficiency gains are obvious. The implications for UK GDPR compliance and professional liability are far less well understood.

That is the direction of travel. Whether the specific implementation delivering it is any good is another question entirely.

Samsung announced in late February 2026 that its Galaxy S26 series will allow users to invoke multiple AI assistants at the OS level, each via its own hotword. Perplexity, a real-time web search tool that cites its sources, joins Bixby and Gemini as a system-level agent with access to device functions including Notes, Clock, and Gallery. Samsung is calling this a "multi-agent ecosystem." The idea is that different agents handle different tasks based on their strengths, and the user switches between them by voice rather than by opening an app.

I have not tested this hardware. It has not shipped. What I can assess is the concept, and the concept deserves serious attention from anyone who does knowledge work on a phone.

What Multi-Agent AI at OS Level Actually Means

Most current AI use on smartphones is siloed. You open ChatGPT. You ask a question. You copy the answer somewhere else. The AI operates inside an app boundary.

OS-level integration breaks that boundary. An agent with system-level access can read what is on your screen, interact with other apps, trigger device functions, and act on multi-step instructions without the user manually bridging each step. This is meaningfully different from a chatbot in a browser tab.

For knowledge workers, the practical shift is significant. Research that currently requires switching between a search engine, a notes app, an email client, and a document becomes a single-step voice interaction. Whether any given implementation actually delivers that cleanly remains to be seen. But the architecture makes it possible in a way that was not realistic two years ago.

The multi-agent dimension adds further nuance. Different AI systems have different strengths. A real-time, source-cited search tool is useful for finding current information. A reasoning-focused model may be better for drafting or analysis. A multi-agent framework, if implemented well, means the right tool is called for the right task without the user having to consciously choose.

That said, "if implemented well" is doing a lot of work in that sentence.
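To make the routing idea concrete, here is a minimal sketch of what "the right tool for the right task" could look like in code. It is purely illustrative: the agent names, the keyword lists, and the `route` function are all hypothetical, and nothing here reflects how Samsung or any provider actually selects an agent. As announced, the user chooses by hotword.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Agent:
    """A hypothetical agent and the kind of task it is assumed to suit."""
    name: str
    strength: str


# Illustrative agents only; these are not real product identifiers.
AGENTS = {
    "search": Agent("search_agent", "real-time, source-cited search"),
    "drafting": Agent("reasoning_agent", "drafting and analysis"),
    "device": Agent("device_agent", "notes, clock and other device functions"),
}

# Crude keyword matching stands in for whatever task classifier
# a real implementation would use. Checked in the order listed.
KEYWORDS = {
    "search": ("latest", "find", "look up", "current"),
    "drafting": ("draft", "summarise", "analyse"),
}


def route(request: str) -> Agent:
    """Pick an agent for a spoken request, defaulting to the device agent."""
    text = request.lower()
    for task, words in KEYWORDS.items():
        if any(word in text for word in words):
            return AGENTS[task]
    return AGENTS["device"]
```

Even this toy version shows where "implemented well" gets hard. A request that matches more than one list, or none, goes wherever the ordering and the default happen to send it, and the user never sees that decision being made.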

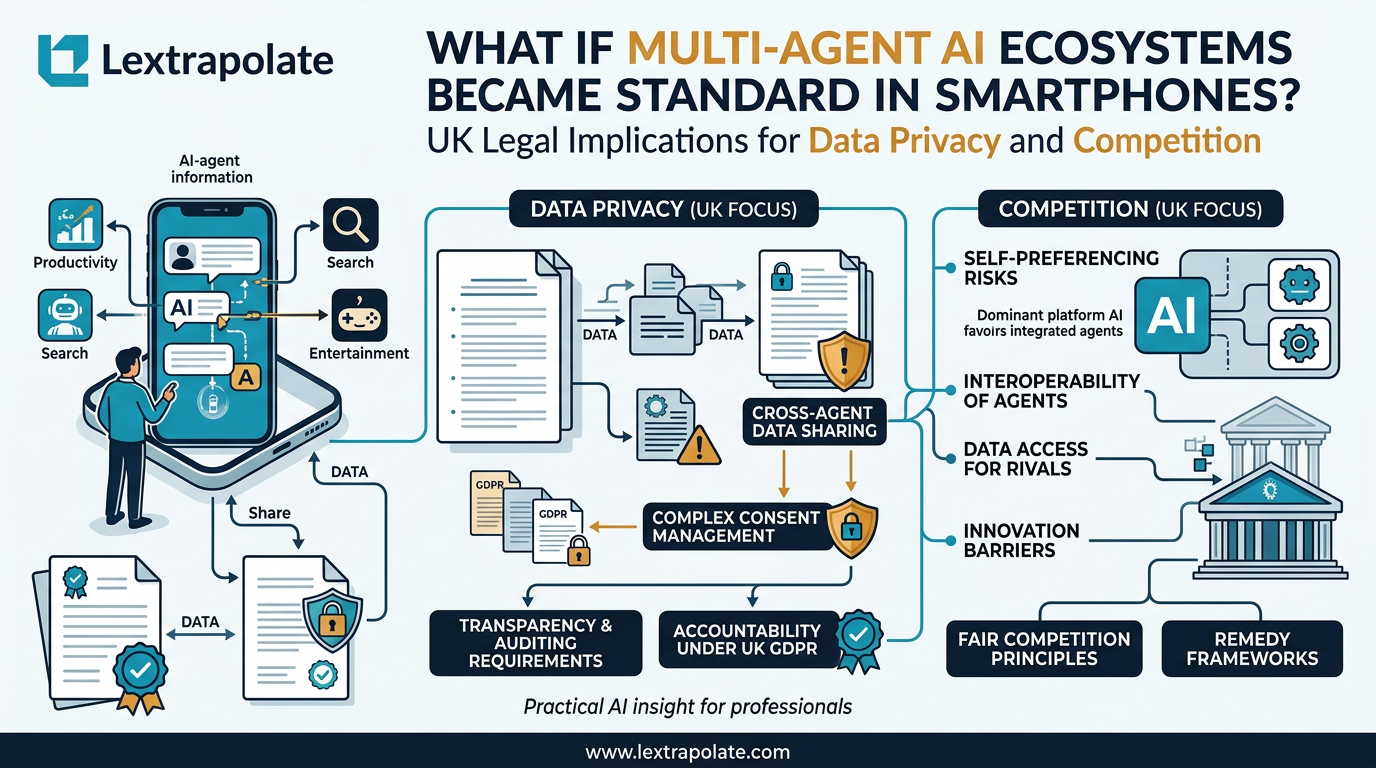

The UK GDPR Questions That Follow Directly

When multiple AI agents operate at OS level, each from a different commercial provider, the data protection questions multiply fast.

Take a straightforward scenario. A solicitor asks a voice assistant to summarise the key points from a client email and add them to a case note. To do that, the agent needs to read the email. Depending on which agent is invoked, that email content may be processed by Perplexity's servers, Google's, Samsung's, or some combination. The user may not know which. The client certainly does not.

Under UK GDPR, the solicitor's firm is likely a data controller in respect of that client data. Processing it through a third-party AI agent requires a lawful basis, a data processing agreement with the provider, and sufficient transparency about what happens to the data. Article 28 of UK GDPR is clear that processors must be bound by contract. When the "processor" is a cloud-based AI agent operating via a consumer smartphone, those obligations do not disappear; they just become harder to discharge.

Law firms subject to SRA regulation face additional obligations. The SRA's guidance on use of technology in legal practice requires firms to consider data security and client confidentiality. Using a consumer-grade AI agent to process client data without understanding what the agent does with that data is unlikely to satisfy that standard.

None of this means multi-agent AI on smartphones is off-limits for professionals. It means the governance work has to happen before the tool becomes part of the workflow, not after.
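One way to picture that governance work is as a register of agents that is checked before any client data goes near them. The sketch below is an assumption-laden illustration, not a compliance tool: the `AgentRecord` fields and the `governance_gaps` function are hypothetical, and they cover only the three requirements named above (lawful basis, a data processing agreement, transparency). Passing the check would not amount to legal clearance.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class AgentRecord:
    """One row in a hypothetical firm register of AI agents."""
    provider: str
    lawful_basis: str      # empty string if none has been recorded
    dpa_in_place: bool     # written contract with the processor (Article 28)
    client_informed: bool  # transparency: has the client been told?


def governance_gaps(record: AgentRecord) -> List[str]:
    """List the outstanding items for one agent; empty means none were found."""
    gaps = []
    if not record.lawful_basis.strip():
        gaps.append("no lawful basis recorded")
    if not record.dpa_in_place:
        gaps.append("no data processing agreement")
    if not record.client_informed:
        gaps.append("client not informed")
    return gaps


def cleared_for_client_data(record: AgentRecord) -> bool:
    """True only when this simplified check finds no gaps."""
    return not governance_gaps(record)
```

A consumer agent invoked by hotword, with no contract and no client notice, would fail every line of this check. The point of the sketch is that the questions can be asked, and answered, before the first voice command.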

Competition and Interoperability Under the DMCC Act 2024

The multi-agent model raises a different set of questions under competition law.

Samsung's approach, at least as described in its announcements, appears to be relatively open. Multiple agents, multiple hotwords, user choice. That framing aligns with the direction the Digital Markets, Competition and Consumers Act 2024 is pushing. The DMCC Act gives the Competition and Markets Authority new powers to designate firms with "strategic market status" and impose conduct requirements, including requirements around interoperability and fair dealing with third parties.

The CMA has already indicated that AI is a priority area. Its ongoing work on foundation models and AI in digital markets signals that how platforms control access to AI functionality will be scrutinised.

The risk, with any multi-agent platform, is that openness at launch becomes closure over time. A device manufacturer that controls which agents receive system-level access, and on what terms, controls the market for AI services on that device. A framework that starts with three agents could become a closed list. Preferential treatment for proprietary agents over third-party alternatives would be exactly the kind of conduct the DMCC Act is designed to address.

For now, the legal framework exists. The question is whether regulators will use it proactively or wait for harm to crystallise.

What This Means on Monday Morning

If you are a lawyer or knowledge worker, the practical question is not "should I buy this phone?" It is what to do now that AI-assisted research is moving from something you deliberately choose to do to something embedded in the tools you already use. That shift requires a governance response, not just a technology response.

Three things worth doing now.

First, find out what your firm's policy says about using consumer AI tools to process client or matter data. If there is no policy, that is the answer you needed.

Second, if you use voice-activated AI on your phone for anything work-related, trace where that data goes. Read the privacy policy of whichever agent you invoke. It will not make comfortable reading, but you need to know.

Third, when evaluating any AI tool for professional use, ask whether it is designed for consumer convenience or professional compliance. Those are not the same product, even when they look identical from the outside.

The idea of multiple specialised AI agents working at OS level, each handling a different type of task, is architecturally sound. It reflects how professionals actually work, moving between different kinds of information and different kinds of task throughout a working day.

But the legal and professional obligations that govern knowledge workers do not soften because a tool is convenient. They apply with the same force whether the tool is a voice command on a smartphone or a dedicated piece of enterprise software.

The technology is moving quickly. The governance frameworks, in most firms, are not keeping pace. That is the gap worth closing.

If you are thinking through how to build AI governance that actually works in practice, rather than just on paper, get in touch. That is the work we do.


Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

What If AI Agents Became Team Members? UK Legal Risks in AI-Native Operations

AI-native companies are treating autonomous agents like colleagues. UK law firms watching this shift need to understand what it means before they follow suit.

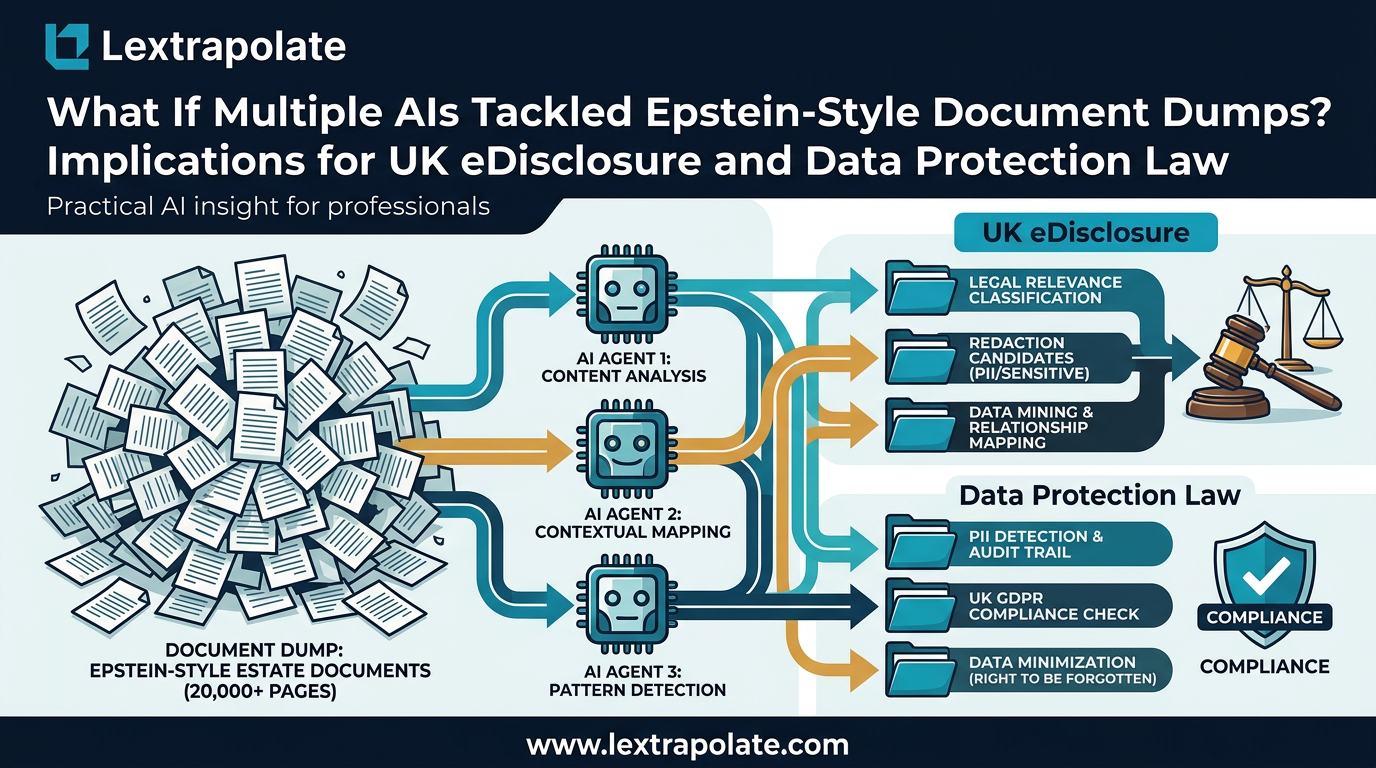

What If Multi-Agent AI Could Search Three Million PDFs Before Breakfast?

PDFs are still the dominant friction point in legal discovery. Multi-agent AI is changing that fast. Here is what practitioners need to understand now.

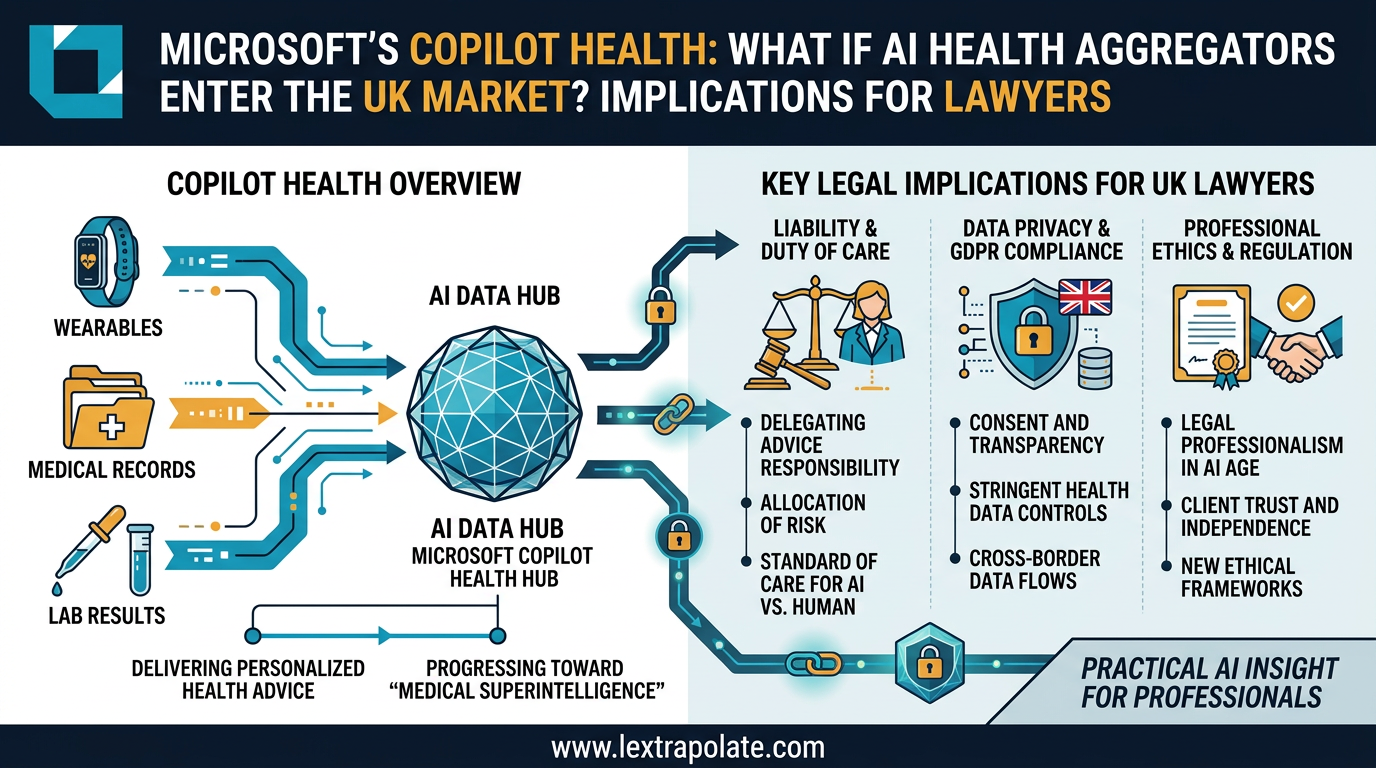

When AI Knows Your Health Better Than Your GP: What Multi-Modal Data Aggregation Means for Lawyers

Microsoft's AI health assistant reveals something bigger than healthcare tech: AI that synthesises complex multi-modal data is coming for legal practice too.