AI Hallucinations in Court: Why UK Lawyers Face Criminal Liability and How to Avoid SRA Sanctions

A paralegal drafts a skeleton argument using ChatGPT. It includes three case citations. Two of them do not exist. The barrister signs the document without checking. The judge notices.

That is not a hypothetical. By November 2025, the UK had recorded 24 documented incidents of AI-generated fabricated citations being submitted to courts or tribunals. Globally, the count exceeded 700. And the consequences have moved well beyond embarrassment. Thanks to Matthew Lee for continuing to monitor these incidents systematically.

The High Court has warned, in terms, that submitting AI-fabricated legal authorities could amount to contempt of court or perverting the course of justice. That is criminal liability for what many practitioners still treat as a workflow shortcut. The October 2025 judicial guidance refresh from the Judiciary of England and Wales signals that the bench is not waiting for the profession to catch up. It is codifying expectations now.

The trajectory is clear. What began as cautionary Bar Council warnings in January 2024 has escalated into active enforcement and the prospect of regulatory referral. The gap between how lawyers are actually using generative AI and how the courts expect them to use it is widening, not narrowing.

The regulatory and criminal exposure is real

Start with Felicity Harber v HMRC [2023] UKFTT 1007 (TC). The First-tier Tribunal found that fabricated case citations had been submitted. The judgment treated the submission of invented authorities as a serious matter, and later decisions have made the consequences explicit: sanctions, regulatory referral, and potential criminal liability. It was an early marker. It will not be the last.

The SRA's Standards and Regulations require solicitors to act with honesty (Principle 4) and integrity (Principle 5), and the Code of Conduct requires them to provide a competent service (paragraph 3.2). Citing an authority that does not exist puts all three in issue. It does not matter that the fabrication originated from a machine rather than the lawyer's imagination. The solicitor's name is on the document. The duty to verify is theirs.

The BSB's position is equivalent. Core Duty 3 requires barristers to act with honesty and integrity. Core Duty 7 requires them to provide a competent standard of work. A barrister who submits a skeleton argument containing hallucinated case law has, on the face of it, failed both duties.

VinciWorks' analysis frames this correctly as a professional indemnity insurance problem too. Bar Council warnings treat hallucination misuse as potential gross negligence. If your insurer classifies your conduct as grossly negligent rather than merely negligent, the coverage implications are significant. Policy exclusions may bite. Excesses may increase. Renewal terms may harden.

The criminal angle deserves separate emphasis because it is still underappreciated. Contempt of court carries a maximum sentence of two years' imprisonment under the Contempt of Court Act 1981. Perverting the course of justice is a common law offence with a maximum of life imprisonment. These are not theoretical risks deployed for rhetorical effect. The High Court raised them deliberately. When a judge tells you in open court that fabricated citations might constitute a criminal offence, the prudent response is to take that seriously.

Why training remains the central problem

The Law Society's guidance on generative AI is helpful but general. It tells practitioners to verify outputs and understand limitations. What it cannot do is make people actually do those things.

The persistent pattern across documented incidents is the same. Junior lawyers or paralegals use consumer-grade AI tools. They do not understand why large language models hallucinate. They do not know that LLMs generate statistically plausible text rather than retrieving verified facts. They treat the output as a research result rather than a draft that requires independent verification against primary sources.
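A deliberately crude analogy may help here. The Python sketch below is not how any real LLM works. It only illustrates how output can be perfectly formed without ever being looked up anywhere. The party names, the court codes and the function name plausible_citation are all invented for illustration.

```python
import random

# Hypothetical "patterns" of the kind that recur in legal text.
# Nothing here is a real case list; every value is an illustrative assumption.
PARTIES = ["Smith", "Jones", "Patel", "Taylor", "Brown"]
COURTS = ["EWCA Civ", "EWHC", "UKSC", "UKFTT"]


def plausible_citation(rng: random.Random) -> str:
    """Assemble a citation-shaped string from common patterns.

    There is no lookup step: nothing checks that the case exists.
    """
    claimant, defendant = rng.sample(PARTIES, 2)  # two distinct names
    year = rng.randint(2005, 2023)
    court = rng.choice(COURTS)
    number = rng.randint(1, 2500)
    return f"{claimant} v {defendant} [{year}] {court} {number}"


# Every line printed looks like an authority. None has been verified.
rng = random.Random(0)
for _ in range(3):
    print(plausible_citation(rng))
```

The formatting is correct every time, which is exactly why the output cannot be trusted on sight. Only a check against a primary source tells you whether a case is real.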

Supervising lawyers compound the problem by not checking. The work product looks polished. The citations are formatted correctly. The legal reasoning reads plausibly. The whole point of LLM-generated text is that it sounds authoritative, whether or not it is accurate. That is the feature that makes hallucinations dangerous rather than merely annoying.

The Barrister Group's analysis rightly identifies this as a systemic training failure. Firms are allowing or encouraging AI adoption without ensuring that users understand the technology's fundamental characteristics. You would not hand a trainee a new case management system without training. Generative AI is more consequential and more likely to produce errors that are invisible on their face.

Professional competence now requires a technical understanding of LLM limitations. That is not a technology opinion. It is a regulatory reality, given the judicial and SRA expectations already in force.

The burden the courts are absorbing

There is a dimension to this that gets less attention than it should. Every fabricated citation that reaches a tribunal forces a judge to verify it independently. That takes time. It consumes judicial resources that are already stretched. The Upper Tribunal has expressed concern about this explicitly.

The systemic cost falls on the state and on other court users. Cases are delayed. Listings are disrupted. Judges who should be deciding disputes are instead checking whether Smith v Jones [2019] EWCA Civ 1234 actually exists. (It does not.)

Litigants in person face a particular problem. They are the most likely to use consumer AI tools for legal research and the least equipped to verify the output. The Society for Computers and Law has documented cases where unrepresented litigants submitted hallucinated citations in good faith. The judicial management challenges are real, and they create potential appeal points on procedural fairness grounds that represented parties will need to deal with.

For the courts, this is not just an individual disciplinary matter. It is a systemic threat to the integrity of the litigation process.

What to do on Monday morning

Concrete steps. No generalities.

1. Verify every citation against a primary source. BAILII, ICLR, Westlaw, LexisNexis. If you cannot find the case on a reputable legal database, it does not exist. Do not cite it.

2. Never treat LLM output as research. Treat it as a first draft that needs independent confirmation of every factual and legal claim. This applies whether you are using ChatGPT, Claude, Copilot, or a legal-specific AI tool. Hallucination rates vary between models and between queries, but no current LLM is hallucination-free.

3. Train everyone who touches AI. Not a one-hour webinar twelve months ago. Ongoing, practical training that covers what hallucinations are, why they occur, and how to catch them. Paralegals. Trainees. Associates. Partners. If they use the tools, they need the training.

4. Establish a firm-wide verification protocol. Document it. Make it mandatory. Audit compliance. When the SRA asks what controls you had in place, "we told people to be careful" will not be sufficient.

5. Supervisors must check AI-assisted work. If you sign a document, you own it. The Harber case and the High Court's warnings make clear that delegation does not discharge the duty. Review the citations yourself. Every time.

6. Consider whether consumer AI tools are appropriate for legal work at all. Legal-specific AI tools with retrieval-augmented generation (RAG) architectures, connected to verified legal databases, reduce hallucination risk. They do not eliminate it, but they materially improve reliability compared to general-purpose chatbots.
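Part of a verification protocol can be supported with a simple script. The Python sketch below is one possible starting point, not a complete control. It pulls neutral citations out of a draft and builds a checklist that a human must complete against a primary source. It does not and cannot confirm that a case exists. The regular expression covers only a handful of common neutral citation formats, so it will miss others, and the names CitationCheck, extract_citations, build_checklist and outstanding are hypothetical.

```python
import re
from dataclasses import dataclass

# Assumption: matches common neutral-citation shapes only,
# e.g. "[2019] EWCA Civ 1234" or "[2023] UKFTT 1007 (TC)".
# Law report citations and other courts are NOT covered.
NEUTRAL_CITATION = re.compile(
    r"\[\d{4}\]\s+"
    r"(?:UKSC|UKPC|UKHL|EWCA\s+(?:Civ|Crim)|EWHC|UKUT|UKFTT)\s+"
    r"\d+"
    r"(?:\s+\([A-Za-z]+\))?"
)


@dataclass
class CitationCheck:
    citation: str
    verified: bool = False  # set to True only by a person who has read the case
    checked_by: str = ""    # who verified it
    source: str = ""        # where it was found, e.g. the database used


def extract_citations(text: str) -> list[str]:
    """Return each distinct neutral citation in order of first appearance."""
    found: list[str] = []
    for match in NEUTRAL_CITATION.finditer(text):
        citation = " ".join(match.group(0).split())  # normalise whitespace
        if citation not in found:
            found.append(citation)
    return found


def build_checklist(text: str) -> list[CitationCheck]:
    """One unverified checklist entry per citation found in the draft."""
    return [CitationCheck(citation=c) for c in extract_citations(text)]


def outstanding(checklist: list[CitationCheck]) -> list[str]:
    """Citations still lacking a verifier, a source, or a verified flag."""
    return [
        item.citation
        for item in checklist
        if not (item.verified and item.checked_by and item.source)
    ]


draft = (
    "See Felicity Harber v HMRC [2023] UKFTT 1007 (TC) and "
    "Smith v Jones [2019] EWCA Civ 1234."
)
checklist = build_checklist(draft)

# Hypothetical log entry: a reviewer records a check on the first citation.
checklist[0].verified = True
checklist[0].checked_by = "A. Reviewer"
checklist[0].source = "primary source consulted (illustrative entry)"

# Anything printed here has not been verified and should not be filed.
print(outstanding(checklist))
```

The design choice is that the script never marks anything as verified on its own. It produces the list and the audit trail; the lawyer signing the document does the checking.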

The position is straightforward

AI is a useful tool for legal work. I use it daily. I advise others on how to use it effectively. But a useful tool used without competence becomes a liability, and the consequences in this context are not just professional. They are criminal.

The judiciary has told you what it expects. The SRA and BSB have the regulatory tools to enforce those expectations. The case law is accumulating. The question for every practitioner and every firm is whether their training and verification protocols match the standard the courts now require.

If you are unsure about the answer, fix it before a judge asks you the question.

Sources

1. The increasing legal liability of AI hallucinations: Why UK law firms face rising regulatory and litigation risk

2. Oops! AI Made a Legal Mistake: Now What? AI Hallucinations in Legal Practice

3. Artificial Intelligence (AI) – Judicial Guidance (October 2025)

4. False citations: AI and 'hallucination' - Society for Computers & Law

5. Generative AI – the essentials


Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

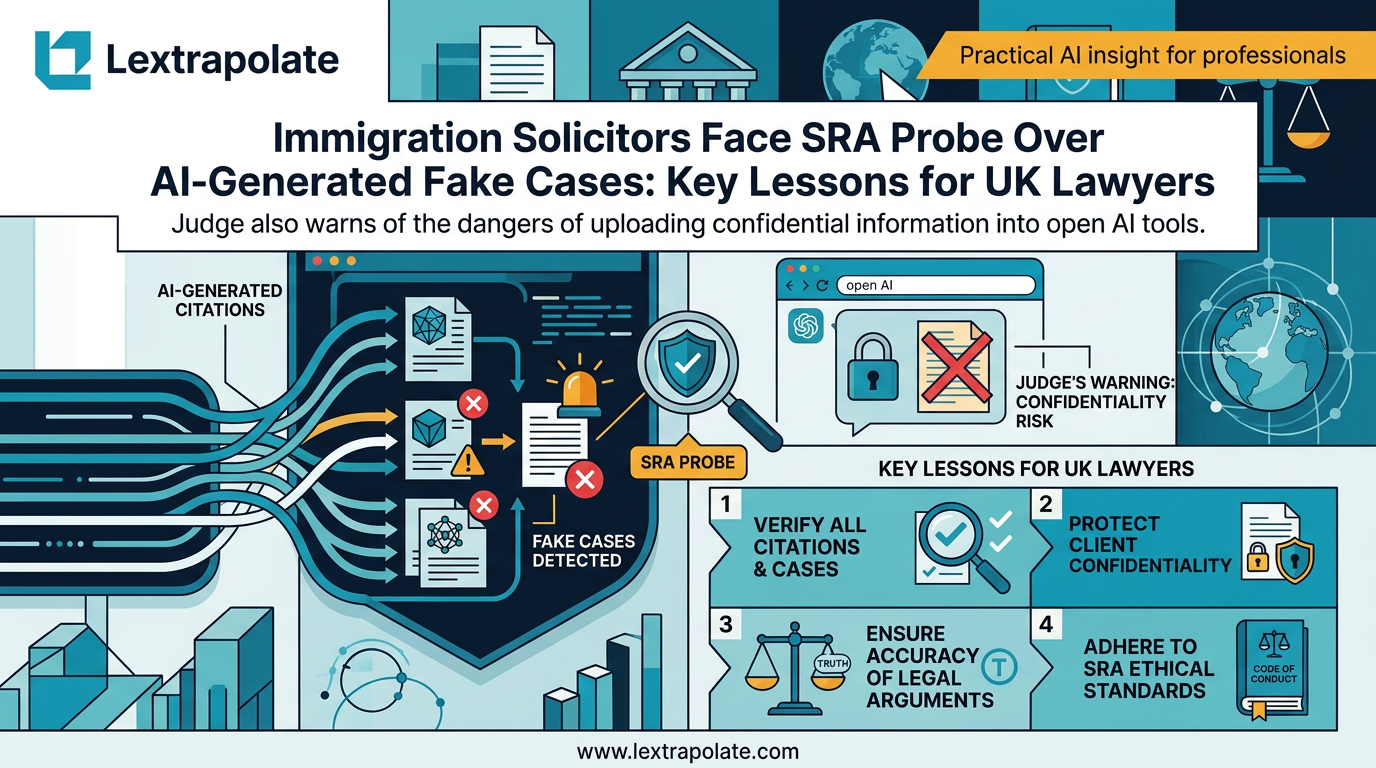

What If Your AI Tool Just Ended Your Career? The Citation Trap Lawyers Must Take Seriously

Two solicitors face SRA investigation after fake AI-generated case citations reached an Upper Tribunal. Here is what every lawyer needs to understand.

What If AI Training Became a Professional Obligation for Lawyers?

Hotshot and Legora's partnership raises a sharper question: when does AI training shift from good practice to regulatory requirement?

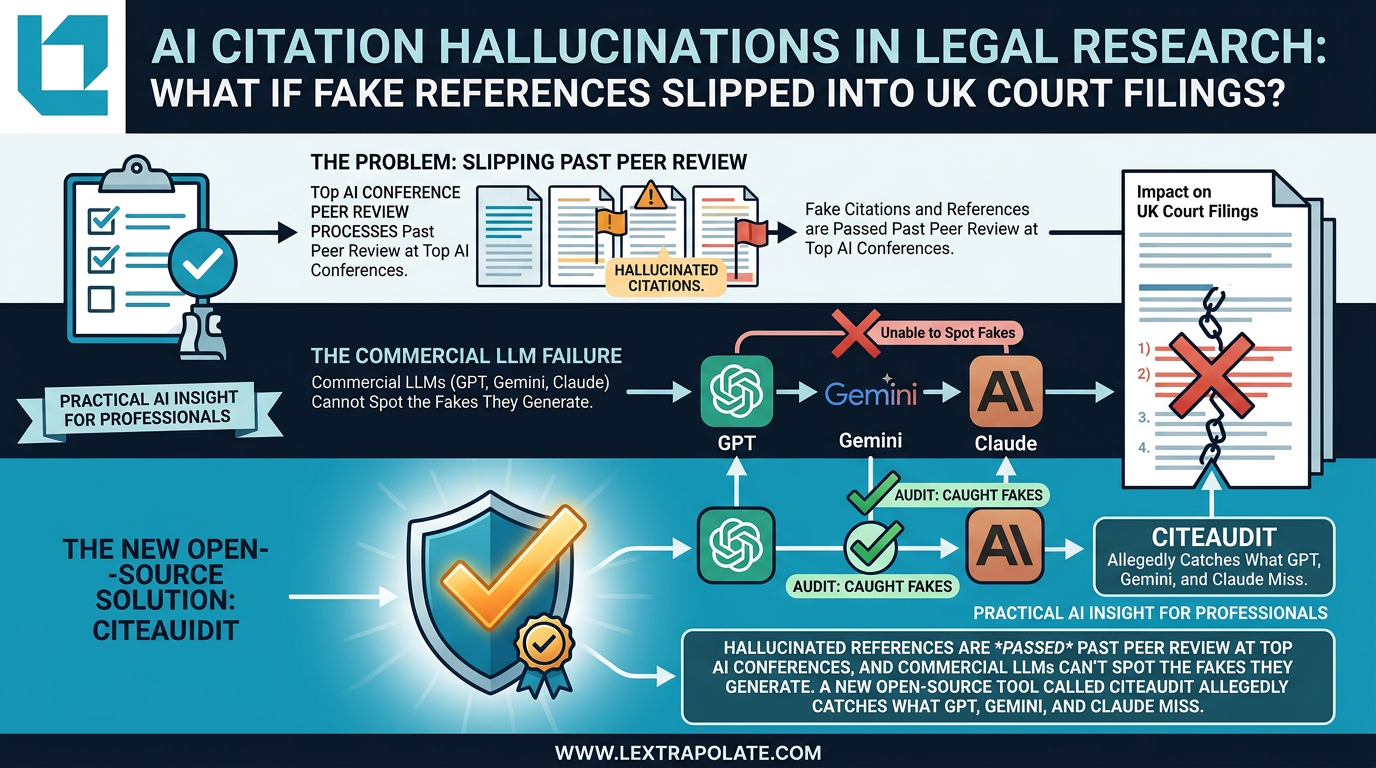

AI Citation Hallucinations in Legal Research: The Verification Problem Nobody Has Solved Yet

Fake citations are slipping past peer review at AI conferences. If that's happening in academia, what's the risk in legal practice?