How Many Lawyers Actually Understand AI? Fewer Than You Think.

Ask a room full of lawyers whether they use AI and most hands go up. Ask them to explain what a large language model actually does (how it generates text, where it hallucinates, why it confidently invents case citations) and the room goes quiet.

That silence should worry us all.

The gap between adoption and understanding

There is a growing disconnect in the legal profession. Firms are subscribing to AI tools. Lawyers are experimenting with ChatGPT and Claude. Some are drafting witness statements, summarising bundles, and researching points of law with AI assistance. But very few have any structured understanding of how these tools work, where they fail, or what the professional obligations around their use actually are.

This is not about becoming a computer scientist. Nobody expects a barrister to understand transformer architecture at the mathematical level. But there is a baseline of AI literacy that every practising lawyer now needs, and most do not have it.

Why this matters on Monday morning

Consider the practical risks. A solicitor uses AI to draft a letter before action. The tool inserts a reference to a case that does not exist. The solicitor does not check. The letter goes out. That is not a technology failure. That is a competence failure.

Or a barrister uses AI to summarise a lengthy expert report for a skeleton argument. The summary omits a critical caveat. The barrister relies on the summary without reading the original. Opposing counsel raises it at trial.

These are not hypothetical scenarios. They are happening now. And the regulatory framework is clear: the professional is responsible for the output, regardless of the tool used to produce it.

What the regulators expect

The SRA has been characteristically measured but unambiguous. Solicitors must ensure they are competent to use the technology they deploy. The BSB takes a similar position for barristers. If you are using AI in your practice, you need to understand it well enough to identify when it goes wrong.

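Practice Direction 57AC already requires the relevant legal representative to certify compliance for trial witness statements in the Business and Property Courts, including proper practice in how those statements were prepared. It is only a matter of time before similar disclosure obligations extend to other AI-assisted documents.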

The direction of travel is clear. Ignorance is not a defence.

Three things every lawyer should know about AI

You do not need a PhD. You need to understand three things.

First, large language models predict the next word (strictly, the next token, which may be a word or a fragment of one). They do not "know" anything. They generate statistically plausible text based on patterns in training data. This is why they can produce beautifully written nonsense, and why you must verify everything.
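For readers who want to see the idea in miniature, here is a toy sketch in Python. It is not how a real large language model is built: it only counts which word follows which in three invented sentences, then samples from those counts. The corpus and the names `train_bigrams` and `generate` are assumptions made up for this illustration.

```python
import random
from collections import Counter, defaultdict

# Invented training text for illustration only; not real case law.
CORPUS = (
    "the court held that the claim was dismissed . "
    "the court held that the appeal was allowed . "
    "the tribunal held that the claim was allowed ."
)

def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def generate(follows, start, max_words=12, seed=0):
    """Repeatedly sample a next word in proportion to how often it was seen.

    Nothing in this loop checks whether the resulting sentence is true.
    """
    rng = random.Random(seed)
    out = [start]
    for _ in range(max_words - 1):
        options = follows.get(out[-1])
        if not options:
            break
        words, counts = zip(*options.items())
        out.append(rng.choices(words, weights=counts, k=1)[0])
        if out[-1] == ".":
            break
    return " ".join(out)

model = train_bigrams(CORPUS)
print(generate(model, "the"))
```

Depending on the seed, this toy can produce "the tribunal held that the appeal was dismissed", a fluent sentence that appears nowhere in its training text. Real models are vastly more sophisticated, but the same principle explains the invented citation: plausible continuation, with no check against reality.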

Second, AI tools have no concept of truth. They cannot distinguish between a real case citation and a plausible-sounding invented one. The confidence of the output bears no relation to its accuracy.

Third, context windows and training data have limits. An AI tool may not have access to the most recent case law. It may not understand the nuances of a particular jurisdiction. It is a starting point, not an answer.
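The context window point can also be shown with a deliberately simplified Python sketch. It assumes that words stand in for tokens and that anything beyond the window is simply cut off; real tools count tokens and may split or search a long document instead. The function name `fit_to_window` and the sample report text are invented for this illustration.

```python
def fit_to_window(text, window_words):
    """Keep only the first `window_words` words of the text.

    Assumption: words stand in for tokens, and overflow is silently dropped.
    Returns the visible text and the number of words that were dropped.
    """
    words = text.split()
    kept = words[:window_words]
    return " ".join(kept), len(words) - len(kept)

# Invented expert report: three sentences of opinion, then a caveat at the end.
report = (
    "Opinion: the defect caused the loss. " * 3
    + "Caveat: this assumes the 2019 survey is accurate."
)

visible, dropped = fit_to_window(report, window_words=18)
print(visible)
print(f"{dropped} words never reached the model")
```

In this sketch the caveat falls outside the window, so any summary built from the visible text cannot mention it. That is one reason a summary is no substitute for reading the original.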

What good looks like

The firms getting this right are investing in structured training, not just tool subscriptions. They are creating AI acceptable use policies. They are building review workflows that treat AI-generated content as a first draft requiring professional verification.

They are also talking openly about failures. The lawyer who catches an AI hallucination and flags it to colleagues is more valuable than the one who quietly deletes it and hopes nobody noticed.

The opportunity

Here is the encouraging part. Lawyers who genuinely understand AI, not just how to use it but how it works and where it breaks, have an enormous competitive advantage. They are faster. They are more efficient. And critically, they are safer.

The profession needs fewer people who say "I use AI" and more who can say "I understand AI well enough to use it responsibly."

That is not a high bar. But it is one that most of the profession has not yet cleared.

If your firm is in that position, that is not a criticism. It is an invitation to do something about it.


Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

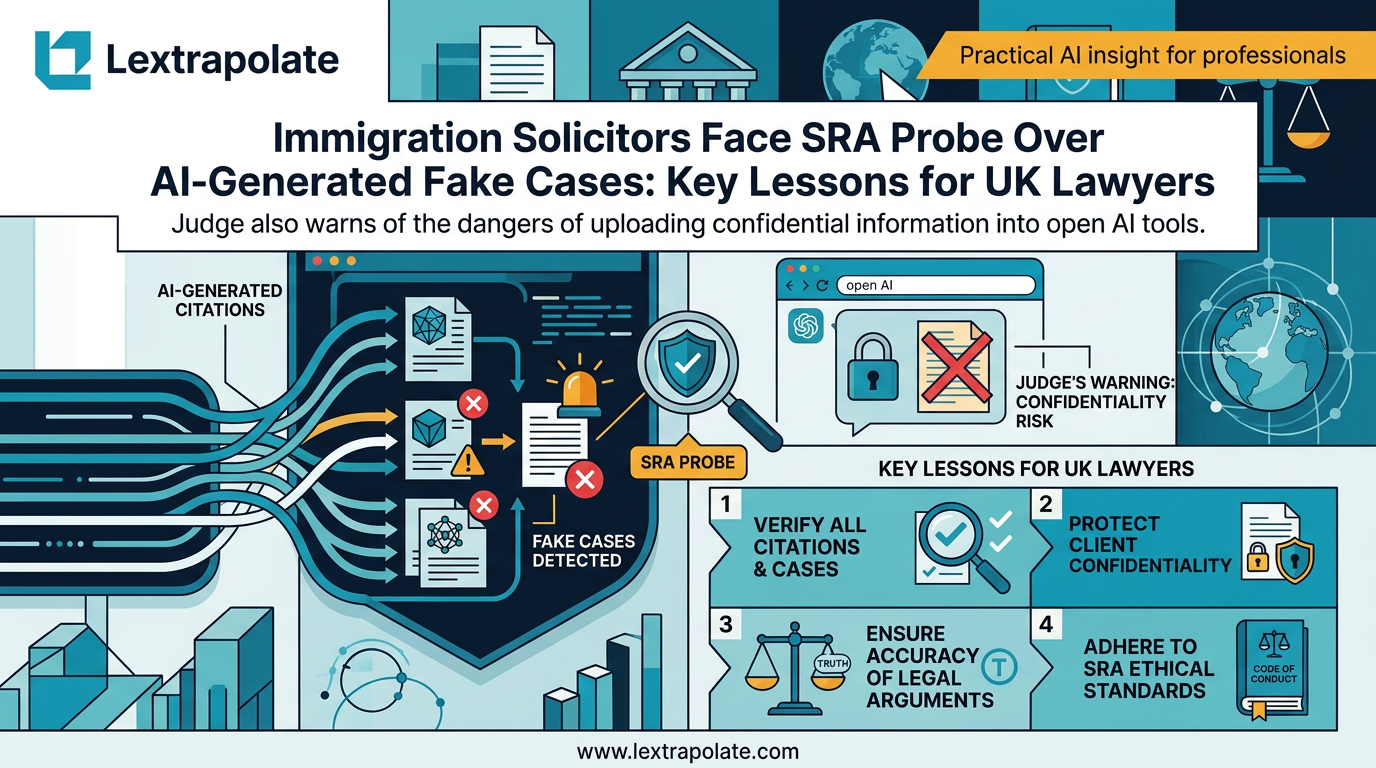

What If Your AI Tool Just Ended Your Career? The Citation Trap Lawyers Must Take Seriously

Two solicitors face SRA investigation after fake AI-generated case citations reached an Upper Tribunal. Here is what every lawyer needs to understand.

What If AI Training Became a Professional Obligation for Lawyers?

Hotshot and Legora's partnership raises a sharper question: when does AI training shift from good practice to regulatory requirement?

AI Hallucinations in Court: Why UK Lawyers Face Criminal Liability and How to Avoid SRA Sanctions

UK lawyers citing AI-fabricated cases risk contempt of court charges. With 24 documented incidents and counting, professional competence now demands technical literacy.