What if you could let AI audit your code before deploying it? Claude Code Security and what it means for lawyer-builders

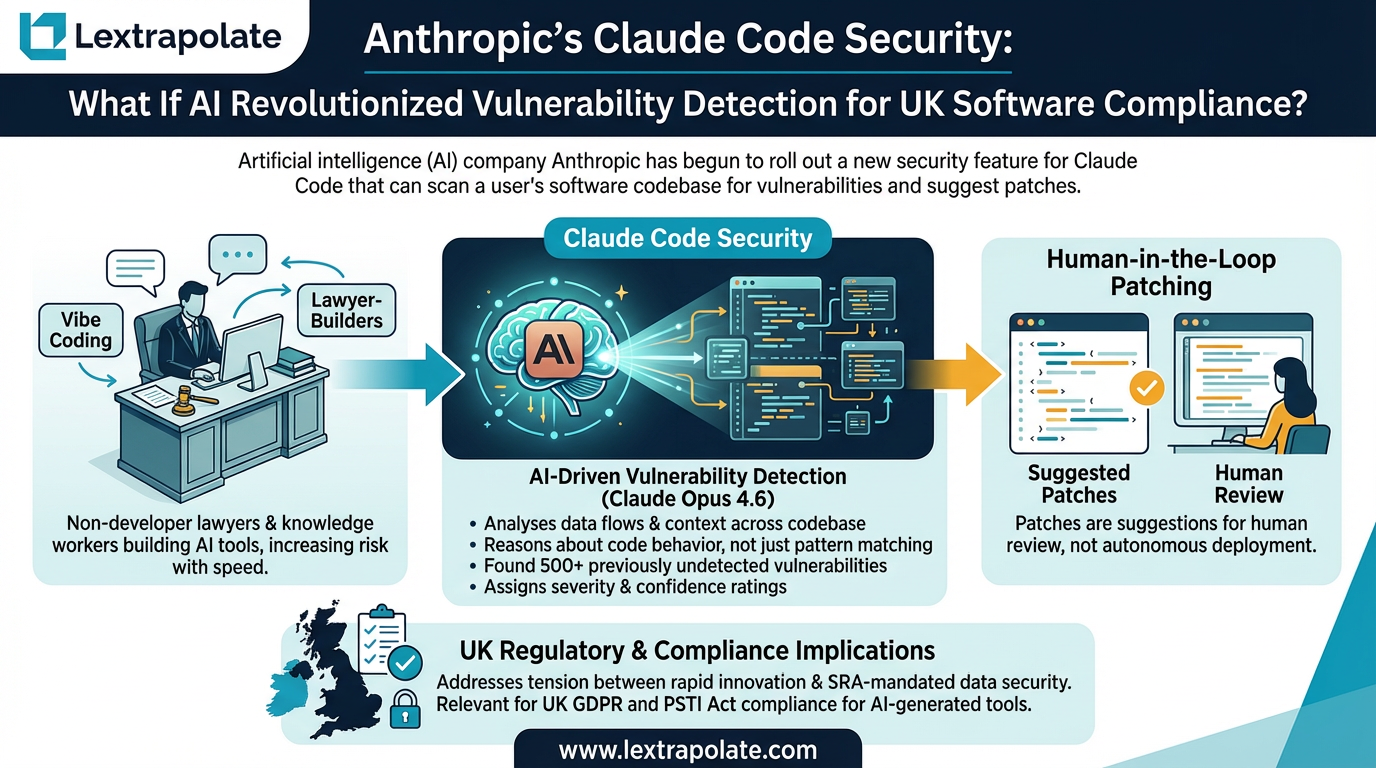

Claude Code Security is a vulnerability scanning feature from Anthropic that searches codebases, including AI-generated ones, for security flaws and suggests patches for human review. For UK lawyers "vibe coding" their own applications, it speaks directly to the tension between building quickly and meeting the confidentiality and data security obligations imposed by regulators such as the SRA.

A growing cohort of lawyers, consultants, and other knowledge workers are building their own lightweight AI tools without a professional developer in sight. The practice has become known as "vibe coding", a phrase coined by Andrej Karpathy, a co-founder of OpenAI, in February 2025. The productivity gains are real. So are the risks. Claude Code Security is Anthropic's attempt to reduce the risks without sacrificing the gains.

This is a vendor announcement at this stage, and the feature is in limited research preview for Enterprise and Team customers. Independent testing does not yet exist. But the underlying concept is worth taking seriously, and the implications for non-developer builders in regulated professions are significant enough to think through now.

What Claude Code Security actually does

Traditional code scanners work by pattern matching. They look for known bad patterns in known places. They miss novel vulnerabilities because novel vulnerabilities, by definition, do not match existing patterns.
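To make that limitation concrete, here is a deliberately simplified Python toy, not a model of any real product: a "scanner" that flags source text matching a short, hypothetical list of dangerous-call signatures. It catches a direct `eval(` call but misses identical behaviour when the call is assembled at runtime. Real static analysis tools are far more sophisticated than a list of regular expressions, but the sketch shows why a fixed list of signatures struggles with code that behaves dangerously without looking dangerous.

```python
import re

# Hypothetical signature list, for illustration only: known-dangerous call patterns.
SIGNATURES = [
    re.compile(r"\beval\s*\("),
    re.compile(r"\bos\.system\s*\("),
    re.compile(r"\bpickle\.loads\s*\("),
]


def signature_scan(source: str) -> list[str]:
    """Return the pattern text of every signature found anywhere in the source."""
    return [sig.pattern for sig in SIGNATURES if sig.search(source)]


# Obviously dangerous: the text matches a known signature.
obvious = "result = eval(user_input)"

# Same behaviour, different spelling: the dangerous function is looked up
# at runtime, so no fixed signature matches the source text.
disguised = (
    "import builtins\n"
    "fn = getattr(builtins, 'ev' + 'al')\n"
    "result = fn(user_input)\n"
)

print(signature_scan(obvious))    # flagged: the eval signature matches
print(signature_scan(disguised))  # → []
```

A tool that reasons about data flow would instead ask where `user_input` ends up and what is done with it, which is the kind of analysis Anthropic says Claude Code Security performs.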

Claude Code Security takes a different approach. According to Anthropic, it uses Claude Opus 4.6 to analyse data flows and context across a codebase, reasoning about how code behaves rather than matching it against a list of signatures. The company claims it found over 500 previously undetected vulnerabilities in open-source projects during internal testing, including work with the Pacific Northwest National Laboratory. It assigns severity and confidence ratings to findings, applies multi-stage verification to reduce false positives, and suggests patches for human review.

That last part matters. The patches are suggestions. A human reviews them. This is not an autonomous fix-and-deploy system. Whether that framing holds in practice, as the feature matures and time-pressured users are tempted to approve suggestions quickly, is a different question.

The market reacted sharply to the announcement. Shares in listed cybersecurity vendors fell, as investors read it as AI commoditising a function that specialist vendors have charged significant fees to perform. That reaction is informative, though not conclusive: if professional investors believe this could displace enterprise security tooling, the capability is unlikely to be trivial.

The legal exposure for lawyer-builders

Most lawyers who build internal tools are not thinking about the Product Security and Telecommunications Infrastructure Act 2022. They probably should be.

The PSTI Act imposes security obligations on manufacturers, importers, and distributors of "relevant connectable products". Its regime is aimed primarily at consumer connectable products, so an internal tool that never leaves the organisation is unlikely to fall within it directly. But the Act reflects a broader legislative direction: software that connects to networks, handles data, or forms part of a service chain carries security obligations that cannot simply be delegated to the AI that helped write it.

If a lawyer-built tool suffers a breach, the question of liability for the AI-generated code will land somewhere. The tool's creator is the obvious candidate. The fact that Claude wrote the original code, or that Claude Code Security suggested the patch that failed, does not transfer responsibility. You built it. You deployed it. You are accountable for it.

UK GDPR adds a separate layer. Most legal tools touch personal data somewhere, and if your codebase contains it (in test fixtures, sample documents, logs, or hard-coded records, for example), scanning it with a third-party AI service means sending that data to the provider, which raises data processor questions. Review Anthropic's enterprise terms before you feed any code that contains or handles client data through a scanning feature. This is not a reason to avoid the tool. It is a reason to do the due diligence before using it.

The vibe coding problem in regulated professions

Vibe coding is a useful shorthand for AI-assisted development without formal software engineering discipline. It is how most lawyer-builders work. You describe what you want, Claude writes it, you test it informally, you ship it. The feedback loop is fast. The security review is often non-existent.

This matters more in law than in many other sectors. Client confidentiality is not optional. Under UK GDPR, data minimisation and appropriate security for personal data are legal obligations, not best practices. If a tool leaks data because of a vulnerability that a routine scan would have caught, "I didn't know how to audit it" is not a defence that will satisfy the ICO or a client whose data was exposed.

Claude Code Security, if it performs as described, makes that excuse harder to sustain and easier to avoid. A tool that can scan your codebase for vulnerabilities before deployment, flag severity levels, and suggest patches is exactly what a non-developer builder needs. It will not replicate a full security audit by a qualified penetration tester. It is not meant to. But it raises the floor for people who currently have no floor at all.

The 500+ zero-day claim is striking. Zero-days are vulnerabilities unknown to the software's maintainers, so no fix exists at the point they are found. Finding them generally requires reasoning about code behaviour, not just matching against databases of known flaws. If that capability is genuinely available in a code assistant, the implication is that AI-assisted development and AI-assisted security review are becoming part of the same workflow rather than separate disciplines requiring separate specialists.

What this means

If you are building tools in Claude Code for internal use in a professional services firm, here is the practical question: what is your current security review process?

For most people reading this, the honest answer is "none" or "I looked at it and it seemed fine". Claude Code Security, once it is out of research preview, could offer a structured alternative. It will not be a substitute for professional security advice on anything mission-critical. But for the lightweight automation tools that knowledge workers are shipping with increasing frequency, it could meaningfully reduce risk.

Watch for the general availability announcement. When it arrives, treat it as a required step in any deployment checklist, not an optional extra. Build the habit before it is a regulatory requirement rather than after.

If you are an Enterprise or Team customer now, request access to the research preview. Run it on something you have already deployed. The findings may be instructive.

One other thing: review your data processing agreements with Anthropic before scanning codebases that contain or touch personal data. This step is not dramatic, and it need not take long. Skipping it could cost considerably more.

The productivity case for lawyer-built tools is established. The security case for those same tools is still being made. Claude Code Security, assuming it delivers on Anthropic's claims, is a meaningful development in that argument. Not because AI-generated security advice is infallible, but because "I had no way to check" is becoming a less credible position with each new capability that lands.

You have the tool. Audit what you have built with it.


Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

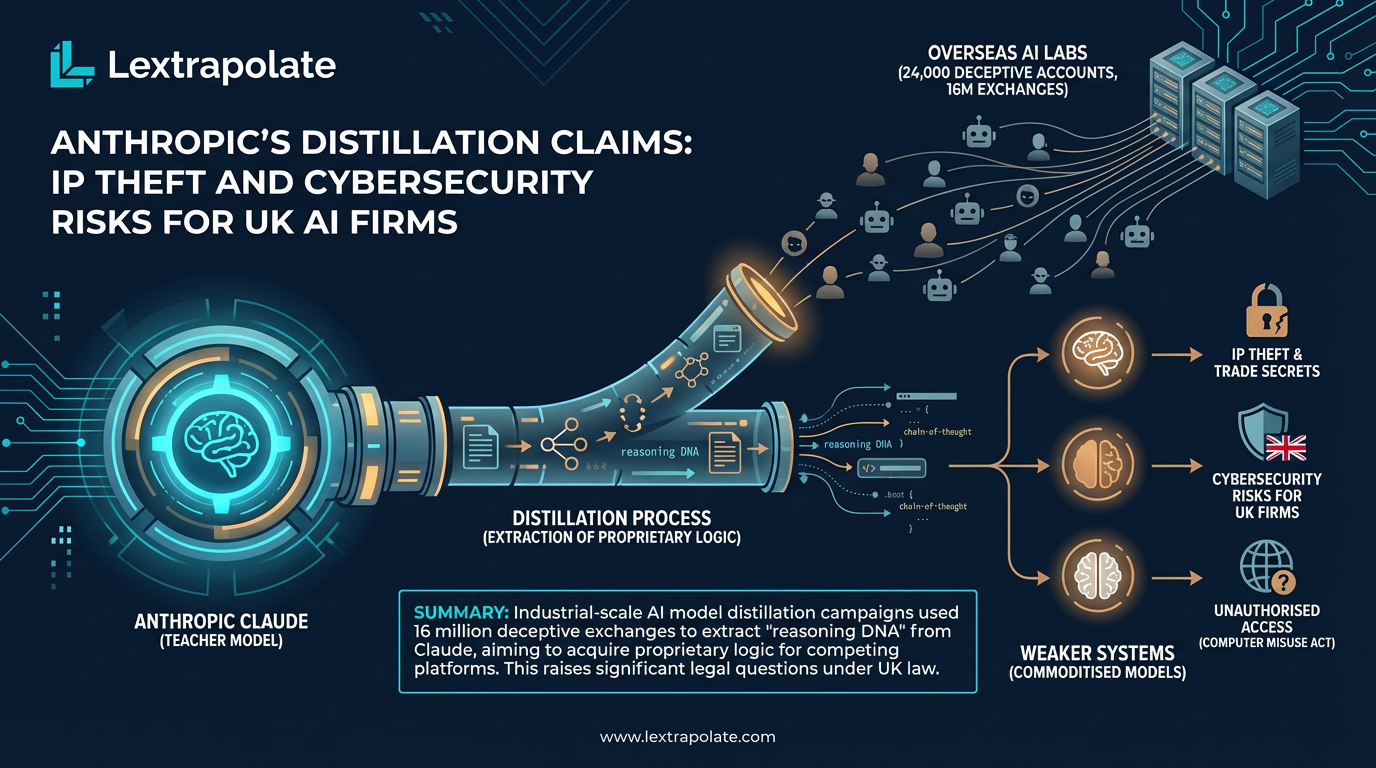

Anthropic's Distillation Claims: IP Theft and Cybersecurity Risks for UK AI Firms

Anthropic identified industrial-scale theft of Claude's capabilities via 16 million fake queries. Here's what it means for UK firms building on proprietary AI.

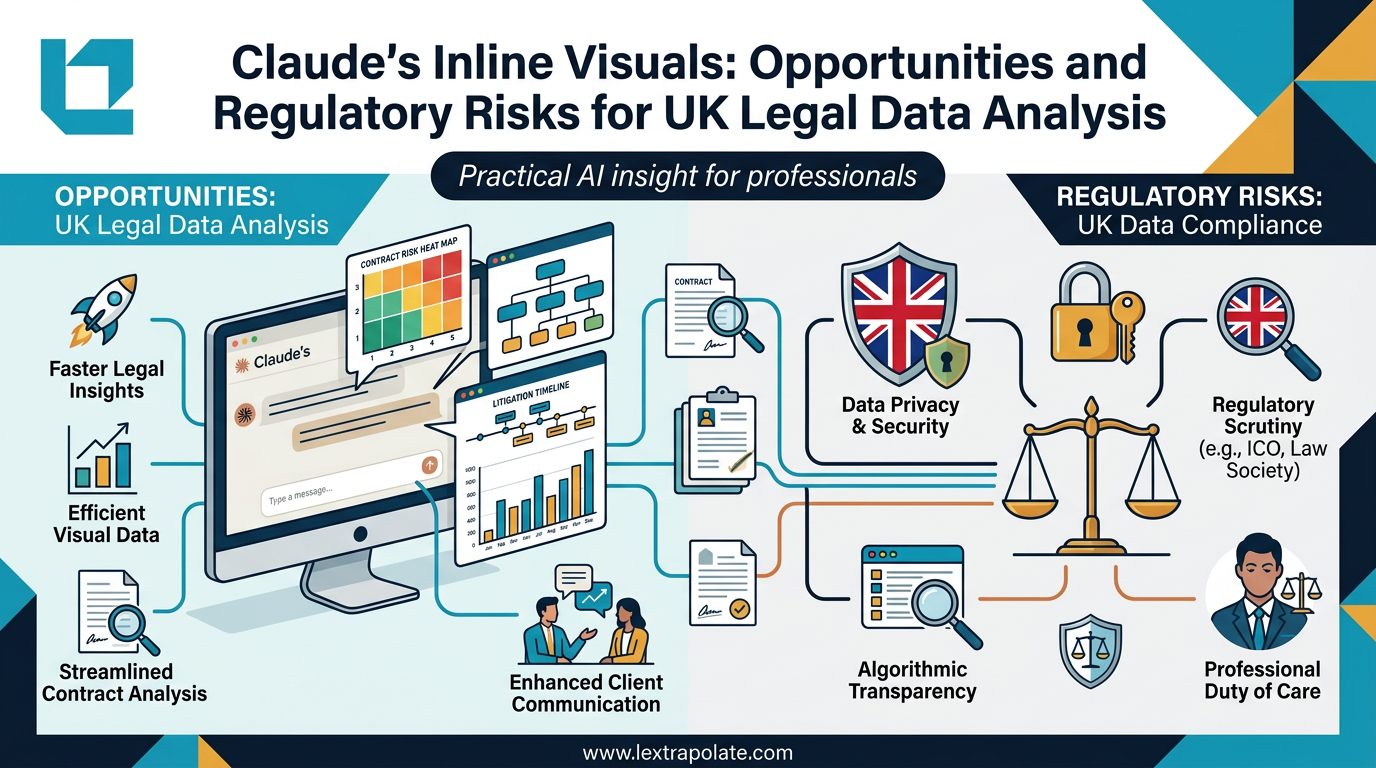

AI-Generated Visuals in Legal Work: Useful Shortcut or Regulatory Trap?

AI can now turn data into interactive charts mid-conversation. For lawyers, that's useful. It also raises questions about transparency, data protection, and professional responsibility.

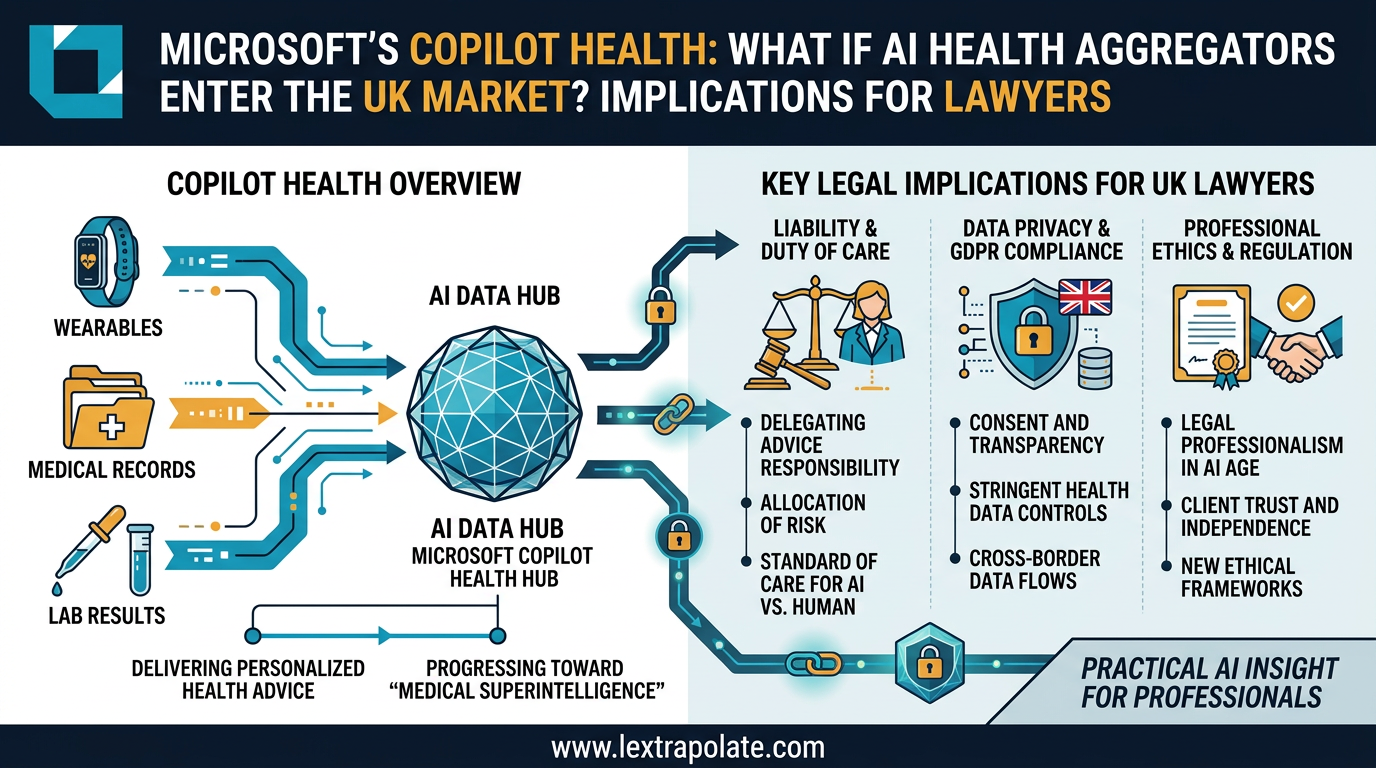

When AI Knows Your Health Better Than Your GP: What Multi-Modal Data Aggregation Means for Lawyers

Microsoft's AI health assistant reveals something bigger than healthcare tech: AI that synthesises complex multi-modal data is coming for legal practice too.