What If a Deepfake Became Your Evidence? The Verification Problem Professionals Can't Ignore

In 2026, the integrity of a UK evidence bundle is no longer a given. Imagine you are three weeks into a commercial dispute in the Rolls Building, and your client's position rests on a video recording that opposing counsel suddenly flags as a deepfake.

That scenario is not far-fetched. It is, in fact, becoming routine.

When conflict breaks out anywhere in the world, AI-generated images and video flood social media within hours. Some are old footage recycled from unrelated events. Some are synthetic, generated from scratch by diffusion models. Some are clips taken from video games like War Thunder, stripped of context and passed off as documentary evidence of real events. Journalists trained in verification know to check. Most other professionals do not.

This matters for lawyers, investigators, compliance officers, and anyone whose work depends on the integrity of digital material.

The Scale of the Problem in 2026

Deepfake technology has matured faster than most professionals expected. The computational cost of generating convincing synthetic video has collapsed. Tools that required specialist knowledge two years ago are now accessible to anyone with a mid-range laptop and an afternoon to spare.

Detection has improved too, but the gap between generation and detection remains uncomfortably wide. AI forensic analysis can identify pixel-level artefacts, unnatural blinking patterns, inconsistent lighting across facial geometry, and metadata anomalies that betray synthetic origin. Multi-modal analysis, checking whether audio and visual tracks share a consistent provenance, adds another layer. Liveness detection, originally developed for biometric authentication, is being repurposed for video verification. Tools such as Intel's FakeCatcher and the suite reviewed by CloudSEK offer benchmark-tested detection rates, but none achieve certainty, and sophisticated adversarial generation can defeat them.

PwC's 2026 fraud analysis identifies synthetic identity fraud as one of the fastest-growing threat vectors facing financial institutions. The same underlying technology that generates fake identities for account fraud generates fake evidence for professional deception. The attack surface is identical.

Why Professionals Are Exposed

Most professional workflows were not designed with synthetic media in mind. A document management system that ingests a PDF applies version control and audit trails. The same system ingesting a video file typically does nothing equivalent. There is no standard provenance check. There is no hash verification against an original. There is no mandatory metadata review.
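
As an illustration of what a hash check on intake could look like, here is a minimal Python sketch. It assumes a SHA-256 digest was recorded when the file was first received; the function names are hypothetical and do not come from any particular document management system.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        # Read in 1 MiB chunks so large video files do not fill memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_recorded_hash(path: Path, recorded_hex: str) -> bool:
    """True if the file's current hash equals the hash recorded at intake."""
    return sha256_of_file(path) == recorded_hex.strip().lower()
```

A matching hash shows only that the file has not changed since the hash was recorded. It says nothing about whether the material was authentic in the first place.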

Legal practitioners are particularly exposed because the rules of evidence have not kept pace. Under the Civil Evidence Act 1995, documents are broadly admissible, with weight left to the tribunal. Video evidence sits within that framework. There is no statutory requirement to authenticate digital media before relying on it, and the courts have not yet developed consistent practice on how challenges to synthetic media should be handled.

The Online Safety Act 2023 imposes duties on platforms in respect of illegal content and content harmful to children, and creates a false communications offence, but that regime addresses distribution, not the downstream professional reliance on material that has already spread. The Fraud Act 2006 reaches those who create and deploy synthetic media to deceive, but it does not help the professional who has already been deceived and is now presenting tainted material in good faith.

Defamation practitioners will see the exposure most clearly. A deepfake of a named individual making damaging statements is actionable under the Defamation Act 2013, but only once the synthetic origin is identified. If a claimant's solicitor relies on that same footage as evidence of context or background, without verification, the professional consequences sit alongside the legal ones.

What Newsrooms Do That Professionals Should Copy

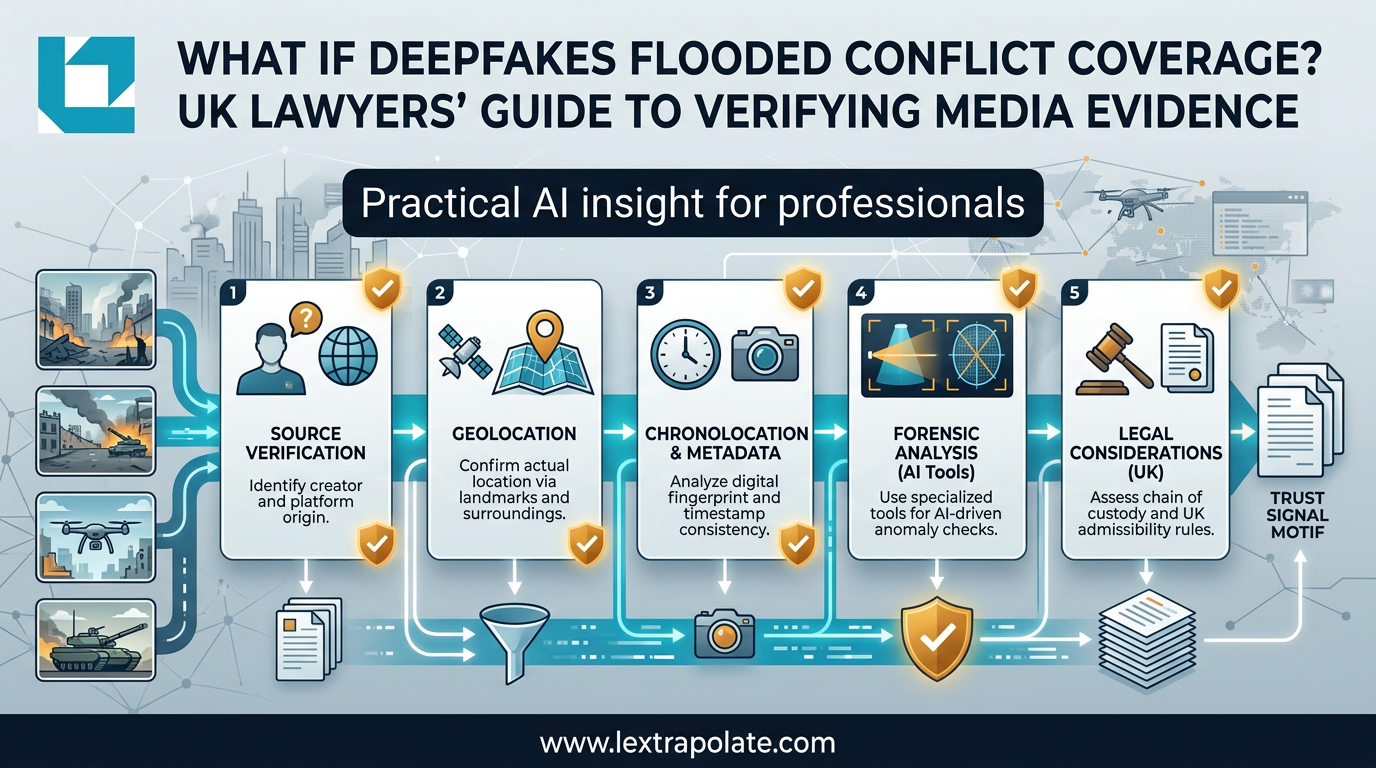

Investigative desks at serious news organisations have developed verification protocols that translate directly to professional practice. They are not technically demanding. They require discipline, not expertise.

Reverse image and video search. Before relying on any image or short video clip, run it through Google's reverse image search (Google Lens), TinEye, and the InVID-WeVerify plugin. These tools check for prior appearances of the material online and flag recycled footage. This takes two minutes. It catches a significant proportion of circulating fakes because most bad actors reuse existing material rather than generate fresh content.

Metadata inspection. Every digital file carries metadata. Photographs carry EXIF data including camera model, GPS coordinates, and timestamp. Video files carry container metadata. Discrepancies between claimed provenance and embedded metadata are a red flag. Tools such as ExifTool are free and require no specialist knowledge. If the metadata has been stripped entirely, that is itself a signal worth noting.
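
For those comfortable with a little scripting, the comparison between claimed provenance and embedded metadata might be sketched as follows. This is an illustration under assumptions: it assumes ExifTool is installed and on the PATH, the function names are hypothetical, and the fields checked (`DateTimeOriginal`, `CreateDate`, `Model`) are common ExifTool tag names that will not be present in every file type.

```python
import json
import subprocess
from datetime import date, datetime


def read_metadata(path: str) -> dict:
    """Read metadata via the ExifTool CLI (assumes `exiftool` is on PATH)."""
    result = subprocess.run(
        ["exiftool", "-json", path],
        capture_output=True, text=True, check=True,
    )
    return json.loads(result.stdout)[0]  # ExifTool emits one object per file


def metadata_red_flags(metadata: dict, claimed_date: date,
                       claimed_device: str) -> list[str]:
    """Compare embedded metadata with the provenance the supplier claims."""
    flags = []
    recorded = metadata.get("DateTimeOriginal") or metadata.get("CreateDate")
    model = metadata.get("Model")
    if recorded is None and model is None:
        return ["no capture metadata present; it may have been stripped"]
    if recorded is not None:
        try:
            # ExifTool's default date layout is "YYYY:MM:DD HH:MM:SS".
            taken = datetime.strptime(str(recorded)[:19],
                                      "%Y:%m:%d %H:%M:%S").date()
        except ValueError:
            flags.append(f"unreadable capture date: {recorded!r}")
        else:
            if taken != claimed_date:
                flags.append(
                    f"capture date {taken} differs from claimed {claimed_date}")
    if model is not None and claimed_device.lower() not in str(model).lower():
        flags.append(f"device {model!r} differs from claimed {claimed_device!r}")
    return flags
```

The date comparison here ignores time zones, so a capture close to midnight may be flagged wrongly. An empty list means only that these particular fields are consistent with the claim; metadata can be edited, so consistency is not proof of authenticity.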

Geolocation verification. If a video claims to show a specific location, check it. Google Earth, Google Street View, and specialist resources like Bellingcat's geolocation guides allow any professional to verify whether the background, architecture, vegetation, and topography are consistent with the claimed location. This is particularly relevant in contentious disputes where location matters.

Source chain analysis. Where did the material first appear? Who uploaded it? What is the account history? A video that claims to document an event from three years ago but was uploaded by an account created last week warrants scrutiny. This is not sophisticated; it is methodical.
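
The account-age check described above can be written down as a simple rule. The sketch below is illustrative only: the function name is hypothetical, and the thresholds (30 days for a "new" account, a year for a "late" upload) are assumptions to be tuned to the matter at hand, not established standards.

```python
from datetime import date, timedelta


def source_chain_flags(
    event_date: date,
    upload_date: date,
    account_created: date,
    min_account_age: timedelta = timedelta(days=30),    # assumed threshold
    max_upload_delay: timedelta = timedelta(days=365),  # assumed threshold
) -> list[str]:
    """Flag timeline features of an upload that warrant closer scrutiny."""
    flags = []
    if upload_date < event_date:
        flags.append("uploaded before the claimed event date")
    if upload_date - account_created < min_account_age:
        flags.append("account was newly created at the time of upload")
    if upload_date - event_date > max_upload_delay:
        flags.append("first upload came long after the claimed event")
    return flags
```

A flag is a prompt to ask further questions, not a finding that the material is fake. Genuine footage does sometimes surface late, and from new accounts.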

AI detection tools as a secondary check. Tools such as those reviewed by UncovAI and EkasCloud offer detection of common deepfake artefacts. They are not infallible and should not be the first or only check. Use them to corroborate concerns raised by the steps above, not as a standalone verdict.

The Monday Morning Test

If you receive digital media that you intend to rely on professionally, ask yourself four questions before proceeding.

First: do you know where this material originated, and can you trace it back to a primary source? If the answer is no, find out before relying on it.

Second: have you checked the metadata? If it has been stripped or is inconsistent with the claimed provenance, flag it.

Third: have you run a reverse search to check whether the material has appeared elsewhere in a different context? If not, do it now.

Fourth: if the material shows a person doing or saying something material to your work, have you considered whether it could be synthetic? Deepfake detection tools are imperfect, but a quick run through a detection tool costs minutes. Not running one when the stakes are high is a decision worth being able to justify.
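
For teams that want a record of these four questions on the file, one possible shape is a small checklist object. This is a sketch, not a prescribed workflow; the class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MediaChecks:
    """Records which of the four checks have been completed for one item."""
    origin_traced: bool = False
    metadata_reviewed: bool = False
    reverse_search_done: bool = False
    synthetic_origin_considered: bool = False

    def outstanding(self) -> list[str]:
        """Return the checks still to be done, in the order asked above."""
        labels = {
            "origin_traced": "trace the material to a primary source",
            "metadata_reviewed": "review the metadata",
            "reverse_search_done": "run a reverse search",
            "synthetic_origin_considered": "consider synthetic origin",
        }
        return [text for name, text in labels.items()
                if not getattr(self, name)]
```

For example, `MediaChecks(origin_traced=True).outstanding()` lists the three remaining steps, which can be noted on the file before the material is relied on.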

None of this requires a forensic expert. It requires the same scepticism a good journalist applies before publication, and the same professional discipline a good lawyer applies before pleading a fact.

The volume of synthetic media in circulation will not decrease. The tools to generate it will not become harder to access. The professional obligation to verify the material on which you rely is not new, but the way you discharge it must be.

Treat unverified digital media the way you would treat an unsigned, undated document from an unknown source. You would not rely on that without checking it. The fact that it moves and has a soundtrack should not lower your guard. It should raise it.

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

What If AI Transforms Hiring Overnight? UK Legal and Ethical Risks as Automated Interviews Scale

AI avatars are conducting job interviews at scale. UK employers using these tools face real legal exposure they may not have mapped yet.

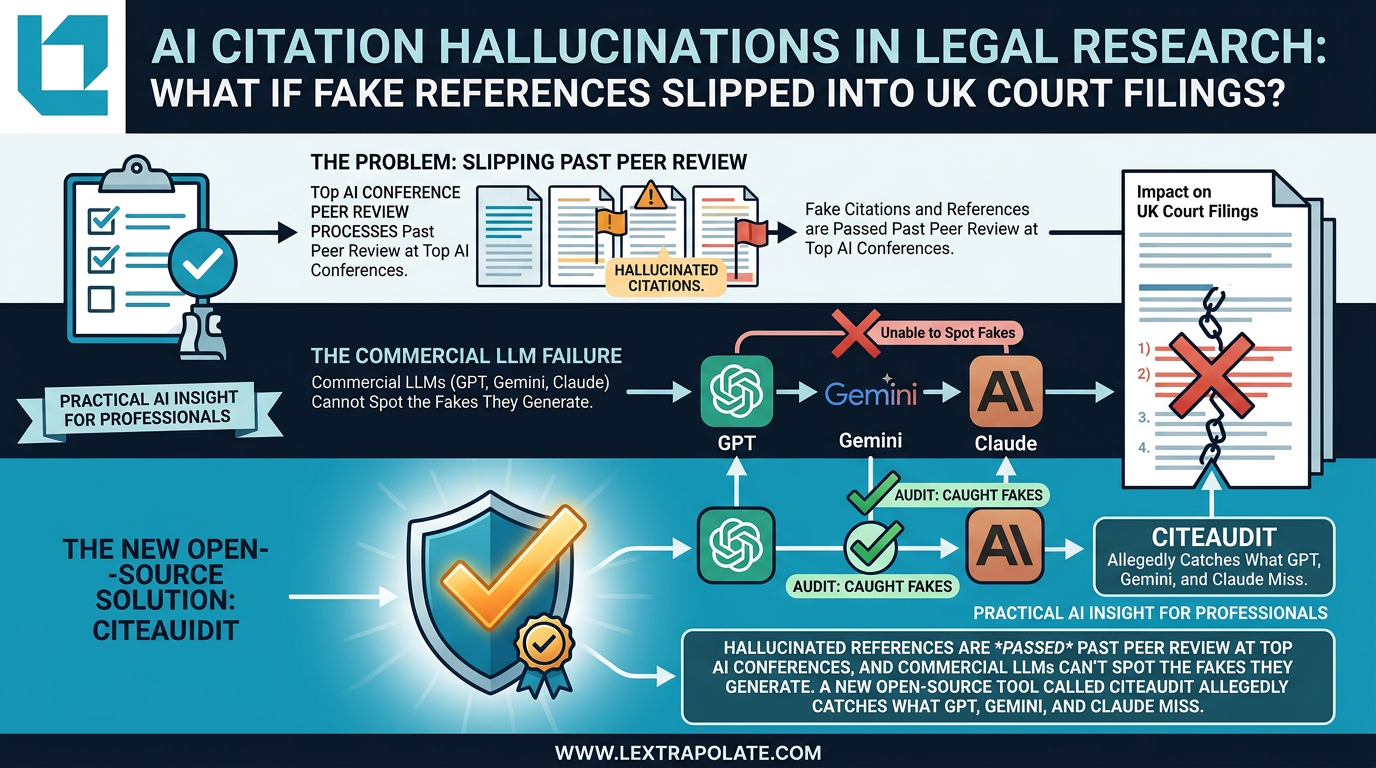

AI Citation Hallucinations in Legal Research: The Verification Problem Nobody Has Solved Yet

Fake citations are slipping past peer review at AI conferences. If that's happening in academia, what's the risk in legal practice?

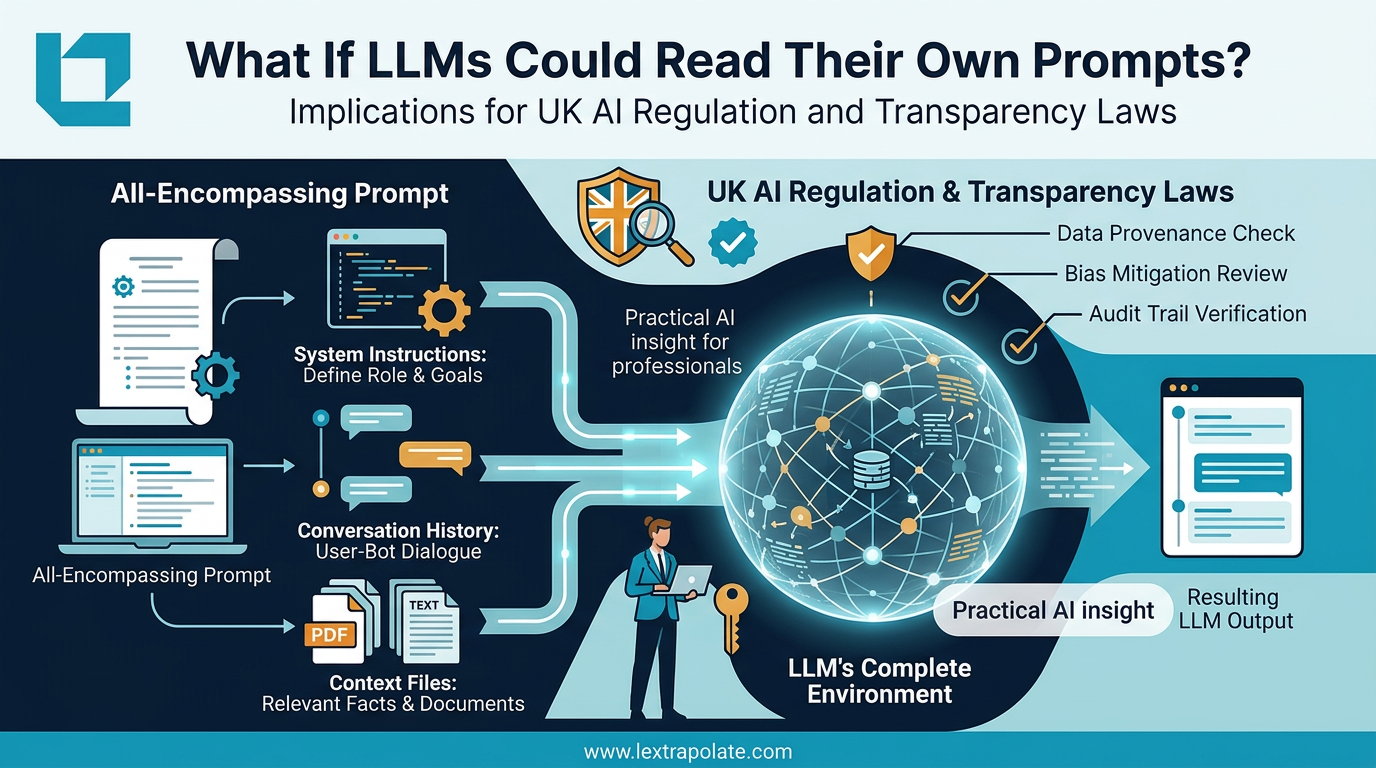

What if the invisible context window is the most important thing you cannot see?

Understanding what the AI actually sees when you prompt it is the difference between controlled output and expensive guesswork.