When Inference Costs Collapse: What Cheap AI Processing Means for Legal Workflows

Imagine your firm could analyse ten times the documents for the same cost. Would you jump at the chance, or worry about hidden risks? That scenario is fast becoming reality. MiniMax claims its new M2.5 models cut inference costs by as much as 95%, with performance rivalling Claude Opus 4.6 (a near-enough state-of-the-art model) on some tasks. Chris Jeyes, a practising barrister and founder of Lextrapolate, unpacks what this means for UK law firms.

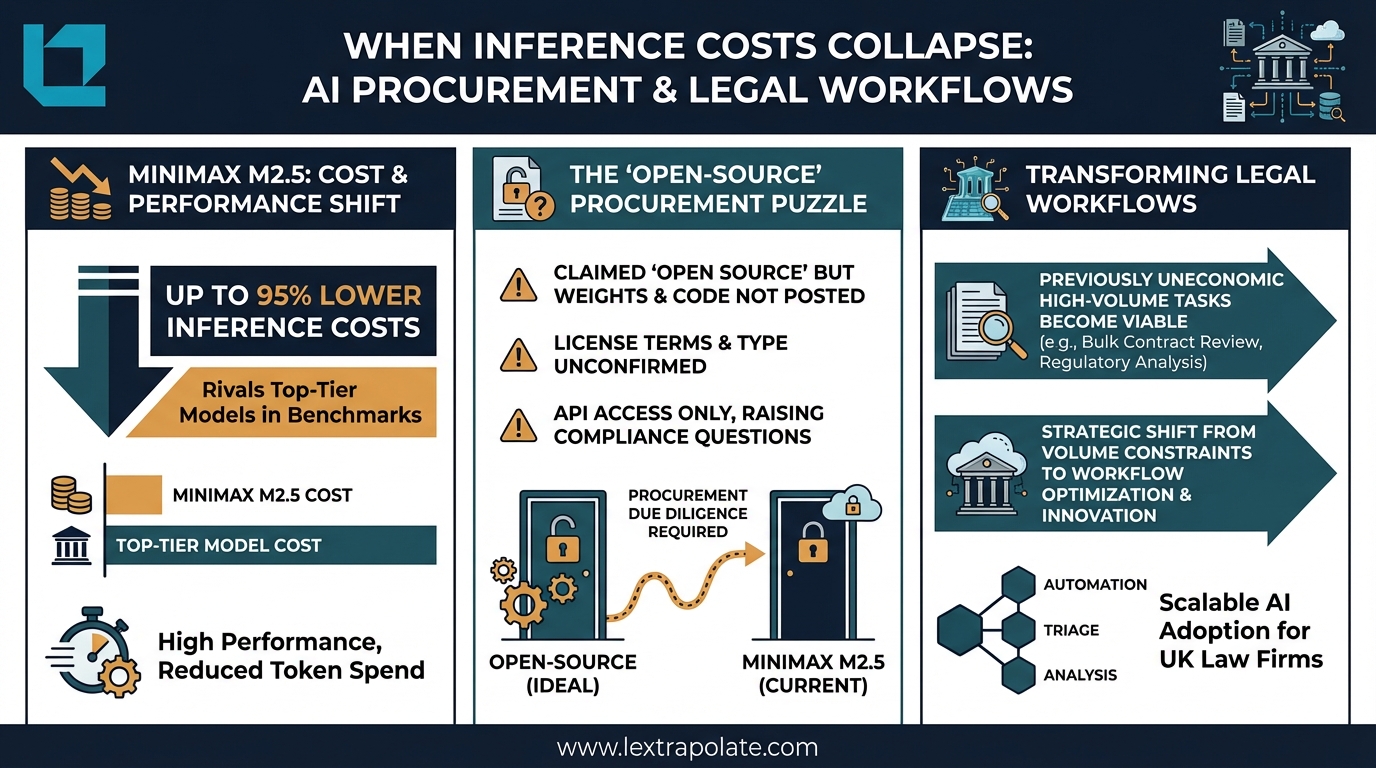

That question is becoming less hypothetical. The trend in AI inference pricing is consistently downward, and the latest models emerging from competitive development cycles are accelerating it. One data point worth examining: MiniMax released its M2.5 and M2.5-Lightning models in February 2026, claiming inference costs reduced by as much as 95% against comparable top-tier models, with benchmark performance said to rival Claude Opus 4.6 on certain tasks. Those are striking numbers. Whether the claims hold up under independent scrutiny is a separate question, and one that matters considerably before any procurement decision.

The broader story, though, is not about MiniMax specifically. It is about what happens to legal workflows when AI processing gets cheap enough that volume stops being the constraint.

The Economics Are Changing Faster Than Most Firms Have Noticed

Legal AI has historically been priced in ways that made high-volume use prohibitive for all but the largest firms. Processing thousands of contracts or disclosure documents through a frontier model carried real per-token costs that firms either absorbed into client billing or avoided by restricting use to lower-volume, higher-value tasks.

That calculus is shifting. The current generation of competitive models is driving inference costs down through a combination of architectural efficiency and straightforward competitive pressure between providers. When one credible model undercuts the market significantly, others follow or explain why they should not. For firms that have built workflows around constrained AI budgets, this creates options they may not have planned for.

The practical effect for legal work is significant. Automated first-pass contract review, due diligence triage, disclosure analysis, bulk regulatory document processing: these are all token-intensive tasks. A 90% reduction in per-token cost, the level at which the same budget covers ten times the volume, does not just make existing workflows cheaper. It makes previously uneconomic workflows viable.
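The arithmetic behind that point can be sketched in a few lines. This is a rough illustration under stated assumptions, not vendor pricing: the function names, the budget, the price per million tokens, and the average document length are all hypothetical figures chosen to show the shape of the calculation.

```python
def volume_multiplier(reduction_percent):
    """How much more volume the same budget buys after a price cut.

    reduction_percent is the cut in per-token price, e.g. 90 for 90%.
    """
    if not 0 <= reduction_percent < 100:
        raise ValueError("reduction_percent must be between 0 and 100 (exclusive)")
    return 100 / (100 - reduction_percent)


def documents_per_budget(budget, price_per_million_tokens, tokens_per_document):
    """Whole documents a fixed budget covers at a given per-token price.

    All three inputs are illustrative assumptions, not real pricing.
    """
    total_token_cost = price_per_million_tokens * tokens_per_document
    return int(budget * 1_000_000 // total_token_cost)


if __name__ == "__main__":
    print(volume_multiplier(90))  # 10.0
    print(volume_multiplier(95))  # 20.0
    # Hypothetical: 1,000 budget, 20,000-token documents, before and after a 90% cut.
    print(documents_per_budget(1000, 15, 20_000))
    print(documents_per_budget(1000, 1.5, 20_000))
```

The multiplier grows quickly as the reduction approaches 100%: a 90% cut gives ten times the volume, while the 95% figure claimed for M2.5 would give twenty times, if it holds up.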

What 'Open Source' Actually Means in a Procurement Context

Here is where caution is warranted. MiniMax's M2.5 has been described in third-party coverage as open source, and the model is accessible via Ollama's distribution platform, which typically hosts models with publicly available weights. However, as of publication, MiniMax's own documentation does not confirm that weights have been publicly released or specify the exact licence terms. That matters.

The phrase "open source" carries specific legal meaning. It implies a published licence, accessible weights or code, and rights to use, modify, and redistribute under defined conditions. Without those elements being documented, the description is at best premature and at worst misleading. We have not tested MiniMax M2.5 ourselves, and firms should not rely on third-party characterisations of its licence status. Request the actual licence terms directly from the vendor before making any deployment decision.

This is not pedantry. Under UK software law, the rights you have to use, fine-tune, or build derivative systems on a model depend entirely on what the licence grants you. If a firm trains a derivative model on M2.5 in the reasonable belief that it is freely licensed, and that turns out to be incorrect, the IP position of anything built on top of it becomes uncertain. That is a risk that costs nothing to avoid by asking the right questions upfront.

API Access, Data Processing, and the GDPR Overlay

The second procurement issue is less about the model and more about how you access it. There is a meaningful difference between running a model on your own infrastructure and sending data to a vendor's API. For UK law firms, that difference has a name: UK GDPR.

When legal documents, or anything containing personal data, pass through a third-party API, that third party becomes a data processor. You need a Data Processing Addendum. You need to satisfy yourself about where data is stored, for how long, and whether it is used for model training. These requirements apply regardless of how competitive the pricing is.

MiniMax is a Chinese-incorporated company. That does not automatically make it unusable, but it does require firms to do more homework than they would with a UK or European provider, and many (myself included) would not use it for legal work. The Information Commissioner's Office has published guidance on international transfers under the UK GDPR, and an appropriate transfer mechanism, such as the International Data Transfer Agreement or the UK Addendum to the EU standard contractual clauses, needs to be in place. If the vendor cannot provide adequate transfer safeguards, self-hosting the model on your own infrastructure is the appropriate alternative, which in turn brings you back to the question of whether the weights are actually available and under what terms.

None of this is novel. It is the same analysis any competent data protection solicitor would apply to any cloud AI service. The novelty is the pricing pressure, which creates a temptation to move quickly. Do not.

The Monday Morning Test

If you are evaluating whether collapsing inference costs should change anything about your firm's AI strategy, these are the questions worth putting on the table this week.

First, identify where volume is currently the constraint. Which workflows are you not running through AI because the per-document cost makes it uneconomic? Those are the tasks to revisit as pricing falls. Due diligence bundles, disclosure reviews, and large contract portfolios are the obvious candidates.

Second, audit your current API dependencies. Do you have signed DPAs with every AI provider your fee earners are using? Do you know where the data goes? If you are not certain, find out before adding new providers.
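One way to run that audit is as a simple register that flags gaps for each provider. The sketch below is illustrative only: the `Provider` fields, the `audit_gaps` helper, and the example entries are hypothetical, and a real register would follow the firm's own data protection policy.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Provider:
    name: str                          # hypothetical provider label
    dpa_signed: bool                   # is a signed DPA on file?
    data_location: Optional[str]       # where data is stored; None if unknown
    trains_on_inputs: Optional[bool]   # None if the vendor has not confirmed


def audit_gaps(provider: Provider) -> List[str]:
    """Return the open questions for one provider, in checklist order."""
    gaps = []
    if not provider.dpa_signed:
        gaps.append("no signed DPA")
    if provider.data_location is None:
        gaps.append("data location unknown")
    if provider.trains_on_inputs is None:
        gaps.append("training use unconfirmed")
    elif provider.trains_on_inputs:
        gaps.append("inputs used for training")
    return gaps


if __name__ == "__main__":
    # Hypothetical entries for illustration only.
    register = [
        Provider("Provider A", True, "UK", False),
        Provider("Provider B", False, None, None),
    ]
    for p in register:
        print(p.name, audit_gaps(p) or "no gaps found")
```

Treating "unknown" as its own state, rather than defaulting it to yes or no, keeps the register honest about what the firm has actually verified.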

Third, treat any "open source" claim as a question, not a fact, until you have seen the licence. Ask vendors specifically whether weights are published, what the licence permits, and whether commercial use requires separate terms. This takes one email and saves considerable difficulty later.

Finally, consider the strategic opportunity. If inference costs continue to fall, firms that have already built and tested high-volume AI workflows will have a material efficiency advantage over those still treating AI as a premium add-on. The investment is not in the per-query cost. It is in the time spent designing workflows that work at scale.

Scepticism Is the Right Default, But Inaction Has a Cost Too

The claims around the latest generation of cheap, high-performance models deserve scrutiny. Benchmarks published by vendors are not independent evaluations. Performance on coding tasks does not automatically translate to performance on legal document analysis. And a model that has not shipped its weights is not yet open source in any meaningful sense, whatever the press coverage says.

That said, the direction of travel is clear. Inference is getting cheaper. The gap between frontier and near-frontier model performance is narrowing. The speed of innovation is increasing. Firms that treat every new model release as noise risk missing the structural shift underneath the marketing.

The right response is not to adopt every new model that makes bold claims. It is to have the procurement process, the data protection framework, and the workflow thinking in place so that when a genuinely viable option arrives, the firm can move on it properly rather than scrambling to catch up.

That preparation costs very little. Doing it after the fact costs considerably more.

If you are working through AI procurement decisions at your firm and want a structured approach to vendor evaluation and workflow design, the team at Lextrapolate works with legal and professional services organisations on exactly these questions. Get in touch.

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

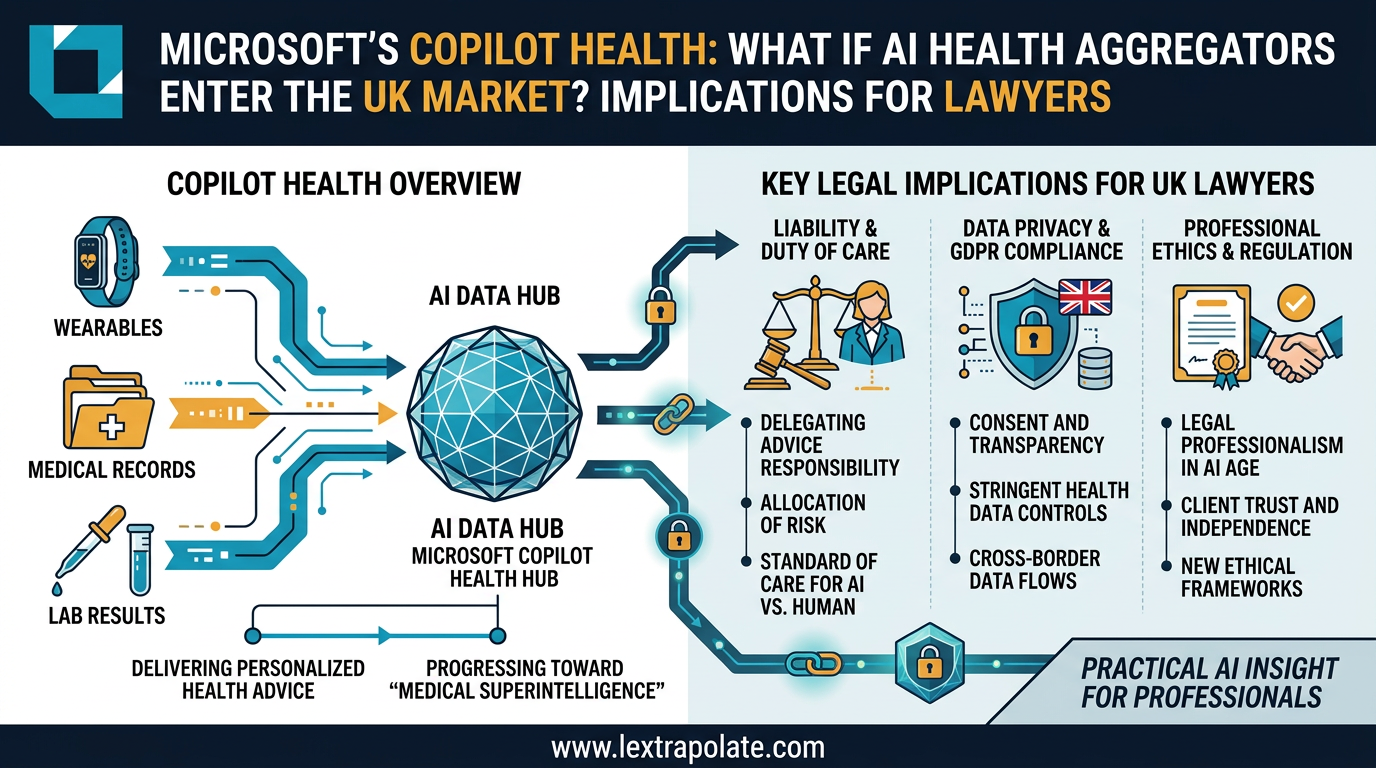

When AI Knows Your Health Better Than Your GP: What Multi-Modal Data Aggregation Means for Lawyers

Microsoft's AI health assistant reveals something bigger than healthcare tech: AI that synthesises complex multi-modal data is coming for legal practice too.

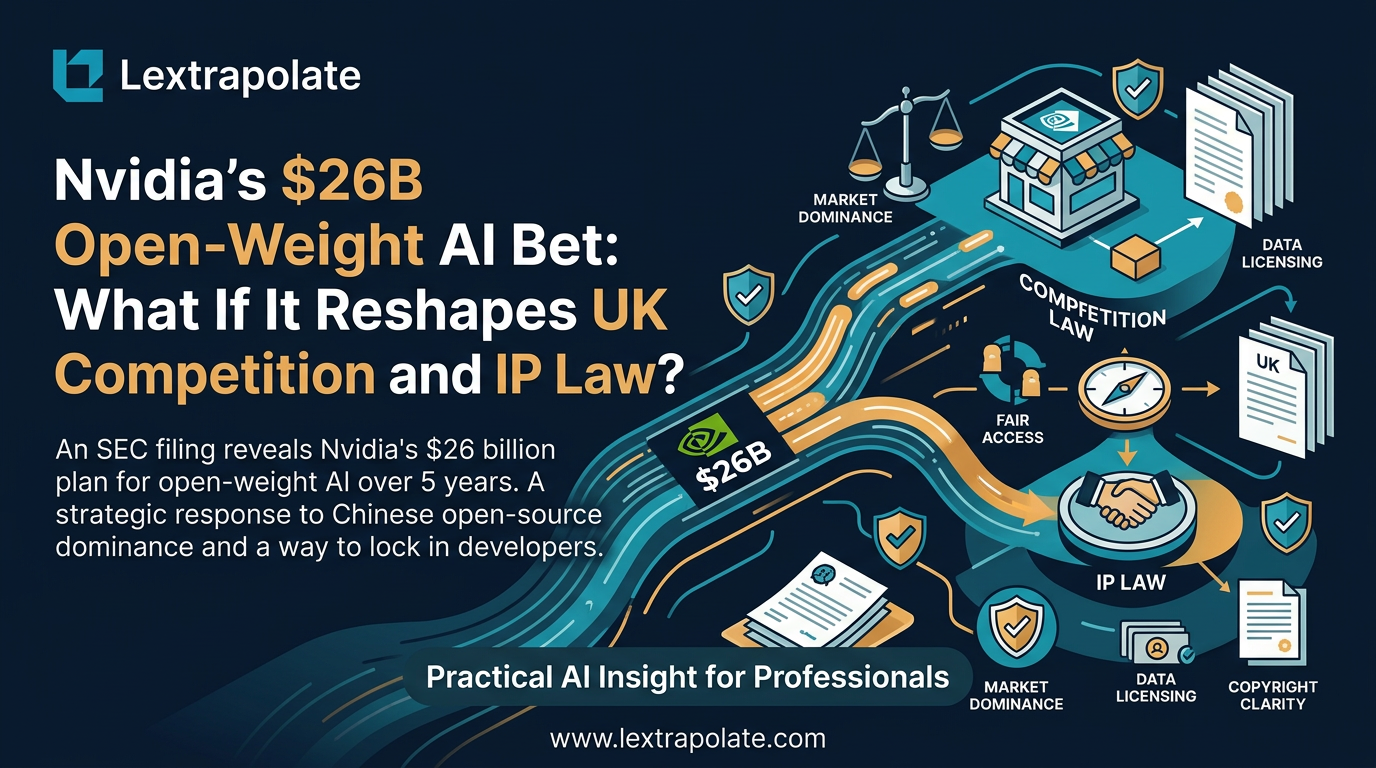

What if Nvidia Became the World's Biggest Open-Weight AI Supplier? What It Means for Law Firms Considering On-Premise AI

Nvidia pouring $26bn into open-weight AI models would reshape how law firms deploy private AI. Here's what that shift could mean in practice.

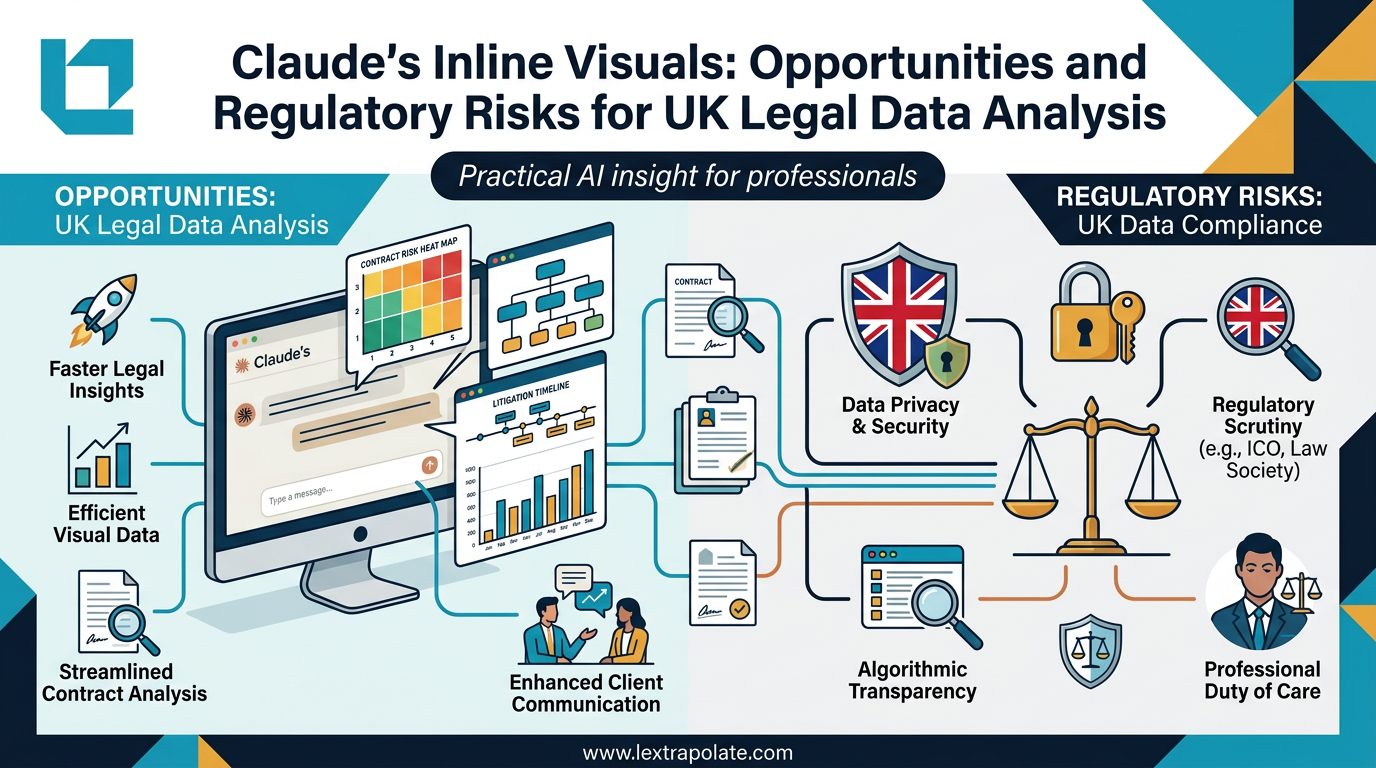

AI-Generated Visuals in Legal Work: Useful Shortcut or Regulatory Trap?

AI can now turn data into interactive charts mid-conversation. For lawyers, that's useful. It also raises questions about transparency, data protection, and professional responsibility.