Vos Warns of AI Claims Surge: UK Courts Must Adapt or Overload

What happens when sixty million people can draft a legal claim for free?

Sir Geoffrey Vos, the Master of the Rolls, answered that question last week. Speaking as part of the Justice for All series at the Old Bailey on 4 February, he warned that generative AI is about to flood the courts with claims. Not in some distant future. Now. As Joshua Rozenberg reported in the Law Gazette, the head of civil justice sees a system approaching a breaking point it has not prepared for.

His core observation is simple and, I think, correct: the bottleneck on litigation used to be cost. Lawyers were expensive. Many claims never materialised because people couldn't afford to bring them. AI has removed that bottleneck. As Vos put it:

"The first port of call used to be a lawyer if one was available and affordable. Now the first port of call is ChatGPT or CoPilot. Whatever answer generative AI gives, the would-be litigant in person can easily use it to transform a mass of documents and personal information into an arguable legal claim."

The word "arguable" is doing heavy lifting in that sentence. We'll come back to it.

The democratisation problem

Let me be clear: broader access to justice is a good thing. For decades, the cost of legal advice has locked out huge numbers of people with legitimate grievances. If AI enables someone with a genuine employment claim or housing dispute to articulate it properly, that is progress.

But Vos is right to flag the systemic consequence. The court system was not designed for this volume. The current civil court structure dates, as he noted, from legislation passed 150 years ago. It was built to serve a much narrower class of litigant. Providing justice for the wealthy members of Victorian society is a different proposition entirely from providing it for 60 million people from hugely diverse backgrounds.

The maths is stark. If AI cuts the cost and effort of preparing a claim by even 30 or 40 percent, more people will file, and even a modest rise in filings could overwhelm a system already running at capacity. County courts are stretched. Employment tribunals have backlogs measured in years. Family courts are in crisis. Add a wave of AI-generated claims, many of which will be poorly founded, and you have a serious problem.

This is the tension at the heart of the speech: AI democratises access, but the infrastructure to process that access doesn't exist yet.

"Arguable" is not the same as "meritorious"

Here's where practitioners need to pay attention.

Generative AI is remarkably good at producing documents that look professional. It can structure a claim, cite (sometimes real) authorities, and present facts in a coherent narrative. What it cannot reliably do is exercise judgement about whether a claim has merit. It doesn't understand the difference between a grievance and a cause of action. It doesn't know when facts, however unfair they feel, don't give rise to a legal remedy.

The result will be a surge in claims that are superficially competent but substantively weak. That has direct implications under the Civil Procedure Rules. Judges already have case management powers to strike out a statement of case that discloses no reasonable grounds for bringing the claim under CPR 3.4, and to grant summary judgment under Part 24 where a claim has no real prospect of success. But those mechanisms require judicial time. Each application must be read, considered, and determined. If the volume of weak claims doubles, the time spent filtering them doubles too.

There is a real question about whether the CPR framework needs reform to deal with AI-generated claims specifically. Should there be a requirement to disclose AI use in the preparation of court documents? Should there be an enhanced certification requirement for litigants in person, analogous to the statement of truth, confirming they have reviewed and understood the claim rather than simply submitting AI output? These are not hypothetical questions. They need answers soon.

The Article 6 question

Vos went further than diagnosis. He suggested that AI could be "able to provide, or assist in, the provision of justice outcomes, whether civil or criminal, in a tiny fraction of the time that lawyers, judges and human actors require to do so."

This is where it gets constitutionally interesting.

Article 6 of the European Convention on Human Rights guarantees the right to a fair hearing by an independent and impartial tribunal established by law. The word "tribunal" has, until now, been assumed to mean a human decision maker. If AI begins to assist in, or partially determine, justice outcomes, the compatibility of that process with Article 6 becomes a live issue.

Vos acknowledged this directly: "Our society needs to consider now, and urgently, what humans need to continue to be doing to preserve the fundamentals of justice for humans in that machine age."

The EU AI Act, particularly Article 14, requires meaningful human oversight of high-risk AI systems. Judicial decision making is about as high-risk as it gets. While the UK is not bound by the EU AI Act post-Brexit, the principles it enshrines will inevitably influence domestic regulation and, critically, any challenges brought under the Human Rights Act 1998. A litigant who loses a case where AI played a significant role in the determination has an obvious line of attack.

For litigators, this means we need to be thinking about AI not just as a tool we use, but as a feature of the system we operate within. If courts adopt AI-assisted triage or case management, understanding how those systems work becomes part of effective advocacy.

What does this mean on Monday morning?

Three things.

First, prepare for opponents who used AI to draft their case. This is already happening, but it will accelerate. Claims from litigants in person will increasingly arrive well-formatted, legally structured, and sometimes factually creative. Do not assume that a polished document reflects a strong case. Scrutinise the underlying facts and legal reasoning with the same rigour you always would. More so, in fact, because AI-generated claims can be internally consistent while being entirely disconnected from reality.

Second, consider your own AI disclosure obligations. The direction of travel is clear. Courts will increasingly want to know whether AI was used in the preparation of documents. Get ahead of this. Develop a firm-wide policy on AI use and disclosure. If you are using AI to draft, summarise, or research, document it. Transparency now will protect you later.

Third, watch the procedural reforms. Vos is not just making observations. He is the head of civil justice. When the Master of the Rolls signals a problem of this magnitude, rule changes follow. Expect consultations on AI-related amendments to the CPR, practice directions on AI-generated documents, and possibly new protocols for managing the anticipated increase in volume. If your practice involves civil litigation, you need to be engaged with these consultations when they come. The rules that emerge will shape your practice for a decade.

The bigger picture

Vos is asking the right question at the right time. The instinct to resist change is understandable. The instinct to embrace it uncritically is dangerous. What we need is structured adaptation: courts that can handle increased volume without sacrificing quality, procedural rules that account for AI-generated claims without creating barriers to access, and a clear constitutional framework for the role of AI in judicial decision making.

The alternative is a system that buckles under the weight of claims it cannot process, where backlogs grow, where justice delayed becomes justice denied, and where the promise of AI-enabled access to justice turns into a cruel irony.

If you are a litigator, this is not a future problem. Vos is telling you it is a present one. Act accordingly.

I work with law firms and chambers on exactly these questions: how to integrate AI responsibly, how to prepare for regulatory change, and how to turn disruption into advantage. If this speech has you thinking about what your practice needs to do differently, get in touch.

Sources

- Courts must prepare for a surge in AI-generated claims - Master of the Rolls

- Speech by The Master of the Rolls: Justice for all, justice for the accused

- Master of rolls predicts surge in AI-generated claims | Law Gazette

- Master of the Rolls speech addresses AI integration in civil & criminal justice systems

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

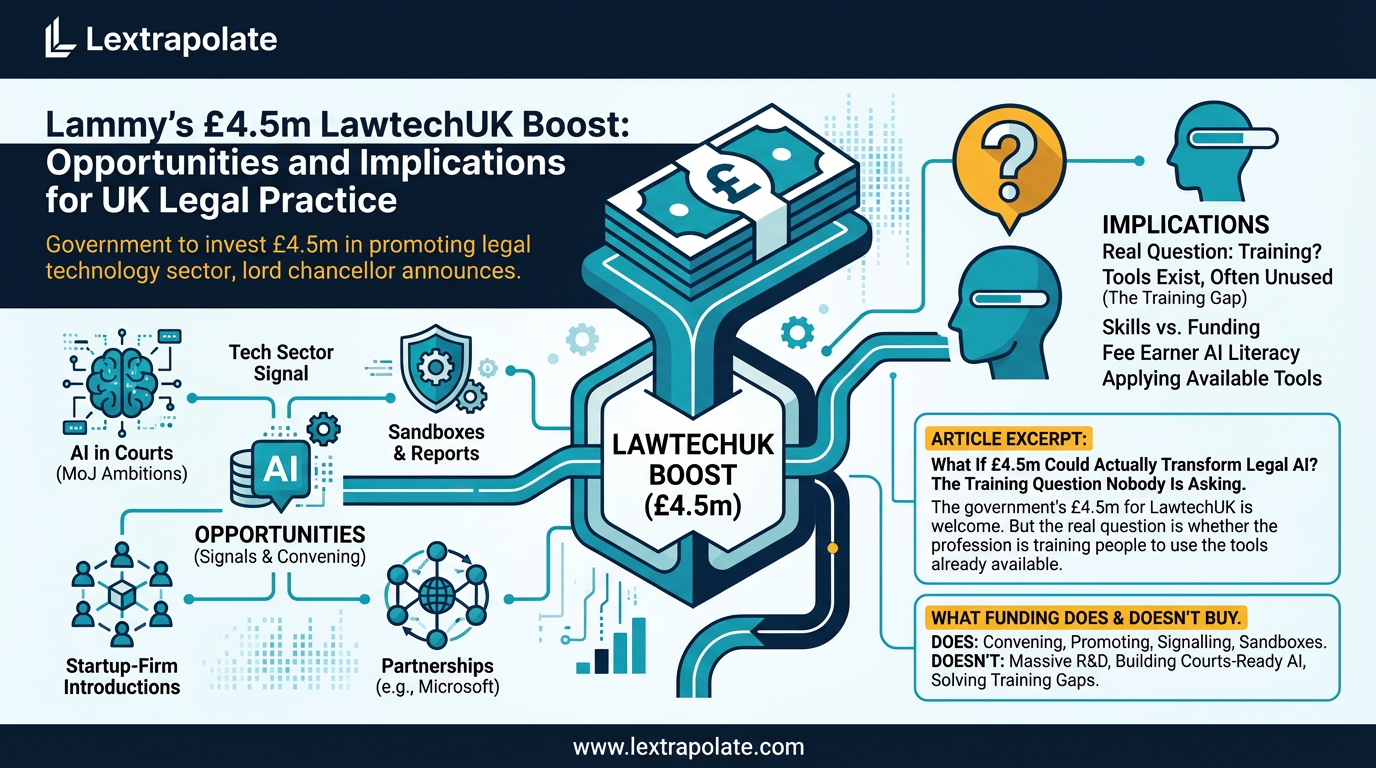

What If £4.5m Could Actually Transform Legal AI? The Training Question Nobody Is Asking

The government's £4.5m for LawtechUK is welcome. But the real question is whether the profession is training people to use the tools already available.

Zach + Richard's Legal AI Insights: What UK Lawyers Need to Know About Anthropic's Legal Push and AI 2.0

Abramowitz and Tromans dissect Anthropic's legal market entry and what AI 2.0 means for UK lawyers. Practical takeaways from their latest Legal AI Adventure.

xAI-SpaceX Merger Exodus: What Departures Mean for UK Competition and Tech Regulation

Co-founders fleeing the $1.25 trillion xAI-SpaceX merger should worry regulators and enterprise buyers alike. The governance questions are serious.