Your AI Is Working Hard. Your Firm Is Not: The Case for Institutional AI in Law

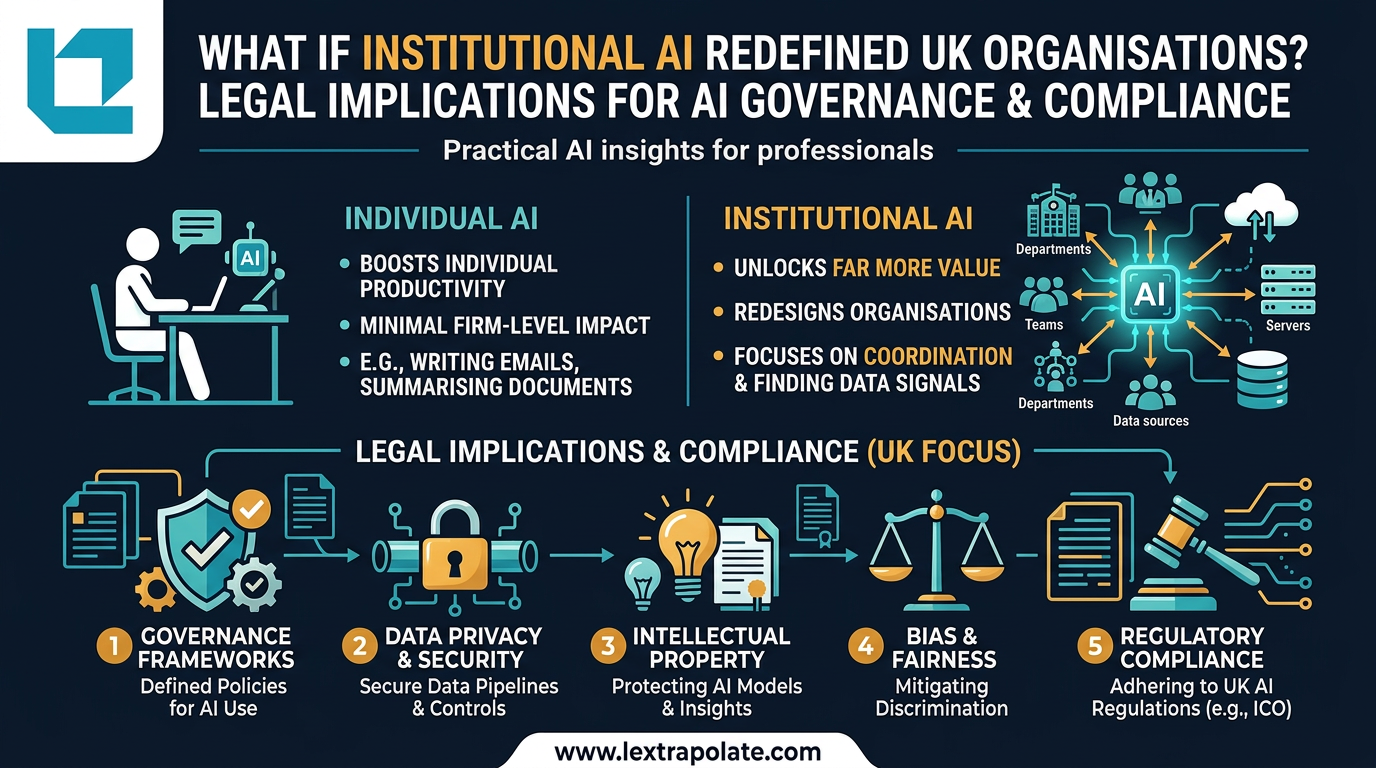

Institutional AI means using artificial intelligence to redesign how an organisation coordinates work and makes decisions, rather than simply handing individuals productivity tools. George Sivulka of a16z argues that this shift is the only way to capture enterprise value. Yet most UK law firms remain stuck in the individual AI phase: making solicitors faster without changing the underlying business model.

That is the problem George Sivulka, a partner at a16z and founder of enterprise AI company Hebbia, sets out in a March 2026 essay distinguishing individual AI from institutional AI. His thesis, which I want to test against the realities of legal practice, is this: individual AI raises personal productivity but leaves firm value largely untouched. Institutional AI, by contrast, redesigns how organisations coordinate, process information, and make decisions. Firms that pursue only the former will find that competitors who pursue the latter see their efficiency gains compound, not just individually but structurally.

I should be clear upfront: Sivulka's essay is vendor-linked analysis, written by someone with a commercial interest in enterprise AI adoption. The productivity claims (AI making individuals "10x more productive") are rhetorical rather than empirically grounded. No independent research has verified his seven-pillar framework. Treat the essay as a provocation, not a finding. But the underlying distinction between individual and institutional AI is worth taking seriously, particularly for law firms trying to work out where their AI investment is actually going.

What Institutional AI Actually Means for a Law Firm

Sivulka's framework identifies coordination as one of institutional AI's primary value levers. In a law firm context, this translates directly. A firm's knowledge does not sit in one place. It lives in the heads of partners, in matter files across multiple systems, in correspondence buried in email threads, in precedents that were last reviewed when a different government was in power. Individual AI cannot fix that. A lawyer with a good AI assistant can work faster on their own matter. They cannot easily draw on what their colleague learned in a related transaction six months ago.

Institutional AI, as a concept, asks a different question: what would need to change about how the firm is structured for AI to create value at the organisational level? That might mean building systems that extract and tag knowledge from completed matters so it becomes searchable and usable. It might mean AI agents that flag when a new instruction resembles a pattern seen in previous disputes. It might mean automated coordination between departments on complex transactions rather than relying on weekly calls between fee-earners who are each operating their own AI tools in isolation.

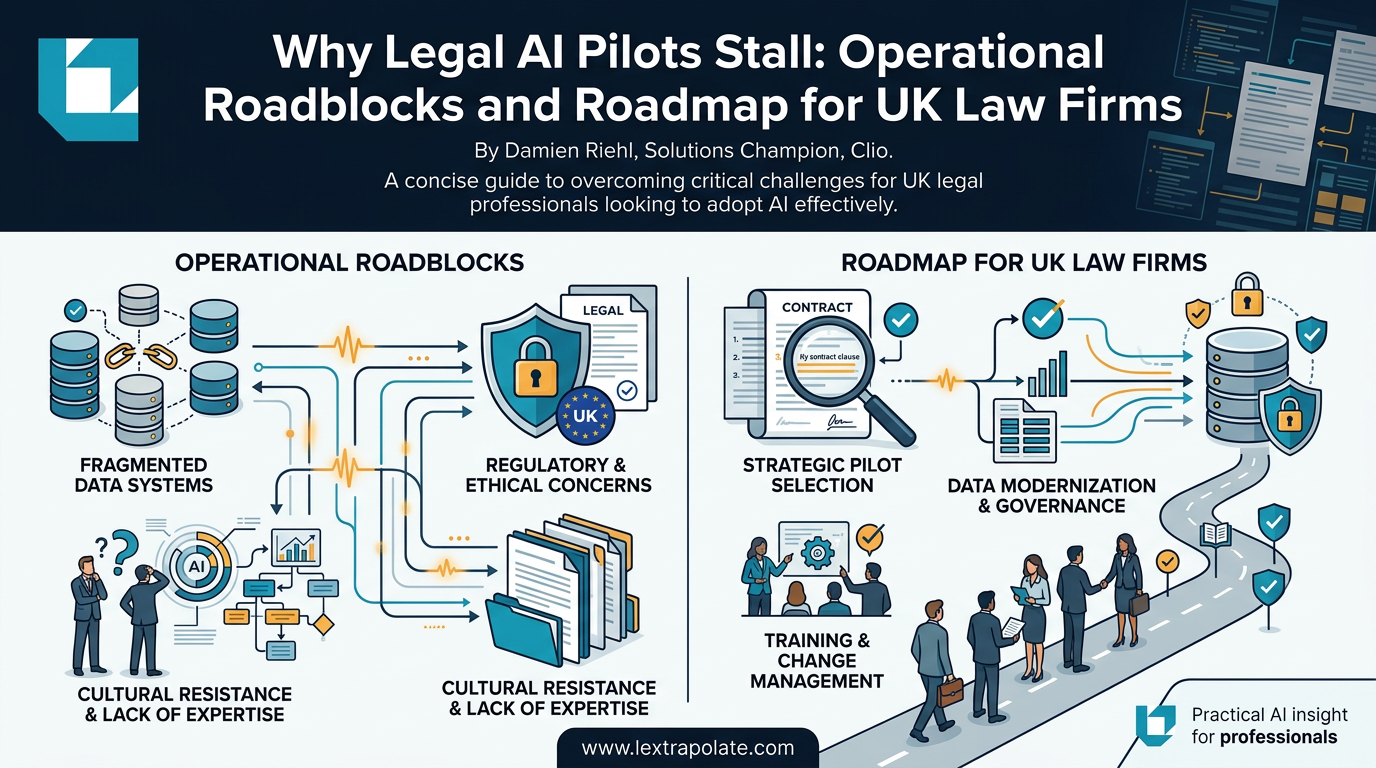

None of that is fanciful. The technology to do most of it exists. What does not yet exist, in most firms, is the operational infrastructure to deploy it, and the willingness to redesign processes rather than simply accelerate existing ones.

The Electricity Analogy and Why It Cuts Both Ways

Sivulka invokes the shift from steam to electric power in industrial manufacturing as an analogy for the institutional AI transition. It is a useful comparison, but it requires care in the legal context.

When factories electrified, the initial gains were modest precisely because factory layouts had been designed around steam-powered central shafts. The transformative productivity gains only came once manufacturers redesigned their assembly lines from scratch to exploit the properties of electric motors. The point is that the technology alone was insufficient. Organisational redesign was what unlocked the value.

Applied to law firms, this suggests that deploying AI tools into existing workflows, without questioning whether those workflows are correctly designed, will only ever produce incremental gains. A due diligence process built around junior associates reviewing documents sequentially does not become fundamentally more efficient just because each associate now has an AI tool. The process itself may need to change: different role allocation, different review structures, different quality checkpoints.

The caveat is that law is not a factory. Legal judgment, client relationships, professional accountability, and regulatory obligations shape what can be automated and how. A manufacturing firm can redesign its assembly line without worrying about whether the conveyor belt owes a duty of care. A law firm cannot outsource professional responsibility to its systems, regardless of how well those systems perform. Any institutional AI redesign in a legal context must account for that constraint from the outset.

UK Regulatory Exposure Firms Are Not Thinking About

If a law firm does move toward institutional AI, particularly AI agents operating with some degree of autonomy across matter coordination, conflict checking, or client-facing outputs, it will encounter regulatory obligations that most current AI strategies are not addressing.

The UK does not yet have sector-specific AI legislation, but the framework is not empty. The ICO's guidance on automated decision-making under UK GDPR applies where AI contributes to decisions with legal or similarly significant effects on individuals. If an AI coordination system flags matters, allocates work, or filters information in ways that affect clients or fee-earners, data protection compliance becomes live. Article 22 UK GDPR restricts decisions based solely on automated processing that produce such effects, so any human review has to be meaningful rather than a rubber stamp.

The Solicitors Regulation Authority's position is still developing, but its Technology and Legal Services guidance makes clear that firms remain fully responsible for the outputs of any technology they deploy. The Bar Standards Board takes the same approach. Delegating a task to an AI system does not dilute professional accountability. The Ayinde judgment illustrated starkly what happens when AI-generated content is relied upon without adequate professional oversight. Institutional AI at scale creates more, not fewer, opportunities for that kind of failure if governance structures are not in place.

Firms pursuing institutional AI redesign should also consider the EU AI Act's likely influence on UK regulatory expectations, even post-Brexit. The Act's high-risk classifications include systems intended to assist in the administration of justice and systems used in employment decisions, such as recruitment, promotion, and task allocation. A UK firm operating across jurisdictions, or advising clients on AI-adjacent matters, will need to understand both regimes.

What This Means in Practice, This Year

The institutional AI argument does not require a firm to rebuild everything simultaneously. It does require a shift in how AI strategy is framed.

Most firms currently ask: which tasks can we use AI to do faster? A better question is: which processes would we design differently if we were building from scratch with AI available? Start with one workflow: a specific practice area's due diligence process, for example, or a defined matter type in litigation. Map it completely. Identify where value is currently lost to coordination failures, information siloing, or repeated work that should be captured institutionally. Then ask what AI could do differently if the process were redesigned around it, not simply dropped into it.

Governance must be built in at this stage, not retrofitted later. Define who is accountable for AI outputs at each decision point. Build audit trails into any AI agent workflow from the beginning. Ensure that human review is genuine, not nominal. The SRA will not accept "the AI flagged it" as a satisfactory answer to a complaint.

Leadership engagement is not optional. Institutional AI redesign requires partners to change how they work, not just how their associates work. If the redesign only touches the work of junior fee-earners, it is not institutional; it is just delegation at scale.

The firms that treat AI as a tool for individual lawyers to use better will find efficiency gains. The firms that treat AI as a reason to rethink how they are organised will find something more durable. That second group, in law at least, is still very small.

Now would be a good time to join it.

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.