---

title: "What If Your Next Legal Automation Script Wrote Itself in Under a Second?"
slug: what-if-your-next-legal-automation-script-wrote-itself-in-under-a-second
date: 2026-02-15
excerpt: "A coding model running at 1,000+ tokens per second changes what lawyers can build without developers. A thought experiment on what that actually means."
category: "AI Models & Capabilities"
tags:
- AI coding
- legal automation
- workflow scripting
- GPT-5.3-Codex-Spark
- knowledge work
- legal technology
heroImage: /images/blog/what-if-your-next-legal-automation-script-wrote-itself-in-under-a-second/hero.jpg
sources:
- title: "Introducing OpenAI GPT-5.3-Codex-Spark Powered by Cerebras"
  url: "https://www.cerebras.ai/blog/openai-codexspark"
- title: "Introducing GPT-5.3-Codex-Spark - OpenAI"
  url: "https://openai.com/index/introducing-gpt-5-3-codex-spark/"
- title: "OpenAI swaps Nvidia for Cerebras with GPT-5.3-Codex-Spark"
  url: "https://www.techzine.eu/news/analytics/138754/openai-swaps-nvidia-for-cerebras-with-gpt-5-3-codex-spark/"
- title: "A new version of OpenAI's Codex is powered by a new dedicated chip"
  url: "https://techcrunch.com/2026/02/12/a-new-version-of-openais-codex-is-powered-by-a-new-dedicated-chip/"
- title: "Introducing GPT‑5.3‑Codex‑Spark - Simon Willison's Weblog"
  url: "https://simonwillison.net/2026/Feb/12/codex-spark/"
seoKeywords:
- AI legal automation
- GPT-5.3-Codex-Spark lawyers
- AI coding for legal workflows
- legal document automation AI
- coding models for knowledge workers
- law firm automation tools
published: true

---

Imagine you need a script that reads a lease, extracts the break clause dates, cross-references them against a calendar of limitation periods, and flags any that fall within 90 days. Today, that request goes to a developer, joins a queue, and comes back in a week or two. Maybe longer.

Now imagine describing that requirement in plain English and watching the code appear, tested and functional, before you finish your coffee.

That is the thought experiment worth having right now. Not because it is science fiction, but because the raw capability to make it real may have just arrived. Several firms we have worked with are already exploring this.
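To make that concrete, here is a minimal Python sketch of only the final step of that request: flagging break dates that fall within 90 days. It assumes the dates have already been extracted from the lease, which is the harder part and the part most in need of human review. It also leaves out the cross-referencing against limitation periods. The clause labels and dates are invented for illustration.

```python
from datetime import date, timedelta

# Hypothetical break dates, assumed to have been extracted from a lease already.
BREAK_DATES = {
    "Tenant break (clause 8.1)": date(2026, 4, 30),
    "Landlord break (clause 8.4)": date(2027, 1, 31),
}


def flag_upcoming(dates: dict[str, date], today: date, window_days: int = 90) -> list[str]:
    """Return the labels of dates falling between today and today + window_days, inclusive."""
    cutoff = today + timedelta(days=window_days)
    return [label for label, d in dates.items() if today <= d <= cutoff]


if __name__ == "__main__":
    print(flag_upcoming(BREAK_DATES, today=date(2026, 2, 15)))  # ['Tenant break (clause 8.1)']
```

The date arithmetic is the trivial part. Whether the extracted dates are right, and whether 90 days is the right window, remain questions for the lawyer.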

## The Speed That Changes the Question

OpenAI has released [GPT-5.3-Codex-Spark](https://openai.com/index/introducing-gpt-5-3-codex-spark/), a coding model optimised to run on Cerebras' Wafer Scale Engine 3 hardware. The headline number: over 1,000 tokens per second at inference. For context, that means generating functional code in something close to real time. Not "wait 30 seconds for a response" real time. Actual, conversational-speed real time.

The model is a smaller, distilled version of GPT-5.3-Codex, tuned specifically for interactive coding tasks. According to [Cerebras' announcement](https://www.cerebras.ai/blog/openai-codexspark), it scores 77.3% on SWE-Bench Pro compared to 64% for its predecessor, while running dramatically faster. It is currently available as a research preview for ChatGPT Pro subscribers.

This is interesting as a product launch. It is far more interesting as a signal about where coding assistance is heading and what that means for professionals who are not developers but who desperately need things built.

## Why Lawyers Should Care About Coding Speed

Most lawyers do not write code. That is precisely the point.

The bottleneck in legal technology adoption has never really been the complexity of the logic. Lawyers deal with complex conditional logic every day. If the tenant has not served notice by the break date, and the landlord has not waived the condition, then the option lapses. That is an algorithm. Lawyers just write it in English rather than Python.
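Written out in Python, that sentence takes a handful of lines. The sketch below is deliberately simplified, and the function name and parameters are hypothetical. It follows the sentence literally, with notice due "by the break date". A real break clause would usually require notice some months earlier and would add service formalities and other conditions.

```python
from datetime import date
from typing import Optional


def option_lapses(
    break_date: date,
    notice_served_on: Optional[date],
    landlord_waived: bool,
) -> bool:
    """Return True if the break option lapses under the simplified rule:
    no notice served by the break date, and no waiver by the landlord."""
    served_in_time = notice_served_on is not None and notice_served_on <= break_date
    return not served_in_time and not landlord_waived


if __name__ == "__main__":
    # No notice served and no waiver: the option lapses.
    print(option_lapses(date(2026, 6, 24), None, landlord_waived=False))  # True
    # Late notice, but the landlord has waived the condition: the option survives.
    print(option_lapses(date(2026, 6, 24), date(2026, 7, 1), landlord_waived=True))  # False
```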

The bottleneck is translation. Getting from "I know exactly what I need this tool to do" to "someone has built it and it works" takes weeks, costs money, and requires a developer who understands enough about law to get the edge cases right. In most firms, that developer is either overbooked or does not exist.

A coding model that generates working scripts at conversational speed collapses that bottleneck. Not partially. Almost entirely.

Think about what a senior associate or partner could build in an afternoon if the cost of trying something were effectively zero. A script to parse Companies House filings and flag PSC changes. A macro that reformats opposing counsel's disclosure list into your firm's standard template. A tool that reads a set of loan agreements and extracts the financial covenants into a comparison table.

None of these are novel ideas. All of them currently sit in a backlog somewhere because the development resource is not available.
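As an illustration of how small some of these jobs are, here is a minimal sketch of the disclosure list example, assuming the list arrives as a CSV file. Both sets of column headings are hypothetical, and so is the date handling: the sketch assumes the source uses ISO dates (YYYY-MM-DD) and converts them to DD/MM/YYYY. A different source format would need a different parsing string.

```python
import csv
import io
from datetime import datetime

# Hypothetical mapping: opposing counsel's headings -> our template headings.
COLUMN_MAP = {"Doc No": "Document ID", "Doc Date": "Date", "Description": "Description"}
TEMPLATE_COLUMNS = ["Document ID", "Date", "Description", "Disclosed by"]


def reformat_disclosure_list(source_csv: str, disclosing_party: str) -> str:
    """Rename columns, convert ISO dates to DD/MM/YYYY and add a 'Disclosed by' column."""
    reader = csv.DictReader(io.StringIO(source_csv))
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=TEMPLATE_COLUMNS, lineterminator="\n")
    writer.writeheader()
    for row in reader:
        # Keep only the columns we know how to map; unknown columns are dropped.
        new_row = {COLUMN_MAP[k]: (v or "").strip() for k, v in row.items() if k in COLUMN_MAP}
        # Assumes YYYY-MM-DD in the source; anything else raises ValueError rather than guessing.
        new_row["Date"] = datetime.strptime(new_row["Date"], "%Y-%m-%d").strftime("%d/%m/%Y")
        new_row["Disclosed by"] = disclosing_party
        writer.writerow(new_row)
    return out.getvalue()


if __name__ == "__main__":
    sample = "Doc No,Doc Date,Description\nD001,2025-03-14,Email from CFO to board\n"
    print(reformat_disclosure_list(sample, "Defendant"))
```

The design choice is to fail loudly on an unexpected date format rather than guess at it. A silently mangled date in a disclosure list is worse than a script that stops.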

## The IP and Data Questions You Cannot Ignore

Speed is seductive. But professionals running client-sensitive information through any AI tool need to think carefully about what happens to that data.

When a lawyer describes a workflow requirement to a coding model, that description will often contain or imply confidential client information. "Parse these loan agreements for Deutsche Bank" is an obvious example. Even more abstract prompts can disclose privileged strategy or commercial terms.

Under UK GDPR, any processing of personal data through a third-party AI model requires a lawful basis and, where the provider is outside the UK, adequate safeguards for international transfers. The fact that you are generating code rather than drafting a letter does not change the data protection analysis. If client names, transaction details, or personal data form part of the prompt, the obligations apply.

There are also intellectual property considerations under the Copyright, Designs and Patents Act 1988. Code generated by an AI model raises unresolved questions about ownership. If the model produces a script that substantially replicates code from its training data, who owns that output? If you deploy it in a client-facing tool, have you introduced a copyright risk into your product? The UK's position on AI-generated works remains unsettled, and the safe answer for now is to treat AI-generated code as requiring the same review you would give to code written by a junior developer you had never worked with before.

[TechCrunch's coverage](https://techcrunch.com/2026/02/12/a-new-version-of-openais-codex-is-powered-by-a-new-dedicated-chip/) notes this is a research preview. The terms of use, data retention policies, and enterprise deployment options will matter enormously for any firm considering serious adoption.

## The Hardware Shift Underneath

One detail worth noting: this is OpenAI's first major model running on non-Nvidia hardware. The partnership with Cerebras, [announced in January 2026](https://www.techzine.eu/news/analytics/138754/openai-swaps-nvidia-for-cerebras-with-gpt-5-3-codex-spark/), uses Cerebras' wafer-scale chips specifically designed for ultra-low-latency inference.

Why does that matter for lawyers? Because competition in the AI hardware market affects pricing, availability, and ultimately the cost of the tools built on top of it. If Cerebras' architecture delivers faster inference at lower cost, that puts downward pressure on the price of AI-assisted coding tools more broadly. Competition lawyers will also be watching whether the OpenAI-Cerebras relationship restricts rivals' access to these capabilities, a question that in the UK could engage the Chapter I prohibition on anti-competitive agreements in the Competition Act 1998. For end users, though, more competition in AI infrastructure generally means better, cheaper tools arriving faster.

[Simon Willison's analysis](https://simonwillison.net/2026/Feb/12/codex-spark/) provides useful technical context on the model's capabilities and limitations. His point about the model being optimised for interactive, iterative coding rather than autonomous long-running tasks is important. This is a tool for working with, not handing off to.

## The Monday Morning Test

So what could you actually do with this on Monday?

If you have access to the research preview, start small. Pick a repetitive task you do manually. Something with clear logic and defined inputs and outputs. Describe it to the model. See what comes back.

Good candidates: reformatting data between systems, extracting specific clauses from a set of documents, generating comparison tables, building simple intake forms, or automating the fiddly spreadsheet manipulations that eat hours of associate time.

Bad candidates (for now): anything requiring legal judgement about the substance of a document, anything touching client-confidential data without proper data processing agreements in place, anything you plan to deploy without testing.
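Of the good candidates, generating comparison tables is among the easiest to check by eye. The minimal sketch below builds a Markdown table with one row per covenant and one column per agreement. It assumes the covenant values have already been extracted and checked by a lawyer. The facility names and figures are invented, covenants are sorted alphabetically, and "n/a" marks a covenant an agreement does not contain.

```python
# Hypothetical values, assumed already extracted from each agreement and checked by a lawyer.
COVENANTS = {
    "Facility A": {"Leverage": "3.50x", "Interest cover": "4.00x"},
    "Facility B": {"Leverage": "3.00x", "Minimum liquidity": "GBP 5m"},
}


def comparison_table(data: dict[str, dict[str, str]], missing: str = "n/a") -> str:
    """Build a Markdown table: one row per covenant, one column per agreement."""
    agreements = list(data)
    # Union of every covenant name across all agreements, sorted alphabetically.
    covenants = sorted({name for terms in data.values() for name in terms})
    lines = [
        "| Covenant | " + " | ".join(agreements) + " |",
        "|---" * (len(agreements) + 1) + "|",
    ]
    for cov in covenants:
        cells = [data[a].get(cov, missing) for a in agreements]
        lines.append(f"| {cov} | " + " | ".join(cells) + " |")
    return "\n".join(lines)


if __name__ == "__main__":
    print(comparison_table(COVENANTS))
```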

The critical discipline is the same one I emphasise in every training session I run: you must be able to verify the output. If you cannot read the code and understand broadly what it does, or if you cannot test it against known inputs to confirm it produces correct results, you are not ready to use it in production.
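Here is a minimal Python sketch of what testing against known inputs can look like. The helper stands in for a piece of AI-generated code that works back a number of months from a break date. Its name and the test cases are hypothetical. The expected answers in `KNOWN_CASES` are worked out by hand before the code is run, and the month-end cases are exactly the sort of edge case a fast model can get silently wrong. Whether clamping to the last day of the month matches the notice provisions of a particular lease is a legal question, not a coding one.

```python
import calendar
from datetime import date


def latest_notice_date(break_date: date, months: int) -> date:
    """Hypothetical generated helper: the same calendar day `months` earlier,
    clamped to the last day of the month where that day does not exist."""
    total = break_date.year * 12 + (break_date.month - 1) - months
    year, month_index = divmod(total, 12)
    month = month_index + 1
    day = min(break_date.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


# Known inputs, with answers worked out by hand before running the code.
KNOWN_CASES = [
    (date(2026, 12, 25), 6, date(2026, 6, 25)),
    (date(2026, 8, 31), 6, date(2026, 2, 28)),   # February has no 31st
    (date(2028, 8, 31), 6, date(2028, 2, 29)),   # leap year
    (date(2026, 3, 15), 3, date(2025, 12, 15)),  # crosses a year boundary
]


def run_known_cases() -> list[str]:
    """Return a description of every case where the helper disagrees with the hand-worked answer."""
    failures = []
    for break_date, months, expected in KNOWN_CASES:
        got = latest_notice_date(break_date, months)
        if got != expected:
            failures.append(f"{break_date} minus {months} months: expected {expected}, got {got}")
    return failures


if __name__ == "__main__":
    problems = run_known_cases()
    print("All known cases pass" if not problems else "\n".join(problems))
```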

A 15x speed increase in code generation is only useful if the human in the loop is competent to review what comes out. Otherwise you are just generating mistakes faster.

## Where This Goes

The trend line matters more than the single product. Coding models are getting faster, more accurate, and cheaper. The gap between "I need a developer" and "I can build this myself" is narrowing quarter by quarter.

For law firms and legal teams, this means the traditional model of outsourcing all technology build to IT departments or external vendors is becoming optional rather than mandatory. Not for everything. Complex integrations and enterprise systems still need professional engineering. But for the long tail of small, specific, high-value automation tasks that currently go unbuilt because they are not worth a formal development project? That changes.

The firms and professionals who will benefit most are those who invest in understanding what these tools can do, where the limits are, and how to use them responsibly. Training is not optional. Governance is not optional. But nor is falling behind because you refused to engage with a technology that your competitors are already using.

If you want to discuss how your team can start building practical AI coding skills with proper safeguards, [get in touch](https://lextrapolate.com/contact). That is what we do.


Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

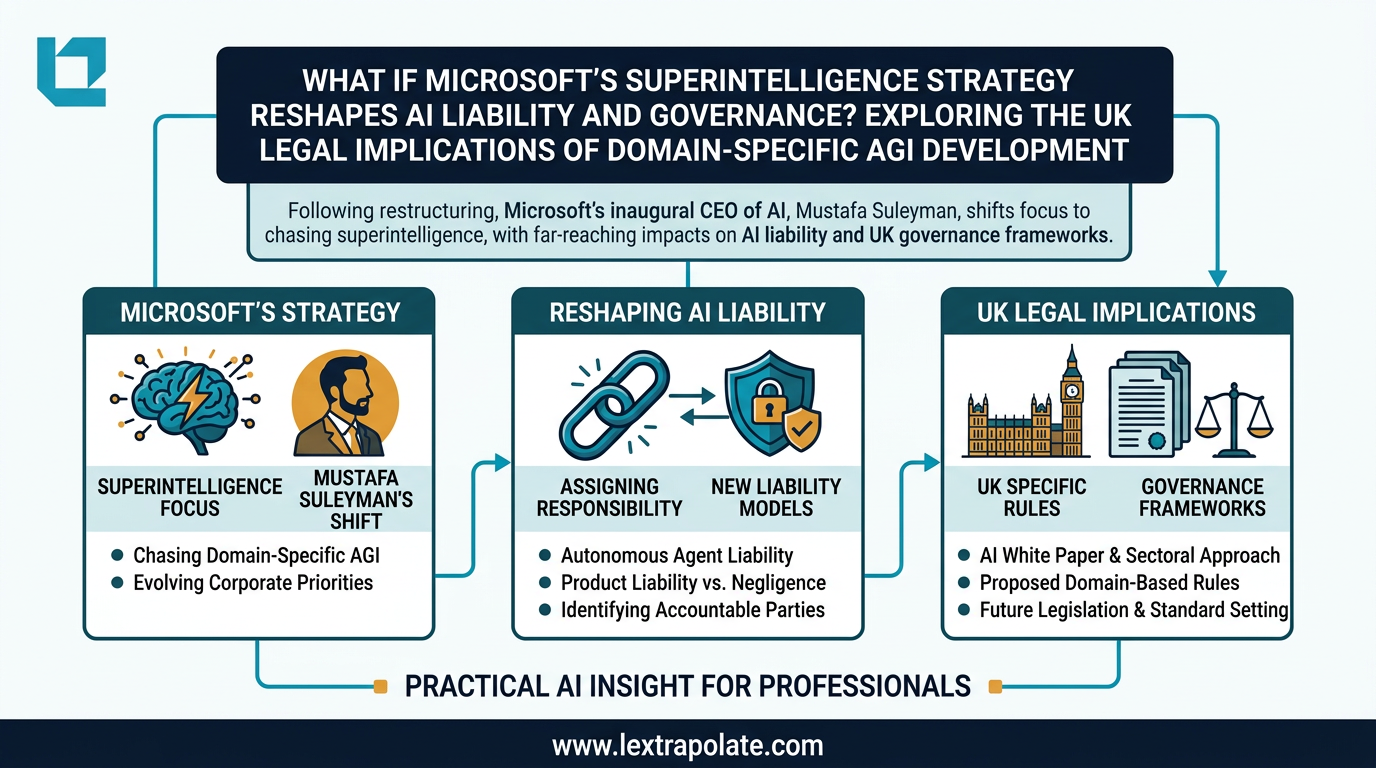

What If Microsoft's Superintelligence Strategy Reshapes AI Liability and Governance? Exploring the UK Legal Implications of Domain-Specific AGI Development

Microsoft's pivot to domain-specific superintelligence raises urgent questions about liability, regulatory classification, and enterprise contract risk for UK professionals.

If AI Agents Become Your Workforce, What Happens to the Law Firm?

A hypothetical scenario playing out in China raises a serious question for legal practice: can a solo lawyer with AI agents compete with a mid-sized firm?

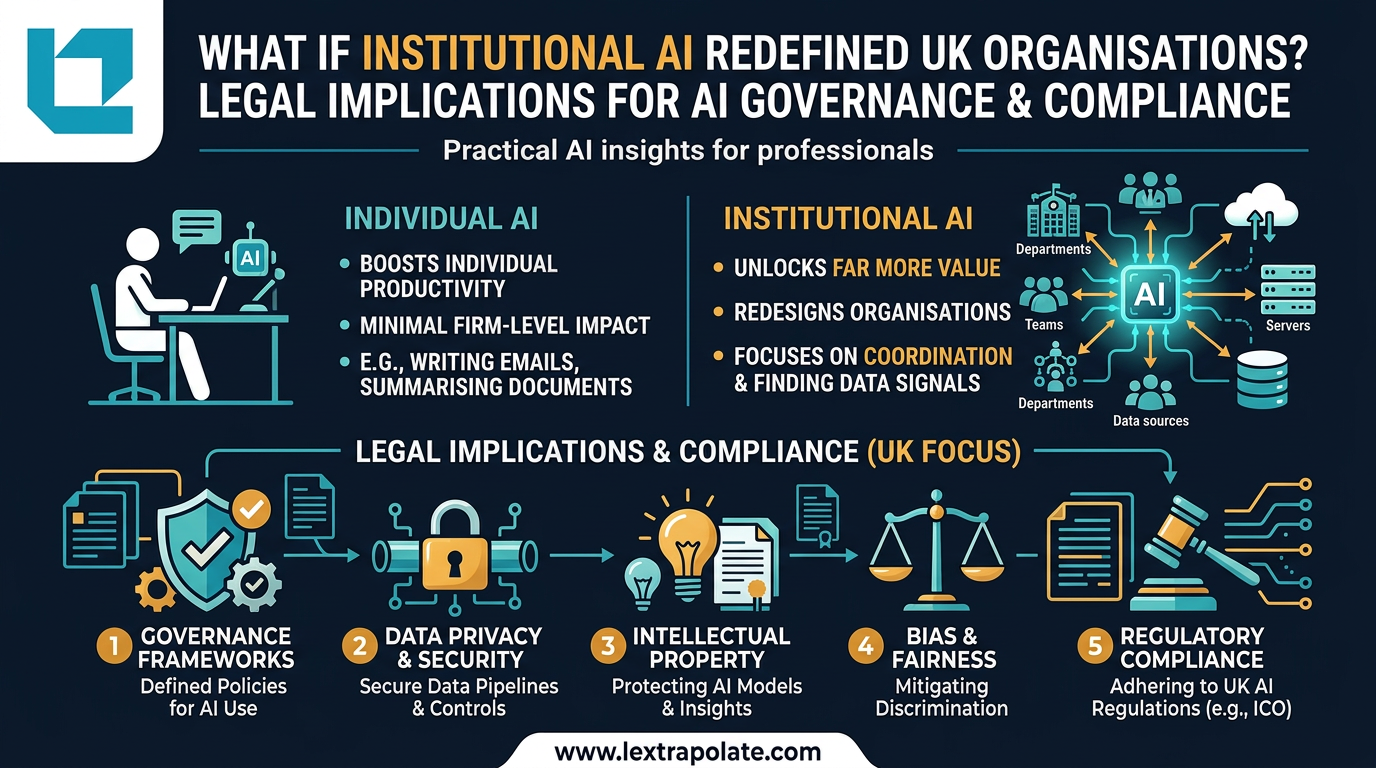

Your AI Is Working Hard. Your Firm Is Not: The Case for Institutional AI in Law

Individual AI makes lawyers faster. Institutional AI could remake law firms entirely. Most firms are pursuing the former and missing the latter.