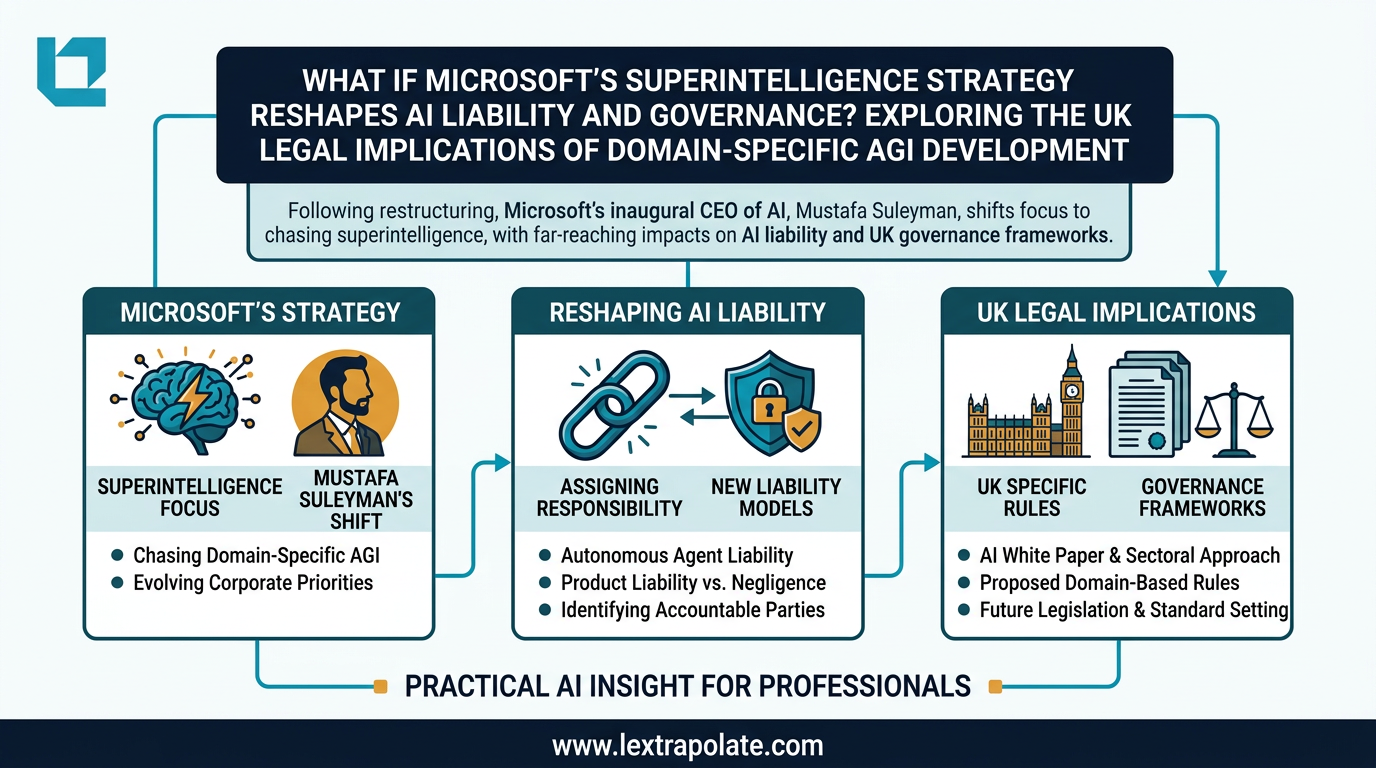

What If Microsoft's Superintelligence Strategy Reshapes AI Liability and Governance? Exploring the UK Legal Implications of Domain-Specific AGI Development

Disclaimer: Lextrapolate is a consultancy founded by a practising barrister. This article provides high-level analysis for educational and informational purposes only and does not constitute legal advice. Analysis of statutory interpretation is for educational purposes only.

Your firm's due diligence workflow runs on Microsoft Copilot. Your client's compliance function uses Azure AI. Their financial services regulator is asking questions about AI governance.

Now Microsoft has announced a major restructuring of its AI leadership to pursue a stated ambition for superintelligence. Who carries the liability if something goes wrong in that chain? That question is not hypothetical. It is the one UK professionals need to start answering now.

What Microsoft Has Actually Done

In mid-March 2026, Microsoft restructured its AI division. Mustafa Suleyman, CEO of Microsoft AI (reporting to Microsoft Corp CEO Satya Nadella), shifted focus to lead Microsoft's stated ambition for "Humanist Superintelligence" (HSI). According to reports by TechBuzz.ai, Suleyman indicated he had been planning this strategic shift for approximately nine months, which would place the start of the decision-making process around mid-2025, well before the public announcement.

The structural shift was made possible by a renegotiated contract with OpenAI, announced in October 2025, which removed restrictions on Microsoft developing Artificial General Intelligence (AGI) independently through 2030.

It is important to distinguish between 'Superintelligence', Microsoft's current branding for its high-capability roadmap, and 'AGI', which serves as the specific contractual trigger for independence from OpenAI. 'Superintelligence' currently represents a branding and strategic ambition rather than a verified technical milestone: no independent technical verification of progress towards it is available.

Microsoft is no longer simply a distributor of OpenAI's technology. It is now an independent AGI developer with its own philosophical framework and its own commercial roadmap. The HSI framework, set out in Microsoft's own published position, presents this as deliberately bounded superintelligence: domain-specific, calibrated, and designed to keep humans in control rather than to produce autonomous systems with unconstrained agency.

Regulatory Classification and the Domain-Specific Distinction

Under the EU AI Act (Regulation (EU) 2024/1689), which applies to organisations whose AI outputs are used within the EU, AI systems are classified by risk level. Article 51 of the Act establishes the criteria for classifying General Purpose AI (GPAI) models with systemic risk.

Specifically, Article 51(2) presumes that a GPAI model has high-impact capabilities, and so falls to be classified as presenting systemic risk, if the cumulative compute used for its training is greater than 10^25 floating point operations (FLOPs). The presumption is rebuttable. The European Commission has the power to amend this threshold by delegated act as models evolve, and readers should verify whether any such act has been adopted since 2024, but the 10^25 FLOPs baseline remains the primary technical threshold for systemic risk classification.
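To make the threshold concrete, the short sketch below compares an estimated training-compute figure with the 10^25 baseline. It is illustrative only. The "6 × parameters × training tokens" estimate is a commonly used rule of thumb for dense models and is an assumption here, not something the Act prescribes; the function names and the example model figures are hypothetical.

```python
# Illustrative sketch only: not a legal test and not an official methodology.

# Article 51(2) baseline; verify whether a later delegated act has changed it.
SYSTEMIC_RISK_THRESHOLD_FLOPS = 1e25


def estimate_training_flops(parameters: float, training_tokens: float) -> float:
    """Rough compute estimate using the 6 * N * D heuristic (an assumption, not from the Act)."""
    return 6.0 * parameters * training_tokens


def exceeds_presumption_threshold(training_flops: float) -> bool:
    """True only when compute is strictly greater than the threshold."""
    return training_flops > SYSTEMIC_RISK_THRESHOLD_FLOPS


if __name__ == "__main__":
    # Hypothetical model: 70 billion parameters trained on 15 trillion tokens.
    flops = estimate_training_flops(70e9, 15e12)
    print(f"Estimated training compute: {flops:.2e} FLOPs")
    print("Presumption triggered:", exceeds_presumption_threshold(flops))
```

In practice a deploying firm will rarely know a vendor's training compute, so the useful question for a vendor questionnaire is whether the provider itself treats the model as falling above or below the threshold.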

This threshold matters for firms assessing whether a vendor's new 'superintelligent' models fall within the more stringent systemic risk tier. In the UK, there is no single statutory AI Act; instead, regulators such as the FCA and the ICO exercise existing statutory powers that create de facto requirements.

Furthermore, the Automated Vehicles Act 2024 provides a conceptual touchstone for statutory liability in autonomous systems. Although limited to the transport sector, the Act offers a legislative model for how UK law might eventually address autonomous decision-making and liability in enterprise software.

Liability, Indemnity, and the New Contractual Risk Profile

Microsoft's independent development path changes the potential liability chain. When a domain-specific system produces an error, attributing fault between model architecture and deployment configuration becomes more complex.

In 2026, the legal analysis is moving beyond the Consumer Protection Act 1987 toward frameworks specifically addressing autonomous systems. Although the Law Commission has carried out work on 'Digital Assets', the primary vehicle for UK reform of product safety and AI-driven harms is the Product Regulation and Metrology Act framework and associated DSIT consultations. Accurate risk mapping depends on keeping these government-led product safety reforms separate from the Law Commission's broader work on intangible assets.

Enterprise contracts for AI tools typically assume a stable system; however, HSI development implies a roadmap of iterative capability growth. Standard SaaS limitation of liability clauses were not written to account for a system that may materially change its cognitive profile mid-contract.

Practical Considerations for Legal Teams

Legal teams may wish to examine three areas before the next renewal cycle:

- Capability Change Provisions: Review whether contracts provide visibility or audit rights if HSI development produces material changes to regulated workflows.

- Indemnity and Regulatory Alignment: Ensure indemnity provisions support your ability to demonstrate compliance to regulators like the FCA or ICO, who have issued specific AI governance guidance.

- AI Risk Registers: Update registers to reflect that vendors have now publicly stated an ambition to build systems with materially greater cognitive capability than earlier productivity assistants.
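The three areas above can be captured in a simple structured register entry. The sketch below is one hypothetical way to record them; the class name, field names, and example values are all illustrative assumptions and do not reflect any regulator's required format.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class VendorAIRiskEntry:
    """One hypothetical risk-register row for a vendor-supplied AI system."""

    system: str
    vendor: str
    regulated_workflows: list[str]   # workflows a regulator may ask about
    capability_change_notice: bool   # contract requires notice of material capability changes
    audit_rights: bool               # contract grants audit or visibility rights
    incident_reporting: bool         # contract requires the vendor to report incidents
    last_reviewed: date

    def contractual_gaps(self) -> list[str]:
        """Return the names of the contractual protections this entry lacks."""
        checks = {
            "capability change notice": self.capability_change_notice,
            "audit rights": self.audit_rights,
            "incident reporting": self.incident_reporting,
        }
        return [name for name, present in checks.items() if not present]


# Hypothetical example entry; every value here is made up for illustration.
entry = VendorAIRiskEntry(
    system="Example AI assistant",
    vendor="Example vendor",
    regulated_workflows=["due diligence review"],
    capability_change_notice=False,
    audit_rights=True,
    incident_reporting=False,
    last_reviewed=date(2026, 3, 31),
)
print(entry.contractual_gaps())
```

Listing the missing protections per system gives a renewal team a short agenda for each vendor negotiation rather than a general sense that the contracts need attention.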

The Governance Gap

Microsoft's HSI framework is a thoughtful philosophical posture, but philosophy is not enforcement architecture. There is currently no UK regulatory body equipped to independently audit whether a vendor's claims about human oversight are accurate at the level of system design.

The sensible response is to use contractual architecture—audit rights, incident reporting, and capability change notices—to build the governance structure that regulation has not yet fully provided.

Microsoft has made its strategic direction clear; professionals must ensure their contractual protections are equally robust.



Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

If AI Agents Become Your Workforce, What Happens to the Law Firm?

A hypothetical scenario playing out in China raises a serious question for legal practice: can a solo lawyer with AI agents compete with a mid-sized firm?

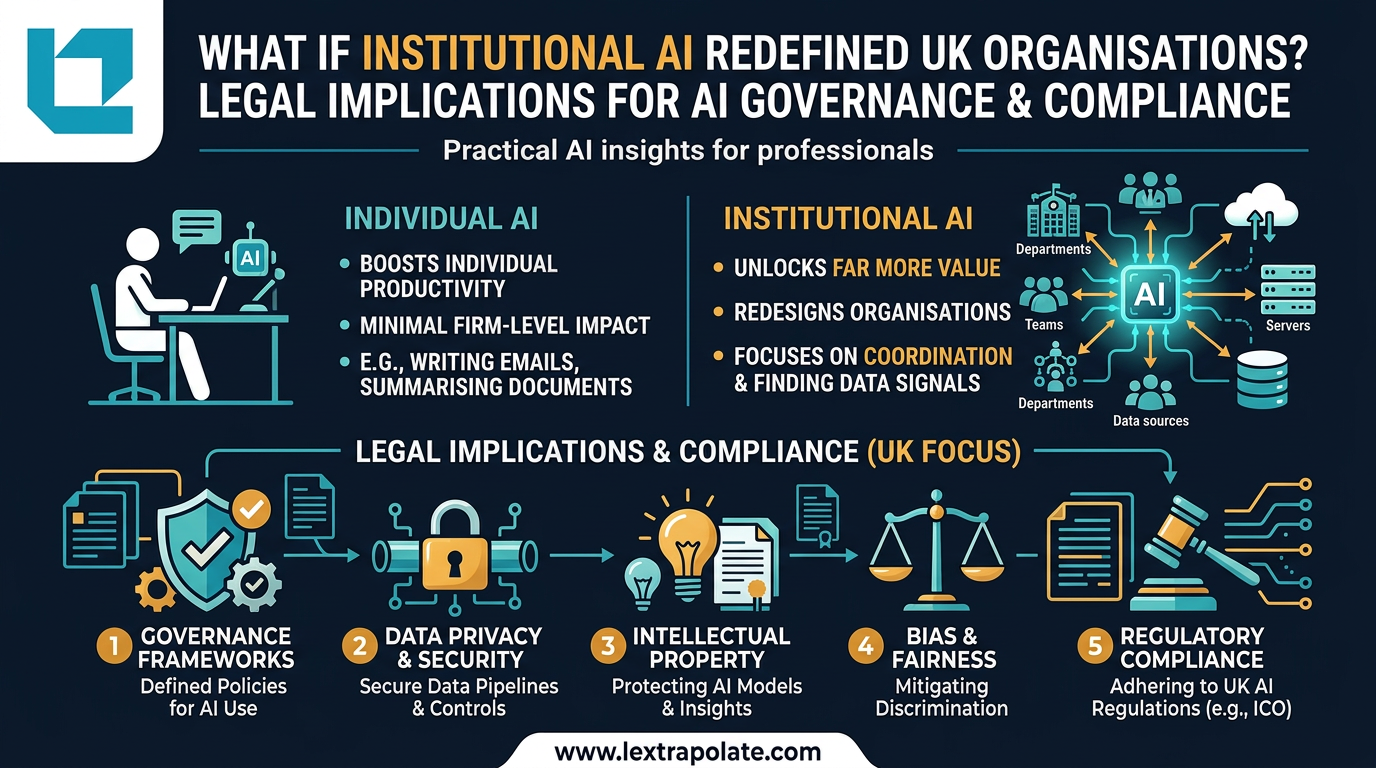

Your AI Is Working Hard. Your Firm Is Not: The Case for Institutional AI in Law

Individual AI makes lawyers faster. Institutional AI could remake law firms entirely. Most firms are pursuing the former and missing the latter.

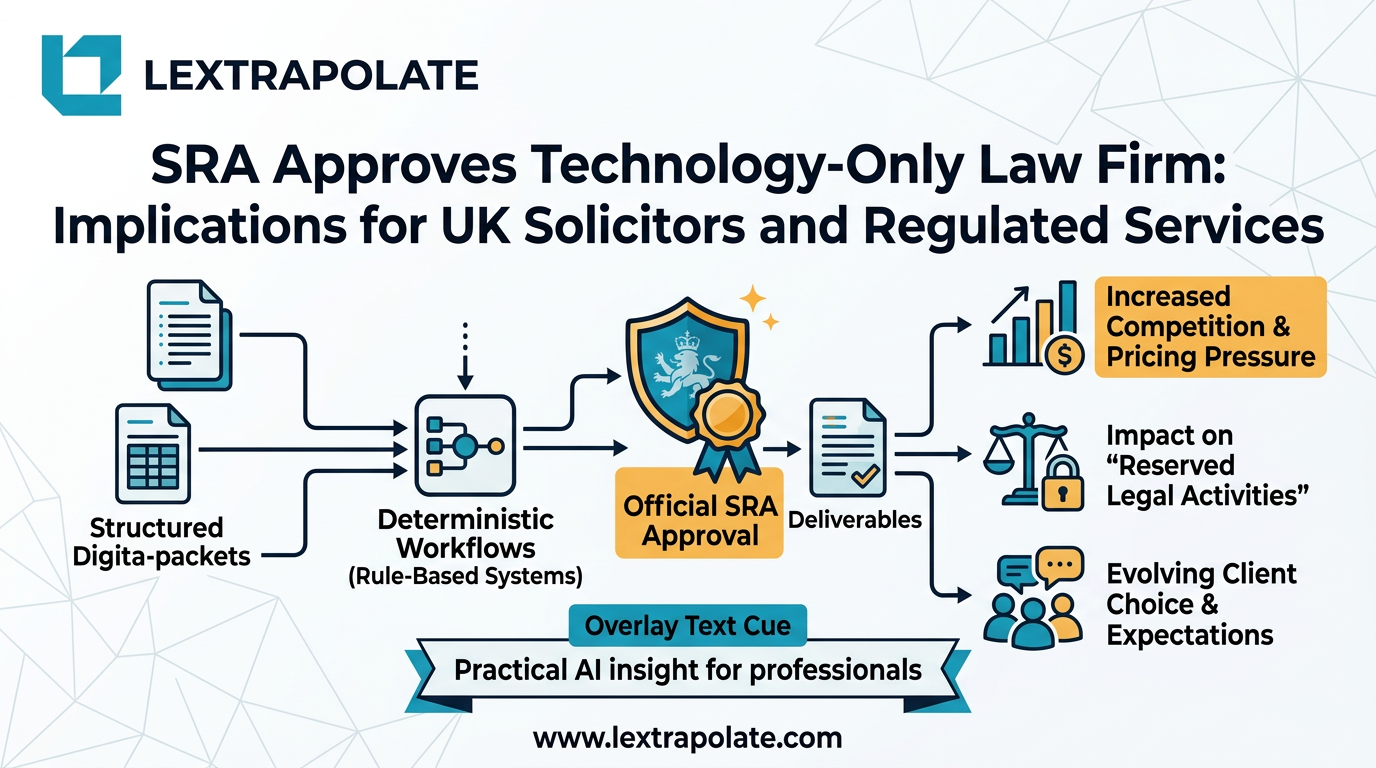

Lawyerless Law Firms: What the SRA's Approval of Deterministic Legal Tech Actually Means

The SRA has authorised a firm that delivers regulated legal services without lawyers. Here is what that precedent means for UK solicitors and the profession.