What If AI Agent Teams Could Draft Your Entire Case Bundle?

Imagine this. You instruct a team of AI agents on a Monday morning. One reads the entire trial bundle. Another drafts the skeleton argument. A third builds the chronology in PowerPoint for the client meeting. They work simultaneously, cross-referencing each other's output, and by lunchtime you have a first draft of everything.

That is not science fiction. The underlying technology now exists. The question is whether the legal profession is ready for it, and whether the competitive landscape around these tools will let practitioners access them on fair terms.

The technology that makes this real

Anthropic released Claude Opus 4.6 in early February 2026. Two features matter for lawyers.

First, "agent teams": multiple AI agents can now split a single project and work on different elements simultaneously, then synthesise their outputs. This is not one chatbot answering one question. It is coordinated, parallel, autonomous work across a complex task.

Second, a one-million-token context window. To put that in practical terms, one million tokens is roughly 750,000 words. That is a substantial disclosure exercise, a lengthy contract suite, or several lever arch files of witness statements, all held in the model's working context at once. Gadgets360 reported that this brings the Opus tier in line with what Sonnet previously offered for heavy document work.
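To make that arithmetic concrete, here is a rough sketch in Python of how you might sanity-check whether a bundle would fit. It assumes the ratio implied by the figures above (about 0.75 words per token), which varies with the text and the tokeniser. The function names, the reserve figure and the sample word counts are all hypothetical, chosen purely for illustration.

```python
# Assumption: roughly 0.75 words per token, the ratio implied by
# "one million tokens is roughly 750,000 words". Real ratios vary.
WORDS_PER_TOKEN = 0.75
CONTEXT_WINDOW_TOKENS = 1_000_000


def estimated_tokens(word_count: int) -> int:
    """Rough token estimate for a document of the given word count."""
    return round(word_count / WORDS_PER_TOKEN)


def fits_in_context(word_counts: list[int], reserve_tokens: int = 50_000) -> bool:
    """True if all documents fit while leaving reserve_tokens spare.

    The reserve is a hypothetical allowance for instructions and output.
    """
    total = sum(estimated_tokens(words) for words in word_counts)
    return total <= CONTEXT_WINDOW_TOKENS - reserve_tokens


# Hypothetical bundle: word counts for three sets of documents.
bundle = [220_000, 310_000, 150_000]
print(sum(estimated_tokens(words) for words in bundle))
print(fits_in_context(bundle))
```

An estimate like this is only a first pass. Before relying on it, you would count tokens with the tokeniser for the model you actually use.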

Add to that new integrations letting the model work natively inside Excel and PowerPoint, reading existing templates, building financial models and presentation decks without copy and paste, and you have the skeleton of an end-to-end document production pipeline.

Now. What if you pointed all of that at legal work?

From chatbot to chambers: the thought experiment

Let us be precise about the shift. Most lawyers using AI today are doing something like sophisticated search or first-draft generation. You paste text in, you get text out, you revise heavily. That is useful. It is also limited.

The agentic model is qualitatively different. Picture a disclosure review where one agent reads and categorises documents, a second identifies privileged material, and a third produces the schedule, all working concurrently against the same million-token context. Or a due diligence exercise where parallel agents tackle different contract categories and produce a unified report.
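For readers who want to see the shape of that workflow, here is a minimal sketch of the fan-out and synthesise pattern in Python. It is not Anthropic's API or how agent teams are implemented. The three "agents" are hypothetical stand-in functions using crude keyword rules where a real system would call a model, and as a simplification the schedule is built only once the other two have finished.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical stand-ins for model-backed agents. The keyword rules below
# are placeholders for illustration, not a real review method.


def categorise(docs: dict[str, str]) -> dict[str, str]:
    """Agent one: assign each document a category."""
    return {
        doc_id: "correspondence" if "Dear" in text else "other"
        for doc_id, text in docs.items()
    }


def flag_privileged(docs: dict[str, str]) -> set[str]:
    """Agent two: flag documents that may be privileged."""
    return {doc_id for doc_id, text in docs.items() if "legal advice" in text.lower()}


def build_schedule(categories: dict[str, str], privileged: set[str]) -> list[str]:
    """Agent three: combine both outputs into a single schedule."""
    schedule = []
    for doc_id, category in sorted(categories.items()):
        suffix = " (privileged, withheld)" if doc_id in privileged else ""
        schedule.append(f"{doc_id}: {category}{suffix}")
    return schedule


def run_review(docs: dict[str, str]) -> list[str]:
    """Run the first two agents in parallel, then synthesise their results."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        categories_future = pool.submit(categorise, docs)
        privileged_future = pool.submit(flag_privileged, docs)
        categories = categories_future.result()
        privileged = privileged_future.result()
    return build_schedule(categories, privileged)


docs = {
    "DOC-001": "Dear Sir, please find the invoice enclosed.",
    "DOC-002": "Note of legal advice from counsel on limitation.",
}
for line in run_review(docs):
    print(line)
```

The point to notice is the handoff: the schedule is only as good as the two outputs it merges, which is exactly where human supervision has to bite.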

The DataCamp analysis of Opus 4.6 noted that the model topped most agentic benchmarks, including a significant leap on ARC-AGI-2 to nearly 70%. These benchmarks test the model's ability to handle multi-step, autonomous tasks, precisely the kind of complex, sequential reasoning that legal document production demands.

This is not about replacing the solicitor or barrister. It is about replacing the hours of mechanical assembly that currently sit between "I know what the argument is" and "here is the finished document." The judgement remains yours. The grunt work becomes something you supervise rather than perform.

The competition law question nobody is asking

Here is where it gets uncomfortable. If agentic AI becomes genuinely transformative for legal productivity, the firms that access the best models first gain an enormous competitive advantage. And the market for frontier AI models is concentrating fast.

Opus 4.6 is available through Google Cloud's Vertex AI and Microsoft's Azure. OpenAI, meanwhile, launched Frontier, its enterprise platform for deploying AI agents with onboarding, permissions, and performance reviews built in. These are not neutral infrastructure plays. They are strategic integrations between the world's most powerful AI labs and the world's dominant cloud platforms.

Under the Competition Act 1998, the CMA already has the tools to scrutinise agreements and conduct that distort competition. But the Digital Markets, Competition and Consumers Act 2024 (DMCCA) was designed specifically for this kind of scenario: digital markets where network effects and vertical integration can lock out competitors before a market fully forms.

Consider the position of a mid-size law firm evaluating agentic AI. The best models are available only through specific cloud partnerships. The integrations work natively with Microsoft Office. If your firm's infrastructure does not align with those partnerships, you face higher switching costs, slower adoption, and ultimately inferior tools. That is a textbook interoperability concern under the DMCCA's Strategic Market Status framework.

The CMA's ongoing scrutiny of partnerships between frontier AI labs and Big Tech (think the Microsoft/OpenAI relationship and Google's investment in Anthropic) is directly relevant here. If agentic AI becomes a core input to professional services, the question of who controls access to the best models, and on what terms, becomes a competition law issue that affects every practitioner.

The professional regulation gap

Even setting aside competition, the regulatory framework for agentic AI in legal practice barely exists.

The SRA's guidance on AI use remains focused on the chatbot paradigm: check your outputs, do not feed in client data without proper safeguards, maintain competence. That is necessary but insufficient for a world where multiple agents work autonomously on your client's matter.

Who is responsible when Agent Three's PowerPoint chronology contains an error that Agent One's document review should have caught? The answer under current professional conduct rules is clear: you are. But the practical challenge of supervising parallel autonomous agents working across a million tokens of context is fundamentally different from proofreading a single AI-generated draft.

The Opus 4.6 system card addresses safety evaluations and risk mitigations. But Anthropic's safety framework is designed for general use. It does not account for the specific duties that attach to legal work: duties to the court, duties of confidentiality, the cab rank rule's implications for how and when you can delegate.

Training matters here more than ever. The Ayinde case demonstrated what happens when practitioners use AI without understanding its limitations. Scale that to multi-agent workflows and the potential for compounding errors increases significantly.

What this means for Monday morning

Let me bring this back to earth with five concrete points.

1. Understand the architecture before you adopt it. "Agent teams" is not a marketing term. It describes a specific technical capability where multiple AI instances collaborate autonomously. If you are going to use this in practice, you need to understand what each agent can and cannot do, and where the handoff points create risk.

2. Map your workflows before you automate them. The firms that will benefit most from agentic AI are those that already have disciplined, documented processes. If your current approach to bundle preparation is "it depends on who's doing it," an AI agent team will replicate that inconsistency at scale.

3. Watch the competition law landscape. If your firm is being steered towards a particular cloud provider or AI vendor because of integration lock-in, that is a strategic risk. The DMCCA gives the CMA new tools to intervene in digital markets. Know your options and maintain them.

4. Invest in training now, not later. Every month that passes without structured AI training for your team is a month where bad habits calcify. Agentic workflows will amplify whatever level of competence, or incompetence, your people bring to them.

5. Engage with your regulator. The SRA, the BSB, and CILEx need to hear from practitioners about how agentic AI will change supervisory obligations. If you wait for the regulators to work this out on their own, the guidance will arrive late and fit poorly.

The real question

This thought experiment is not really about technology. It is about readiness.

The capability to run multi-agent AI workflows on large-scale legal documents exists today. The question is whether the legal profession will adopt it thoughtfully, with proper training, robust supervision, and eyes open to the competitive dynamics shaping the market, or whether we will stumble into it the way we stumbled into everything from email to electronic bundles.

I know which outcome the profession's track record suggests. I also know it does not have to be that way.

If you are thinking about how agentic AI fits into your practice, or if you are a firm leader trying to work out what investment in this technology actually looks like, get in touch. The window for getting ahead of this is open. It will not stay open forever.

Chris Jeyes

Barrister & Leading Junior

Founder of Lextrapolate. 20+ years at the Bar. Legal 500 Leading Junior. Helping lawyers and legal businesses use AI effectively, safely and compliantly.

Get in TouchMore from Lextrapolate

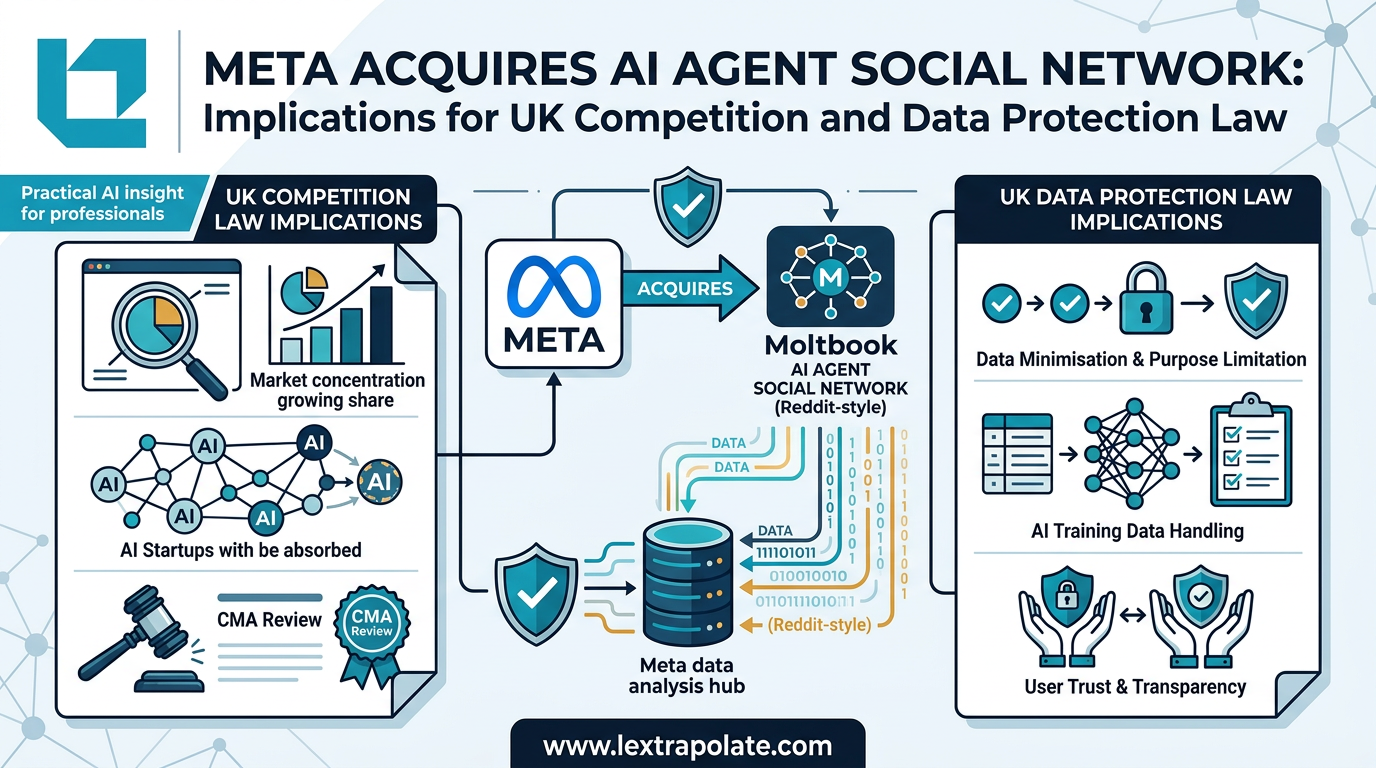

AI Agents Talking to Each Other: What It Means When Social Networks Go Autonomous

Meta has acquired a platform built for AI-to-AI communication. The regulatory and practical implications for UK professionals are worth examining carefully.

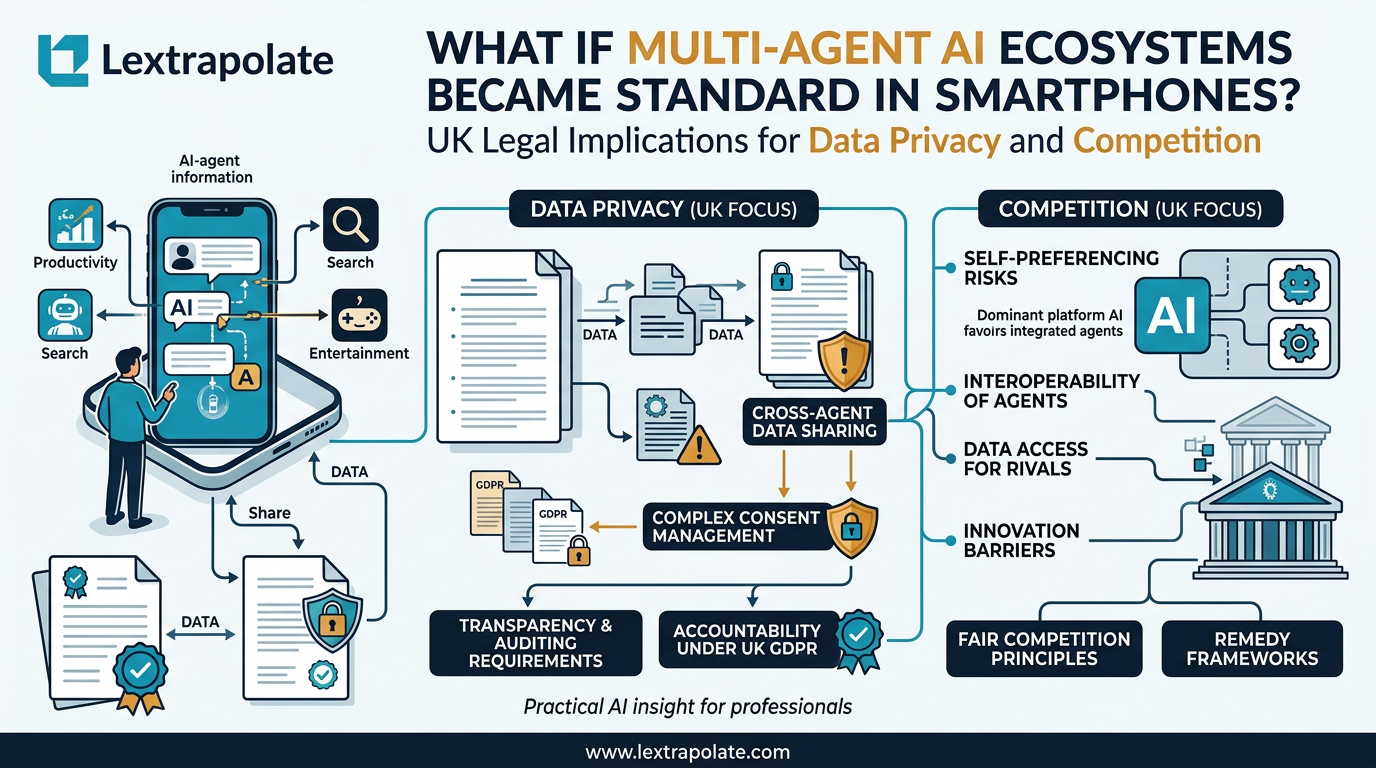

When Your Phone Becomes a Research Assistant: The Legal Questions Multi-Agent AI Raises

AI agents embedded at OS level change how professionals research on the move. The concept is significant. The legal questions it raises are more significant still.

Zach + Richard's Legal AI Insights: What UK Lawyers Need to Know About Anthropic's Legal Push and AI 2.0

Abramowitz and Tromans dissect Anthropic's legal market entry and what AI 2.0 means for UK lawyers. Practical takeaways from their latest Legal AI Adventure.